The law that entropy always increases, holds, I think, the supreme position among the laws of Nature. If someone points out to you that your pet theory of the universe is in disagreement with Maxwell’s equations — then so much the worse for Maxwell’s equations. If it is found to be contradicted by observation — well, these experimentalists do bungle things sometimes. But if your theory is found to be against the second law of thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation.

– Eddington

My favorite part of physics is statistical mechanics.

This wasn’t the case when it was first presented to me – it seemed fairly ugly and complicated compared to the elegant and deep formulations of classical mechanics and quantum mechanics. There were too many disconnected rules and special cases messily bundled together to match empirical results. Unlike the rest of physics, I failed to see the same sorts of deep principles motivating the equations we derived.

Since then I’ve realized that I was completely wrong. I’ve come to appreciate it as one of the deepest parts of physics I know, and mentally categorize it somewhere in the intersection of physics, math, and philosophy.

This post is an attempt to convey how statistical mechanics connects these fields, and to show concisely how some of the standard equations of statistical mechanics arise out of deep philosophical principles.

***

The fundamental goal of statistical mechanics is beautiful. It answers the question “How do we apply our knowledge of the universe on the tiniest scale to everyday life?”

In doing so, it bridges the divide between questions about the fundamental nature of reality (What is everything made of? What types of interactions link everything together?) and the types of questions that a ten-year-old might ask (Why is the sky blue? Why is the table hard? What is air made of? Why are some things hot and others cold?).

Statistical mechanics peeks at the realm of quarks and gluons and electrons, and then uses insights from this realm to understand the workings of the world on a scale a factor of $10^{21}$ larger.

Wilfrid Sellars described philosophy as an attempt to reconcile the manifest image (the universe as it presents itself to us, as a world of people and objects and purposes and values), and the scientific image (the universe as revealed to us by scientific inquiry, empty of purpose, empty of meaning, and animated by simple exact mathematical laws that operate like clockwork). This is what I see as the fundamental goal of statistical mechanics.

What is incredible to me is how elegantly it manages to succeed at this. The universality and simplicity of the equations of statistical mechanics are astounding, given the type of problem we’re dealing with. Physicists would like to say that once they’ve figured out the fundamental equations of physics, then we understand the whole universe. Rutherford said that “all science is either physics or stamp collecting.” But you try to take some laws that tell you how two electrons interact, and then answer questions about how $10^{23}$ electrons will behave when all crushed together.

The miracle is that we can do this, and not only can we do it, but we can do it with beautiful, simple equations that are loaded with physical insight.

There’s an even deeper connection to philosophy. Statistical mechanics is about epistemology. (There’s a sense in which all of science is part of epistemology. I don’t mean this. I mean that I think of statistical mechanics as deeply tied to the philosophical foundations of epistemology.)

Statistical mechanics doesn’t just tell us what the world should look like on the scale of balloons and oceans and people. Some of the most fundamental concepts in statistical mechanics are ultimately about our state of knowledge about the world. It contains precise laws telling us what we can know about the universe, what we should believe, how we should deal with uncertainty, and how this uncertainty is structured in the physical laws.

While the rest of physics searches for perfect objectivity (taking the “view from nowhere”, in Nagel’s great phrase), statistical mechanics has one foot firmly planted in the subjective. It is an epistemological framework, a theory of physics, and a piece of beautiful mathematics all in one.

***

Enough gushing.

I want to express some of these deep concepts I’ve been referring to.

First of all, statistical mechanics is fundamentally about probability.

It accepts that trying to keep track of the positions and velocities of $10^{23}$ particles all interacting with each other is futile, regardless of how much you know about the equations guiding their motion.

And it offers a solution: Instead of trying to map out all of the particles, let’s coarse-grain our model of the universe and talk about the likelihood that a given particle is in a given position with a given velocity.

As soon as we do this, our theory is no longer just about the universe in itself, it is also about us, and our model of the universe. Equations in statistical mechanics are not only about external objective features of the world; they are also about properties of the map that we use to describe it.

This is fantastic and I think really under-appreciated. When we talk about the results of the theory, we must keep in mind that these results must be interpreted in this joint way. I’ve seen many misunderstandings arise from failures of exactly this kind, like when people think of entropy as a purely physical quantity and take the second law of thermodynamics to be solely a statement about the world.

But I’m getting ahead of myself.

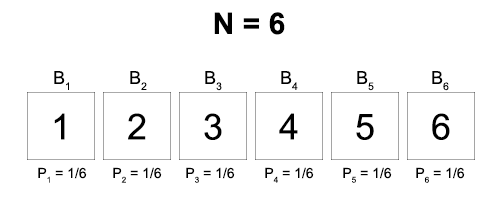

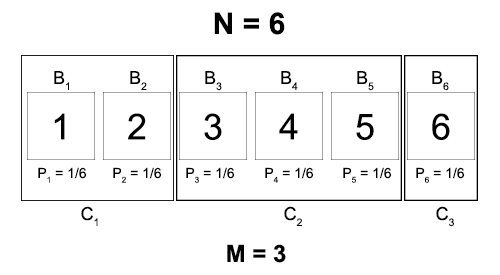

Statistical mechanics is about probability. So if we have a universe consisting of $N = 10^{80}$ particles, then we will create a function P that assigns a probability to every possible position for each of these particles at a given moment:

$$P(x_1, y_1, z_1, x_2, y_2, z_2, \ldots, x_N, y_N, z_N)$$

P is a function of $3 \cdot 10^{80}$ values… this looks super complicated. Where’s all this elegance and simplicity I’ve been gushing about? Just wait.

The second fundamental concept in statistical mechanics is entropy. I’m going to spend way too much time on this, because it’s really misunderstood and really important.

Entropy is fundamentally a measure of uncertainty. It takes in a model of reality and returns a numerical value. The larger this value, the more coarse-grained your model of reality is. And as this value approaches zero, your model approaches perfect certainty.

Notice: Entropy is not an objective feature of the physical world!! Entropy is a function of your model of reality. This is very very important.

So how exactly do we define the entropy function?

Say that a masked inquisitor tells you to close your eyes and hands you a string of twenty 0s and 1s. They then ask you what your uncertainty is about the exact value of the string.

If you don’t have any relevant background knowledge about this string, then you have no reason to suspect that any digit in the string is more likely to be a 0 than a 1 or vice versa. So perhaps your model places equal likelihood in every possible string. (This corresponds to a probability of ½ • ½ • … • ½ twenty times, or $1/2^{20}$).

The entropy of this model is 20.

Now your inquisitor allows you to peek at only the first number in the string, and you see that it is a 1.

By the same reasoning, your model is now an equal distribution of likelihoods over all strings that start with 1.

The entropy of this model? 19.

If now the masked inquisitor tells you that he has added five new numbers at the end of your string, the entropy of your new model will be 24.

The idea is that if you are processing information right, then every time you get a single bit of information, your entropy should decrease by exactly 1. And every time you “lose” a bit of information, your entropy should increase by exactly 1.

In addition, when you have perfect knowledge, your entropy should be zero. This means that the entropy of your model can be thought of as the number of pieces of binary information you would have to receive to have perfect knowledge.

How do we formalize this?

Well, your initial model (back when there were 20 numbers and you had no information about any of them) gave each outcome a probability of $P = 1/2^{20}$. How do we get a 20 out of this? Simple!

$$\text{Entropy} = S = \log_2(1/P)$$

(Yes, entropy is denoted by S. Why? Don’t ask me, I didn’t invent the notation! But you’ll get used to it.)

We can check if this formula still works out right when we get new information. When we learned that the first number was a 1, half of our previous possibilities disappeared. Given that the others are all still equally likely, our new probabilities for each should double from $1/2^{20}$ to $1/2^{19}$.

And $S = \log_2\left(\frac{1}{1/2^{19}}\right) = \log_2(2^{19}) = 19$. Perfect!

What if you now open your eyes and see the full string? Well now your probability distribution is 0 over all strings except the one you see, which has probability 1.

So $S = \log_2(1/1) = \log_2(1) = 0$. Zero entropy corresponds to perfect information.
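The inquisitor example can be checked in a few lines. This is only a sketch of the uniform case described above; the function name `uniform_entropy_bits` is my own label, not standard terminology.

```python
import math

def uniform_entropy_bits(num_unknown_digits: int) -> float:
    """Entropy in bits of a model that spreads probability evenly
    over every binary string with this many unknown digits."""
    p = 1 / 2 ** num_unknown_digits   # probability of each individual string
    return math.log2(1 / p)           # S = log2(1/P)

print(uniform_entropy_bits(20))  # nothing known about 20 digits -> 20.0
print(uniform_entropy_bits(19))  # first digit revealed          -> 19.0
print(uniform_entropy_bits(24))  # five unseen digits appended   -> 24.0
print(uniform_entropy_bits(0))   # whole string seen             -> 0.0
```

Each revealed digit halves the number of candidate strings, which is why the value drops by exactly one per bit learned.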

This is nice, but it’s a simple idealized case. What if we only get partial information? What if the masked stranger tells you that they chose the numbers by running a process that 80% of the time returns 0 and 20% of the time returns 1, and you’re fifty percent sure they’re lying?

In general, we want our entropy function to be able to handle models more sophisticated than just uniform distributions with equal probabilities for every event. Here’s how.

We can write out any arbitrary probability distribution over N possible outcomes as follows:

$(P_1, P_2, \ldots, P_N)$

As we’ve seen, if they were all equal then we would just find the entropy according to the previous equation: $S = \log_2(1/P)$.

But if they’re not equal, then we can just find the weighted average! In other words:

$$S = \operatorname{mean}\left(\log_2(1/P)\right) = \sum_n P_n \log_2(1/P_n)$$

We can put this into the standard form by noting that log(1/P) = -log(P).

And we have our general definition of entropy!

For discrete probabilities: $S = -\sum_n P_n \log P_n$

For continuous probabilities: $S = -\int P(x) \log P(x) \, dx$

(Aside: Physicists generally use a natural logarithm instead of $\log_2$ when they define entropy. This is just a difference in convention: e pops up more in physics and 2 in information theory. It’s a little weird, because now when entropy drops by 1 this means you’ve narrowed the options down to 1/e of what they were, instead of ½. But it makes equations much nicer.)
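Here is a minimal sketch of the discrete formula, applied to the 80/20 process the masked stranger described. The function name `entropy` and its `base` parameter are my own choices for illustration; terms with zero probability are skipped, following the usual convention that they contribute nothing.

```python
import math

def entropy(probs, base=2.0):
    """Shannon entropy S = -sum(p * log p) of a discrete distribution."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped: they contribute nothing to the sum.
    return -sum(p * math.log(p, base) for p in probs if p > 0)

fair_digit = [0.5, 0.5]      # no information about the digit
biased_digit = [0.8, 0.2]    # 0 comes up 80% of the time

print(entropy(fair_digit))                 # one full bit of uncertainty
print(entropy(biased_digit))               # roughly 0.72 bits
print(entropy(biased_digit, base=math.e))  # same uncertainty measured in nats
```

Knowing the bias leaves you less uncertain about each digit than the fair case does, and switching the base only rescales the number by a constant factor.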

I’m going to spend a little more time talking about this, because it’s that important.

We’ve already seen that entropy is a measure of how much you know. When you have perfect and complete knowledge, your model has entropy zero. And the more uncertainty you have, the more entropy you have.

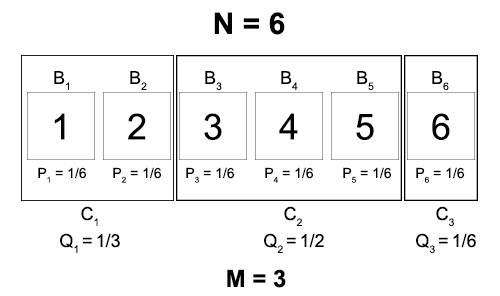

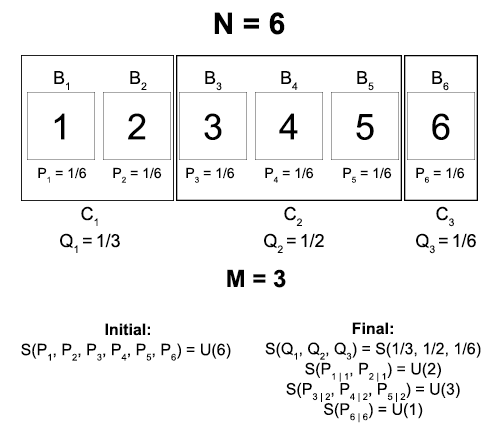

You can visualize entropy as a measure of the size of your probability distribution. Some examples you can calculate for yourself using the above equations:

Roughly, when you double the “size” of your probability distribution, you increase its entropy by 1.

But what does it mean to double the size of your probability distribution? It means that there are two times as many possibilities as you initially thought – which is equivalent to you losing one piece of binary information! This is exactly the connection between these two different ways of thinking about entropy.
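To see the doubling rule numerically, here is a small sketch that uses the number of equally likely outcomes as the “size” of a distribution. The helper `uniform_entropy` is a name I made up for this illustration.

```python
import math

def uniform_entropy(n_outcomes: int) -> float:
    """Entropy in bits of a uniform distribution over n_outcomes possibilities."""
    p = 1 / n_outcomes
    return -sum(p * math.log2(p) for _ in range(n_outcomes))

# Each doubling of the number of possibilities adds one bit of entropy.
for n in (3, 6, 12, 24):
    print(n, round(uniform_entropy(n), 6))
```

The sizes do not have to be powers of two; going from 3 to 6 possibilities costs you one bit just as going from 4 to 8 does.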

Third: (I won’t name it yet so as to not ruin the surprise). This is so important that I should have put it earlier, but I couldn’t have because I needed to introduce entropy first.

So I’ve been sneakily slipping in an assumption throughout the last paragraphs. This is that when you don’t have any knowledge about the probability of a set of events, you should act as if all events are equally likely.

This might seem like a benign assumption, but it’s responsible for god-knows how many hours of heated academic debate. Here’s the problem: sure it seems intuitive to say that 0 and 1 are equally likely. But that itself is just one of many possibilities. Maybe 0 comes up 57% of the time, or maybe 34%. It’s not like you have any knowledge that tells you that 0 and 1 are objectively equally likely, so why should you favor that hypothesis?

Statistical mechanics answers this by just postulating a general principle: Look at the set of all possible probability distributions, calculate the entropy of each of them, and then choose the one with the largest entropy.

In cases where you have literally no information (like our earlier inquisitor-string example), this principle becomes the principle of indifference: spread your credences evenly among the possibilities. (Prove it yourself! It’s a fun proof.)
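If you want some reassurance before attempting the proof, the following is a numerical sanity check, not a proof. It draws many random distributions over six outcomes (the number six, the seed, and the sampling scheme are all arbitrary choices of mine) and compares the best entropy found against the uniform distribution.

```python
import math
import random

def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability outcomes are skipped."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def random_distribution(n, rng):
    """Normalize n random positive weights into a probability distribution."""
    weights = [rng.random() for _ in range(n)]
    total = sum(weights)
    return [w / total for w in weights]

rng = random.Random(0)   # fixed seed so the run is repeatable
n = 6
uniform = [1 / n] * n

best_random = max(entropy_bits(random_distribution(n, rng)) for _ in range(10_000))

print("uniform:    ", entropy_bits(uniform))
print("best random:", best_random)
```

None of the sampled distributions beats the uniform one, whose entropy is log2(6) bits. Random search can only suggest the result; the proof has to show it for every distribution.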

But as a matter of fact, this principle doesn’t only apply to cases where you have no information. If you have partial or incomplete information, you apply the exact same principle by looking at the set of probability distributions that are consistent with this information and maximizing entropy.

This principle of maximum entropy is the foundational assumption of statistical mechanics. And it is a purely epistemic assumption. It is a normative statement about how you should rationally divide up your credences in the absence of information.

Said another way, statistical mechanics prescribes an answer to the problem of the priors, the biggest problem haunting Bayesian epistemologists. If you want to treat your beliefs like probabilities and update them with evidence, you have to have started out with an initial level of belief before you had any evidence. And what should that prior probability be?

Statistical mechanics says: It should be the probability that maximizes your entropy. And statistical mechanics is one of the best-verified and most successful areas of science. Somehow this is not loudly shouted in the pages of every text on Bayesianism.

There’s much more to say about this, but I’ll set it aside for the moment.

***

So we have our setup for statistical mechanics.

- Coarse-grain your model of reality by constructing a probability distribution over all possible microstates of the world.

- Construct this probability distribution according to the principle of maximum entropy.

Okay! So going back to our world of $N = 10^{80}$ particles jostling each other around, we now know how to construct our probability distribution $P(x_1, \ldots, x_N)$. (I’ve made the universe one-dimensional for no good reason except to pretty it up – everything I say would follow in exactly the same way if I had left it in 3D. I’ll also start writing the set of all N coordinates as X, again for prettiness.)

What probability distribution maximizes $S = -\int P \log P \, dX$?

We can solve this with the method of Lagrange multipliers:

$$\partial_P \left[ P \log P + \lambda P \right] = 0,$$

where λ is chosen to satisfy: $\int P \, dX = 1$

This is such a nice equation and you should do yourself a favor and learn it, because I’m not going to explain it (if I explained everything, this post would become a textbook!).

But it essentially maximizes the value of S, subject to the constraint that the total probability is 1. When we solve it we find:

$P(x_1, \ldots, x_N) = 1/V^N$, where V is the volume of the universe
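For anyone who wants the intermediate steps, here is a sketch of how the result follows from the Lagrange condition. It assumes each of the N coordinates ranges over a region of size V, so that integrating a constant over all of X gives a factor of $V^N$.

$$
\begin{aligned}
\partial_P \left[ P \log P + \lambda P \right] &= \log P + 1 + \lambda = 0 \\
\Rightarrow \quad P &= e^{-(1+\lambda)} \quad \text{(a constant, independent of } X \text{)} \\
\int P \, dX = P \cdot V^N = 1 \quad \Rightarrow \quad P &= \frac{1}{V^N}
\end{aligned}
$$

The first line says the maximizing P cannot depend on X at all, and the normalization constraint then fixes the one remaining constant.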

Remember earlier when I said to just wait for the probability equation to get simple?

Okay, so this is simple, but it’s also not very useful. It tells us that every particle has an equal probability of being in any equally sized region of space. But we want to know more. Like, are the higher energy particles distributed differently than the lower energy?

The great thing about statistical mechanics is that if you want a better model, you can just feed in more information to your distribution.

So let’s say we want to find the probability distribution, given two pieces of information: (1) we know the energy of every possible configuration of particles, and (2) the average total energy of the universe is fixed.

That is, we have a function $E(x_1, \ldots, x_N)$ that tells us energies, and we know that the total energy $E = \int P(x_1, \ldots, x_N) \, E(x_1, \ldots, x_N) \, dX$ is fixed.

So how do we find our new P? Using the same method as before:

$$\partial_P \left[ P \log P + \lambda P + \beta E P \right] = 0,$$

where λ is chosen to satisfy: $\int P \, dX = 1$

and β is chosen to satisfy: $\int P \cdot E \, dX = E$

This might look intimidating, but it’s really not. I’ll write out how to solve this:

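$$\partial_P \left[ P \log P + \lambda P + \beta E P \right]$$

$$= \log P + 1 + \lambda + \beta E = 0$$

So $P = e^{-(1+\lambda)} \cdot e^{-\beta E}$

Renaming the first factor, $e^{-(1+\lambda)}$, as $1/Z$, we get:

$$P(X) = \frac{1}{Z} \, e^{-\beta E(X)}$$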

This result is called the Boltzmann distribution, and it’s one of the incredibly important must-know equations of statistical mechanics. The amount of physics you can do with just this one equation is staggering. And we got it by just adding a constraint on the average energy to the principle of maximum entropy.

Maybe you’re disturbed by the strange new symbols Z and β that have appeared in the equation. Don’t fear! Z is simply a normalization constant: it’s there to keep the probability of the total distribution at 1. We can calculate it explicitly:

$$Z = \int e^{-\beta E} \, dX$$
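To make Z concrete, here is a toy sketch that swaps the integral over X for a sum over a handful of discrete states. The four energy levels and the value of β are made up for illustration, and `boltzmann` is just my name for the helper.

```python
import math

def boltzmann(energies, beta):
    """Return (Z, probabilities) for discrete states with the given energies."""
    weights = [math.exp(-beta * e) for e in energies]  # unnormalized e^{-beta E}
    z = sum(weights)                                   # discrete stand-in for the integral
    return z, [w / z for w in weights]

energies = [0.0, 1.0, 2.0, 3.0]   # hypothetical energy levels, arbitrary units
z, probs = boltzmann(energies, beta=1.0)

print("Z =", z)
print("P =", probs)
print("total probability =", sum(probs))
```

Dividing by Z is all it takes to make the probabilities sum to 1, and with β positive the lower-energy states come out more likely than the higher-energy ones.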

And β is really interesting. Notice that β came into our equations because we had to satisfy this extra constraint about a fixed total energy. Is there some nice physical significance to this quantity?

Yes, very much so. β is what we humans like to call ‘temperature’, or more precisely, inverse temperature.

β = 1/T

While avoiding the math, I can just say the following: Temperature is defined to be the change in the energy of a system when you change its entropy a little bit. (This definition is much more general than the special case definition of temperature as average kinetic energy.)

And it turns out that when you manipulate the above equations a little bit, you see that $\partial E / \partial S = 1/\beta = T$.
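For the curious, here is one way that manipulation can go. This is a sketch under two assumptions of mine: the energy function E(X) is held fixed while β varies, and $\langle E \rangle$ is shorthand for the average energy $\int P \, E \, dX$ (the fixed quantity called E above).

$$
\begin{aligned}
\log P &= -\beta E(X) - \log Z \\
S = -\int P \log P \, dX &= \beta \langle E \rangle + \log Z \\
\frac{d \log Z}{d\beta} &= -\frac{1}{Z} \int E \, e^{-\beta E} \, dX = -\langle E \rangle \\
dS = \beta \, d\langle E \rangle + \langle E \rangle \, d\beta + \frac{d \log Z}{d\beta} \, d\beta &= \beta \, d\langle E \rangle \\
\Rightarrow \quad \frac{\partial \langle E \rangle}{\partial S} &= \frac{1}{\beta} = T
\end{aligned}
$$

The two terms proportional to dβ cancel, which is what leaves the clean relationship between a small change in entropy and a small change in energy.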

So we could rewrite our probability distribution as follows:

$$P(X) = \frac{1}{Z} \, e^{-E(X)/T}$$

Feed in your fundamental laws of physics to the energy function, and you can see the distribution of particles across the universe!
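As a toy version of that recipe, the sketch below takes a made-up set of discrete energy levels and a target average energy, then finds the β that satisfies the energy constraint by bisection. The levels, the target, and the search bracket are all assumptions for illustration; bisection works here because the average energy only decreases as β increases.

```python
import math

def mean_energy(energies, beta):
    """Average energy under P_i = exp(-beta * E_i) / Z."""
    weights = [math.exp(-beta * e) for e in energies]
    z = sum(weights)
    return sum(e * w for e, w in zip(energies, weights)) / z

def solve_beta(energies, target, lo=-50.0, hi=50.0, tol=1e-12):
    """Bisect for the beta whose Boltzmann distribution has the target mean energy."""
    if not min(energies) < target < max(energies):
        raise ValueError("target must lie strictly between the lowest and highest energy")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if mean_energy(energies, mid) > target:
            lo = mid   # average too high: a larger beta (lower T) is needed
        else:
            hi = mid
    return (lo + hi) / 2

energies = [0.0, 1.0, 2.0, 3.0]   # hypothetical energy levels, arbitrary units
beta = solve_beta(energies, target=1.0)

print("beta =", beta)
print("T    =", 1 / beta)
print("mean energy =", mean_energy(energies, beta))
```

Asking for a lower average energy pushes β up and the temperature down, which matches the intuition that colder systems sit closer to their lowest-energy states.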

Let’s just look at the basic properties of this equation. First of all, we can see that the larger E(X)/T becomes, the smaller the probability of a particle being in X becomes. This corresponds both to particles scattering away from high-energy regions and to less densely populated systems having lower temperatures.

And the smaller E(X)/T, the larger P(X). This corresponds to particles densely clustering in low-energy areas, and dense clusters of particles having high temperatures.

There are too many other things I could say about this equation and others, and this post is already way too long. I want to close with a final note about the nature of entropy.

I said earlier that entropy is entirely a function of your model of reality. The universe doesn’t have an entropy. You have a model of the universe, and that model has an entropy. Regardless of what physical reality is like, if I hand you a model, you can tell me its entropy.

But at the same time, models of reality are linked to the nature of the physical world. So for instance, a very simple and predictable universe lends itself to very precise and accurate models of reality, and thus to lower-entropy models. And a very complicated and chaotic universe lends itself to constant loss of information and low-accuracy models, and thus to higher entropy.

It is this second world that we live in. Due to the structure of the universe, information is constantly being lost to us at enormous rates. Systems that start out simple eventually spiral off into chaotic and unpredictable patterns, and order in the universe is only temporary.

It is in this sense that statements about entropy are statements about physical reality. And it is for this reason that entropy always increases.

In principle, an omnipotent and omniscient agent could track the positions of all particles at all times, and this agent’s model of the universe would be always perfectly accurate, with entropy zero. For this agent, the entropy of the universe would never rise.

And yet for us, as we look at the universe, we seem to constantly and only see entropy-increasing interactions.

This might seem counterintuitive or maybe even impossible to you. How could the entropy rise to one agent and stay constant for another?

Imagine an ice cube sitting out on a warm day. The ice cube is in a highly ordered and understandable state. We could sit down and write out a probability distribution, taking into account the crystalline structure of the water molecules and the shape of the cube, and have a fairly low-entropy and accurate description of the system.

But now the ice cube starts to melt. What happens? Well, our simple model starts to break down. We start losing track of where particles are going, and having trouble predicting what the growing puddle of water will look like. And by the end of the transition, when all that’s left is a wide spread-out wetness across the table, our best attempts to describe the system will inevitably remain higher-entropy than what we started with.

Our omniscient agent looks at the ice cube and sees all the particles exactly where they are. There is no mystery to him about what will happen next – he knows exactly how all the water molecules are interacting with one another, and can easily determine which will break their bonds first. What looked like an entropy-increasing process to us was an entropy-neutral process to him, because his model never lost any accuracy.

We saw the puddle as higher-entropy, because we started doing poorly at modeling it. And our models started performing poorly, because the system got too complex for our models.

In this sense, entropy is not just a physical quantity, it is an epistemic quantity. It is both a property of the world and a property of our model of the world. The statement that the entropy of the universe increases is really the statement that the universe becomes harder for our models to compute over time.

Which is a really substantive statement. To know that we live in the type of universe that constantly increases in entropy is to know a lot about the way that the universe operates.

More reading here if you’re interested!