In front of you are two envelopes, each containing some unknown amount of money. You know that one of the envelopes contains twice as much money as the other, but you’re not sure which one that is, and you can only take one of the two. You choose one at random and start to head out, when a thought goes through your head:

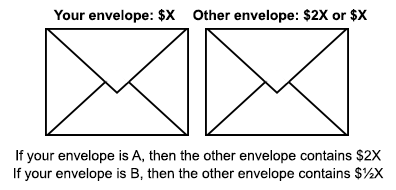

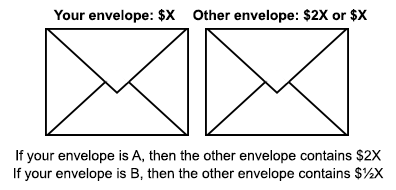

You: “Hmm, let me think about this. I just took an introductory decision theory class, and they said that you should always make decisions that maximize your expected utility. So let’s see… Either I have the envelope with less money or not. If I do, then I stand to double my money by switching. And if I don’t, then I only lose half my money. Since the possible gain outweighs the possible loss, and the two are equally likely, I should switch!”

Excited by your good sense in deciding to consult your decision theory knowledge, you run back and take the envelope on the table instead. But now, as you’re walking towards the door, another thought pops into your head:

You: “Wait a minute. I currently have some amount of money in my hands, and I still don’t know whether I got the envelope with more or less money. I haven’t gotten any new information in the past few moments, so the same argument should apply… if I call the amount in my envelope Y, then I gain $Y by switching if I have the lesser envelope, and only lose $½Y by switching if I have the greater envelope. So… I should switch again, I guess!”

Slightly puzzled by your own apparently absurd behavior, but reassured by the memories of your decision theory professor’s impressive-sounding slogans about instrumental rationality and maximizing expected utility, you walk back to the table and grab the envelope you had initially chosen, and head for the door.

But a new argument pops into your head…

You see where this is going.

What’s going on here? It appears that by a simple application of decision theory, you are stuck switching envelopes ad infinitum, foolishly thinking that as you do so, your expected value is skyrocketing. Has decision theory gone crazy?

***

This is the wonderful two-envelopes paradox. It’s one of my favorite paradoxes of decision theory, because it starts from what appear to be incredibly minimal assumptions and produces obviously outlandish behavior.

If you’re not convinced yet that this is what standard decision theory tells you to do, let me formalize the argument and write out the exact calculations that lead to the decision to switch.

Call the envelope with less money “Envelope A”

Call the envelope with more money “Envelope B”

Call the envelope you are holding “Envelope E”

X = the amount of money in your envelope

P(E is A) = P(E is B) = ½

If E is A & you switch, then you get $2X

If E is B & you switch, then you get $½X

If E is A & you stay, then you get $X

If E is B & you stay, then you get $X

EU(switch) = P(E is A) · 2X + P(E is B) · ½X = 1¼ X

EU(stay) = P(E is A) · X + P(E is B) · X = X

So, EU(switch) > EU(stay)!

If you think that the conclusion is insane, then either there’s an error somewhere in this argument, or we’ve proven that decision theory is insane.

It’s easy to put forward additional arguments for why the expected utility should be the same for switching and staying, but this still leaves the nagging question of why this particular argument doesn’t work. The ultimate reason is wonderfully subtle, and it took me several hours of agonizing to grasp.

I suggest you stop and analyze the argument a little bit before reading on – try to figure out for yourself what’s wrong.

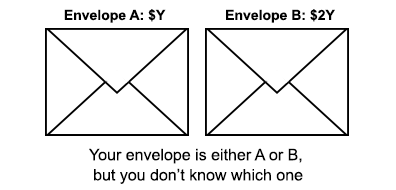

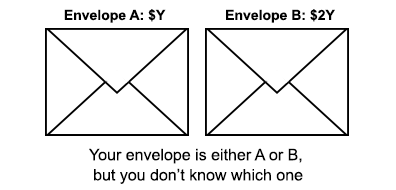

Let me present the correct line of reasoning for comparison:

Call the envelope with less money “Envelope A”

Call the envelope with more money “Envelope B”

Call the envelope you are holding “Envelope E”

Label the amount of money in Envelope A = Y.

Then the amount of money in Envelope B = 2Y.

P(E is A) = P(E is B) = ½

If E is A and you switch, then you get $2Y

If E is B and you switch, then you get $Y

If E is A and you stay, then you get $Y

If E is B and you stay, then you get $2Y

EU(switch) = P(E is A) · 2Y + P(E is B) · Y = 1½ Y

EU(stay) = P(E is A) · Y + P(E is B) · 2Y = 1½ Y

So EU(switch) = EU(stay)
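
As a sanity check on EU(switch) = EU(stay), here is a minimal simulation sketch. The way the envelopes get filled is purely my assumption for illustration (the smaller amount is drawn uniformly from $1 to $100); the function name `simulate` and its parameters are likewise made up for this example.

```python
import random

def simulate(trials=200_000, seed=0):
    """Average payoff of staying vs. switching over many random games."""
    rng = random.Random(seed)
    stay_total = 0
    switch_total = 0
    for _ in range(trials):
        # Assumption: the smaller amount Y is uniform on $1..$100.
        y = rng.randint(1, 100)
        envelopes = [y, 2 * y]
        rng.shuffle(envelopes)      # you pick one of the two at random
        mine, other = envelopes
        stay_total += mine          # payoff if you keep your envelope
        switch_total += other       # payoff if you swap
    return stay_total / trials, switch_total / trials

stay_avg, switch_avg = simulate()
print(f"stay: {stay_avg:.2f}  switch: {switch_avg:.2f}")
```

Under this assumed prior both averages should land near 1½ times the mean of Y (about $75.75), with no systematic edge for switching.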

This gives us the right answer, but the only apparent difference between this and what we did before is which quantity we give a name – X was the money in your envelope in the first argument, and Y is the money in the lesser envelope in this one. How could the answer depend on this apparently irrelevant difference?

***

Without further ado, let me diagnose the argument at the start of this post.

The fundamental mistake in this argument is that it treats the probability that you have the lesser envelope as if it were independent of the amount of money in your hands. That would only be legitimate if the amount told you nothing about which envelope you hold. But the amount of money in your hand is highly relevant information.

This may sound weird. After all, you chose the envelope at random, and shouldn’t the principle of maximum entropy prescribe that two equivalent envelopes are equally likely to be chosen? How could the unknown amount of money you’re holding have any sway over which one you were more likely to choose?

The answer is that the envelopes aren’t equivalent for any given amount of money in your hand. In general, given that you end up holding an envelope with $X, the chance that this is the lesser quantity is affected by the value of X.

Suppose, for example, that you know that the envelopes contain $1 and $2. Now in your mental model of the envelope in your hand, you see an equal chance of it containing $1 and $2. But now whether your envelope is the lesser or greater one is clearly not independent of the amount of money in your envelope. If you’re holding $1, then you know that you have the lesser envelope. And if you’re holding $2, then you know that you have the greater envelope.

In general, your prior probability over the possible amounts of money in your envelope will be relevant to the chance that you are holding the lesser or greater envelopes.

If you think that the envelopes are much more likely to contain small numbers, then given that the amount of money in your hand is large, you are much more likely to be holding the envelope with more money. Or if you think that the person stuffing the envelopes had only a certain fixed upper amount of cash that he was willing to put into the envelopes, then for some possible amounts of money in your envelope, you will know with certainty that it is the larger envelope.
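
To make this concrete, here is a small sketch that computes P(E is A | E has $x) exactly for a discrete prior. The prior itself is hypothetical (the smaller amount is $1, $2, $4 or $8, with small sums favored), and the helper name `prob_lesser_given_amount` is mine.

```python
from fractions import Fraction

def prob_lesser_given_amount(prior):
    """Map each amount x you might hold to P(E is A | E has $x).

    `prior` maps each possible smaller amount to its probability.
    """
    half = Fraction(1, 2)
    joint_lesser = {}   # P(E has $x and E is A)
    joint_greater = {}  # P(E has $x and E is B)
    for a, p in prior.items():
        joint_lesser[a] = joint_lesser.get(a, 0) + half * p
        joint_greater[2 * a] = joint_greater.get(2 * a, 0) + half * p
    posterior = {}
    for x in sorted(set(joint_lesser) | set(joint_greater)):
        lesser = joint_lesser.get(x, 0)
        greater = joint_greater.get(x, 0)
        posterior[x] = lesser / (lesser + greater)
    return posterior

# Hypothetical prior over the smaller amount, favoring small sums.
prior = {
    1: Fraction(8, 15),
    2: Fraction(4, 15),
    4: Fraction(2, 15),
    8: Fraction(1, 15),
}
posterior = prob_lesser_given_amount(prior)
for x, p in posterior.items():
    print(f"holding ${x}: P(lesser) = {p}")
```

With this prior, holding $1 means you certainly have the lesser envelope, holding $16 means you certainly have the greater one, and for the amounts in between the chance of holding the lesser envelope is ⅓ rather than ½.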

Regardless, we’ll see that for any distribution of probabilities over the money in the envelopes, a proper calculation of the expected utility gained by switching will inevitably come out to zero.

Here’s the sketch of the proof:

Stipulations:

Call the envelope with less money “Envelope A”

Call the envelope with more money “Envelope B”

Call the envelope you are holding “Envelope E”

P(E is A) = P(E is B) = ½

If A has $x, then B has $2x

P(A has $x) = f(x) for some normalized function f(x)

The function f(x) represents your prior probability distribution over the possible amounts of money in A. We can infer your probability distribution over the possible amounts of money in B from the fact that B has double the money of A.

P(B has $x) = ½ · P(A has $½ x) = ½ · f(½ x)

The ½ comes from the fact that we’ve stretched out our distribution by a factor of 2 and must renormalize it to keep our total probability equal to 1.

Now we’ll calculate the expected utility gained by switching (∆EU), given that our envelope has some amount of money $x in it, and average over all possible values of x.

∆EU = < ∆EU given that E has $x >

= ∫ P(E has $x) · (∆EU given that E has $x) dx

Since this calculation has several components, I’ll break it down in stages.

First we’ll split up the calculation into the expected utility of switching and the expected utility of staying.

∆EU = ∫ P(E has $x) · {EU(switch | E has $x) – EU(stay | E has $x)} dx

Our final subdivision of possible worlds will be regarding whether you’re holding the envelope with less or more money.

∆EU = ∫ P(E has $x) · { P(E is A | E has $x) · U(switch to B) + P(E is B | E has $x) · U(switch to A) – P(E is A | E has $x) · U(stay with A) – P(E is B | E has $x) · U(stay with B) } dx

We can rearrange the terms and group them by whether they refer to the world in which you’re holding the lesser envelope or the world in which you’re holding the greater envelope.

∆EU = ∫ { P(E has $x and E is A) · (U(switch to B) – U(stay with A)) + P(E has $x and E is B) · (U(switch to A) – U(stay with B)) } dx

= ∫ { P(A has $x) · P(E is A) · (2x – x) + P(B has $x) · P(E is B) · (½ x – x) } dx

= ∫ { f(x) · ½ x – ½ f(½ x) · ¼ x } dx

= ½ ∫ x f(x) dx – ½ ∫ (½ x) · f(½ x) · d(½ x)

= ½ ∫ x f(x) dx – ½ ∫ x’ f(x’) dx’

= 0

And we’re done!
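
Here is a rough numerical check of the same cancellation, with exact fractions and a discrete prior. Everything named here (`held_amounts`, `p_b_has`, `naive_gain`, `correct_gain`, and the prior `f`) is an assumption of mine for illustration. Note that in the discrete case P(B has $x) is simply f(½ x); the extra factor of ½ only appears for continuous densities.

```python
from fractions import Fraction

HALF = Fraction(1, 2)

def held_amounts(f):
    """Every amount your envelope could contain: some a, or its double."""
    return sorted(set(f) | {2 * a for a in f})

def p_b_has(f, x):
    """Discrete analogue of P(B has $x): B holds x exactly when A holds x/2."""
    return f.get(x // 2, Fraction(0)) if x % 2 == 0 else Fraction(0)

def naive_gain(f):
    """Opening argument: assume P(E is A | E has $x) = 1/2 for every x."""
    total = Fraction(0)
    for x in held_amounts(f):
        p_e_has_x = HALF * f.get(x, Fraction(0)) + HALF * p_b_has(f, x)
        total += p_e_has_x * (HALF * (2 * x - x) + HALF * (Fraction(x, 2) - x))
    return total

def correct_gain(f):
    """Weight each world by the joint probability P(E has $x and E is A/B)."""
    total = Fraction(0)
    for x in held_amounts(f):
        # World where you hold the lesser envelope: switching gains x.
        total += f.get(x, Fraction(0)) * HALF * (2 * x - x)
        # World where you hold the greater envelope: switching loses x/2.
        total += p_b_has(f, x) * HALF * (Fraction(x, 2) - x)
    return total

# Hypothetical prior over the smaller amount, in whole dollars.
f = {1: Fraction(1, 2), 2: Fraction(1, 4), 3: Fraction(1, 4)}
print("naive:  ", naive_gain(f))
print("correct:", correct_gain(f))
```

The naive version reports a positive gain from switching (a quarter of the expected amount in your hand), while the joint-probability version comes out to exactly zero.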

So if this is the right way to do the calculation we attempted at the beginning, then where did we go wrong the first time? The key is that we considered the unconditional probabilities P(E is A) and P(E is B) instead of the conditional probabilities P(E is A | E has $x) and P(E is B | E has $x).

This made the calculations more complicated, but was necessary. Why? Well, the assumption of independence of the value of your envelope and whether it is the lower or higher valued envelope is logically incoherent.

Proof in words: Suppose that your envelope’s value was independent of whether it is the lower or higher envelope. This means that for any value $X in your envelope, it is equally likely that the other envelope contains $2X and that it contains $½X. We can write this condition as follows: P(other envelope has $2X | E has $X) = P(other envelope has $½X | E has $X) for all X. But there are no normalized distributions that satisfy this property! For any amount of probability mass in a given region [X, X+∆], there must also be at least as much probability mass in the region [4X, 4X+4∆]. Thus if any region has any nonzero probability mass, then that mass must be repeated an infinite number of times, meaning the distribution can’t be normalized! Proof by contradiction.
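
A quick sketch of why the mass keeps repeating. Using P(B has $x) = ½ · f(½ x) from the stipulations, being equally likely to hold either envelope at every x requires f(x) = ½ · f(½ x). One function with that property is f(x) = 1/x, which is my choice of example here, not something the argument depends on. The code below integrates it over successive doubling intervals.

```python
import math

def mass_of_one_over_x(lo, hi):
    """Integral of 1/x from lo to hi, in closed form."""
    return math.log(hi) - math.log(lo)

masses = []
running_total = 0.0
for k in range(-5, 6):
    lo, hi = 2.0 ** k, 2.0 ** (k + 1)
    mass = mass_of_one_over_x(lo, hi)   # same value for every interval [a, 2a]
    masses.append(mass)
    running_total += mass
    print(f"[{lo:g}, {hi:g}): mass = {mass:.4f}, running total = {running_total:.4f}")
```

Every interval [a, 2a] carries the same mass, ln 2, so the running total grows without bound as you add intervals in either direction. No constant can rescale such an f to integrate to 1.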

Even if we imagined some cap on the total value of a given envelope (say $1 million), we still don’t get away. Because now the value of your envelope is no longer independent of whether it is the lower or higher envelope! If the value of the envelope in your hands is $999,999, then you know for sure that you must have the larger of the two envelopes.

If the amount of money in your hands and the chance that you have the lesser envelope are independent, then you are imagining an unnormalizable prior. And if they are dependent, then the argument we started with must be replaced by the conditional-probability calculation above.

It’s not that at any point you get to look inside your envelope and see how much money is inside. It’s simply that you cannot talk about the probability of your envelope being the lesser of the two as if it is independent of the amount of money you’re holding. And our starting argument did exactly that – it assumed that you were equally likely to have the smaller and larger envelope, regardless of how much money you held.

So the problem with our starting argument is wonderfully subtle. By the very framing of the statement “It’s equally likely that the other envelope contains $2X and $½X if my envelope contains $X,” we are committing ourselves to an impossibility: a prior probability with infinite total probability!