TL;DR

- Hume sort of wrecked metaphysics. This inspired Kant to try to save it.

- Hume thought that terms were only meaningful insofar as they were derived from experience.

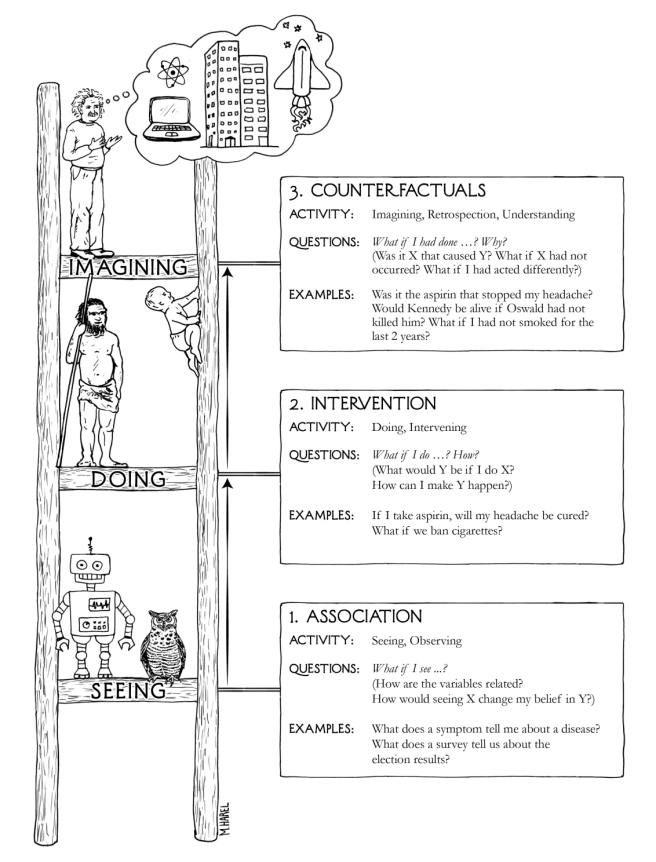

- We never actually experience necessary connections between events; we just see correlations. So Hume thought that the idea of causality as necessary connection is empty and confused, and that all our idea of causality really amounts to is correlation.

- Kant didn’t like this. He wanted to PROTECT causality. But how??

- Kant said that metaphysical knowledge was both a priori and substantive, and justified this by describing these things called pure intuitions and pure concepts.

- Intuitions are representations of things (like sense perceptions). Pure intuitions are the necessary preconditions for us to represent things at all.

- Concepts are classifications of representations (like “red”). Pure concepts are the necessary preconditions underlying all classifications of representations.

- There are two pure intuitions (space and time) and twelve pure concepts (one of which is causality).

- We get substantive a priori knowledge by referring to pure intuitions (mathematics) or pure concepts (laws of nature, metaphysics).

- Yay! We saved metaphysics!

(Okay, now on to the actual essay. This was not originally written for this blog, which is why it’s significantly more formal than my usual fare.)

***

David Hume’s Enquiry Concerning Human Understanding stands out as a profound and original challenge to the validity of metaphysical knowledge. Part of the historical legacy of this work is its effect on Kant, who describes Hume as being responsible for “[interrupting] my dogmatic slumber and [giving] my investigations in the field of speculative philosophy a completely different direction.” Despite the great inspiration that Kant took from Hume’s writing, their thinking on many matters is diametrically opposed. A prime example of this is their views on causality.

Hume’s take on causation is famously unintuitive. He gives a deflationary account of the concept, arguing that the traditional conception lacks a solid epistemic foundation and must be replaced by mere correlation. To understand this conclusion, we need to back up and consider the goal and methodology of the Enquiry.

He starts with an appeal to the importance of careful and accurate reasoning in all areas of human life, and especially in philosophy. In a beautiful bit of prose, he warns against the danger of being overwhelmed by popular superstition and religious prejudice when casting one’s mind towards the especially difficult and abstruse questions of metaphysics.

> But this obscurity in the profound and abstract philosophy is objected to, not only as painful and fatiguing, but as the inevitable source of uncertainty and error. Here indeed lies the most just and most plausible objection against a considerable part of metaphysics, that they are not properly a science, but arise either from the fruitless efforts of human vanity, which would penetrate into subjects utterly inaccessible to the understanding, or from the craft of popular superstitions, which, being unable to defend themselves on fair ground, raise these entangling brambles to cover and protect their weakness. Chased from the open country, these robbers fly into the forest, and lie in wait to break in upon every unguarded avenue of the mind, and overwhelm it with religious fears and prejudices. The stoutest antagonist, if he remit his watch a moment, is oppressed. And many, through cowardice and folly, open the gates to the enemies, and willingly receive them with reverence and submission, as their legal sovereigns.

In less poetic terms, Hume’s worry about metaphysics is that its difficulty and abstruseness make its practitioners vulnerable to flawed reasoning. Even worse, the difficulty serves to make the subject all the more tempting for “each adventurous genius[, who] will still leap at the arduous prize and find himself stimulated, rather than discouraged by the failures of his predecessors, while he hopes that the glory of achieving so hard an adventure is reserved for him alone.”

Thus, says Hume, the only solution is “to free learning at once from these abstruse questions [by inquiring] seriously into the nature of human understanding and [showing], from an exact analysis of its powers and capacity, that it is by no means fitted for such remote and abstruse questions.”

Here we get the first major divergence between Kant and Hume. Kant doesn’t share Hume’s eagerness to banish metaphysics. His Prolegomena To Any Future Metaphysics and Critique of Pure Reason are attempts to find it a safe haven from Hume’s attacks. However, while Kant does not share Hume’s hostility to the subject, he does take Hume’s methodology very seriously. He states in the preface to the Prolegomena that “since the origin of metaphysics as far as history reaches, nothing has ever happened which could have been more decisive to its fate than the attack made upon it by David Hume.” Kant agrees wholeheartedly with many of the principles that Hume derives, which makes the task of shielding metaphysics even harder for him.

With that understanding of Hume’s methodology in mind, let’s look at how he argues for his view of causality. We’ll start with a distinction that is central to Hume’s philosophy: that between ideas and impressions. The difference between the memory of a sensation and the sensation itself is a good example. While the memory may mimic or copy the sensation, it can never reach its full force and vivacity. In general, Hume suggests that our experiences fall into two distinct categories, separated by a qualitative gap in liveliness. The more lively category he calls impressions, which includes sensory perceptions like the smell of a rose or the taste of wine, as well as internal experiences like the feeling of love or anger. The less lively category he refers to as thoughts or ideas. These include memories of impressions as well as imagined scenes, concepts, and abstract thoughts.

With this distinction in hand, Hume proposes his first limit on the human mind. He claims that no matter how creative or original you are, all of your thoughts are the product of “compounding, transposing, augmenting, or diminishing the materials afforded us by the senses and experience.” This is the copy principle: all ideas are copies of impressions, or compositions of simpler ideas that are in turn copies of impressions.

Hume turns this observation about the nature of the mind into a powerful criterion of meaning: “When we entertain any suspicion that a philosophical term is employed without any meaning or idea (as is but too frequent), we need but enquire, From what impression is that supposed idea derived? And if it be impossible to assign any, this will serve to confirm our suspicion.”

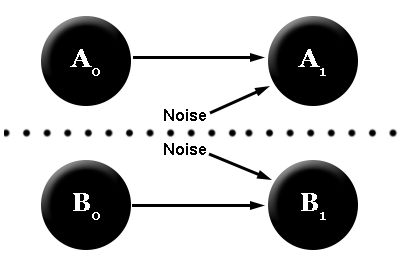

This criterion turns out to be just the tool Hume needs in order to establish his conclusion. He examines the traditional conception of causation as a necessary connection between events, searches for the impressions that might correspond to this idea, and, failing to find anything satisfactory, declares that “we have no idea of connection or power at all and that these words are absolutely without any meaning when employed in either philosophical reasonings or common life.” His primary argument here is that all of our observations are of mere correlation, and that we can never actually observe a necessary connection.

Interestingly, at this point he refrains from recommending that we throw out the term causation. Instead he proposes a redefinition of the term, suggesting a more subtle interpretation of his criterion of meaning. Rather than eliminating the concept altogether upon discovering it to have no satisfactory basis in experience, he reconceives it in terms of the impressions from which it is actually formed. In particular, he argues that our idea of causation is really based on “the connection which we feel in the mind, this customary transition of the imagination from one object to its usual attendant.”

Here Hume is saying that humans have a rationally unjustifiable habit of thought where, when we repeatedly observe one type of event followed by another, we begin to call the first a cause and the second its effect, and we expect that future instances of the cause will be followed by future instances of the effect. Causation, then, is just this constant conjunction between events, and our mind’s habit of projecting the conjunction into the future. We can summarize all of this in a few lines:

Hume’s denial of the traditional concept of causation

- Ideas are always either copies of impressions or composites of simpler ideas that are copies of impressions.

- The traditional conception of causation is neither of these.

- So we have no idea of the traditional conception of causation.

Hume’s reconceptualization of causation

- An idea is the idea of the impression that it is a copy of.

- The idea of causation is copied from the impression of constant conjunction.

- So the idea of causation is just the idea of constant conjunction.
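For readers who like to see the logical skeleton, the first of these arguments can be read as a modus tollens. The rendering below is only a sketch: the predicate letters $I$, $C$, $K$ and the constant $t$ are labels introduced here for illustration, not notation Hume uses. Read $I(x)$ as "we have the idea $x$", $C(x)$ as "$x$ is a copy of an impression", $K(x)$ as "$x$ is a composite of simpler ideas that are copies of impressions", and $t$ as the traditional conception of causation as necessary connection.

$$
\begin{aligned}
&\text{(P1)} && \forall x\,\bigl(I(x) \rightarrow C(x) \lor K(x)\bigr) \\
&\text{(P2)} && \neg C(t) \land \neg K(t) \\
&\text{(C)} && \therefore\ \neg I(t)
\end{aligned}
$$

On this reading the argument is valid, so anyone who wants to keep the traditional conception has to reject one of the premises.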

There we have Hume’s line of reasoning, which provoked Kant to examine the foundations of metaphysics anew. Kant wanted to resist Hume’s dismissal of the traditional conception of causation, while accepting that our sense perceptions reveal no necessary connections to us. Thus his strategy was to deny the copy principle and give an account of how we can have substantive knowledge that is not ultimately traceable to impressions. He does this by introducing the analytic/synthetic distinction and the notion of synthetic a priori knowledge.

Kant’s original definition of analytic judgments is that they “express nothing in the predicate but what has already been actually thought in the concept of the subject.” This suggests that the truth value of an analytic judgment is determined purely by the meanings of the concepts in use. A standard example of this is “All bachelors are unmarried.” The truth of this statement follows immediately just by understanding what it means, as the concept of bachelor already contains the predicate unmarried. Synthetic judgments, on the other hand, are not fixed in truth value merely by the meanings of the concepts in use. These judgments amplify our knowledge and bring us to genuinely new conclusions about our concepts. An example: “The President is ornery.” This certainly doesn’t follow by definition; you’d have to go out and watch the news to realize its truth.

We can now put the challenge to metaphysics slightly differently. Metaphysics purports to be discovering truths that are both necessary (and therefore a priori) and substantive (adding to our concepts and thus synthetic). But this category of synthetic a priori judgments seems a bit mysterious. Evidently, the truth values of such judgments can be determined without referring to experience, but can’t be determined merely by the meanings of the relevant concepts. So apparently something further is required besides the meanings of concepts in order to make a synthetic a priori judgment. What is this thing?

Kant’s answer is that the further requirement is pure intuition and pure concepts. These terms need explanation.

Pure Intuitions

For Kant, an intuition is a direct, immediate representation of an object. An obvious example of this is sense perception; looking at a cup gives you a direct and immediate representation of an object, namely, the cup. But pure intuitions must be independent of experience, or else judgments based on them would not be a priori. In other words, the only type of intuition that could possibly be a priori is one that is present in all possible perceptions, so that its existence is not contingent upon what perceptions are being had. Kant claims that this is only possible if pure intuitions represent the necessary preconditions for the possibility of perception.

What are these necessary preconditions? Kant famously claimed that the only two are space and time. This implies that all of our perceptions have spatiotemporal features, and indeed that perception is only possible in virtue of the existence of space and time. It also implies, according to Kant, that space and time don’t exist outside of our minds! Consider that pure intuitions exist equally in all possible perceptions and thus are independent of the actual properties of external objects. This independence suggests that rather than being objective features of the external world, space and time are structural features of our minds that frame all our experiences.

This is why Kant’s philosophy is a species of idealism. Space and time get turned into features of the mind, and correspondingly appearances in space and time become internal as well. Kant forcefully argues that this view does not make space and time into illusions, saying that without his doctrine “it would be absolutely impossible to determine whether the intuitions of space and time, which we borrow from no experience, but which still lie in our representation a priori, are not mere phantasms of our brain.”

The pure intuitions of space and time play an important role in Kant’s philosophy of mathematics: they serve to justify the synthetic a priori status of geometry and arithmetic. When we judge that the sum of the interior angles of a triangle is 180°, for example, we do so not purely by examining the concepts triangle, sum, and angle. We also need to consult the pure intuition of space! And similarly, our affirmations of arithmetic truths rely upon the pure intuition of time for their validity.

Pure Concepts

Pure intuition is only one part of the story. We don’t just perceive the world; we also think about our perceptions. In Kant’s words, “Thoughts without content are empty; intuitions without concepts are blind. […] The understanding cannot intuit anything, and the senses cannot think anything. Only from their union can cognition arise.” As pure intuitions are to perceptions, pure concepts are to thought. Pure concepts are necessary for our empirical judgments, and without them we could not make sense of perception. It is into this category that causality falls.

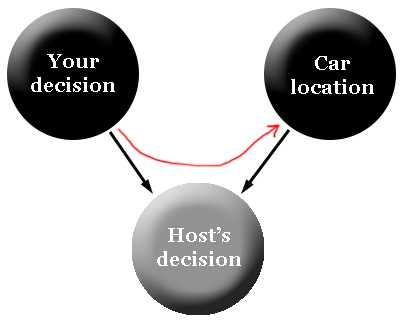

Kant’s argument for this is that causality is a necessary condition for the judgment that events occur in a temporal order. He starts by observing that we don’t directly perceive time. For instance, we never have a perception of one event being before another; we just perceive one and, separately, the other. So to conclude that the first preceded the second requires something beyond perception, that is, a concept connecting them.

He argues that this connection must be necessary: “For this objective relation to be cognized as determinate, the relation between the two states must be thought as being such that it determines as necessary which of the states must be placed before and which after.” And as we’ve seen, the only way to get a necessary connection between perceptions is through a pure concept. The required pure concept is the relation of cause and effect: “the cause is what determines the effect in time, and determines it as the consequence.” So starting from the observation that we judge events to occur in a temporal order, Kant concludes that we must have a pure concept of cause and effect.

What about particular causal rules, such as the rule that striking a match produces a flame? Such rules are not derived solely from experience, but also from the pure concept of causality, on which their existence depends. It is the presence of the pure concept that allows the inference of these particular rules from experience, even though they postulate a necessary connection.

Now we can see how different Kant’s and Hume’s conceptions of causality are. While Hume takes the traditional concept of causality as a necessary connection to be beyond rescue and extraneous to our perceptions, Kant sees it as a bedrock principle of experience that is necessary for us to be able to make sense of our perceptions at all. Kant rejects Hume’s definition of cause in terms of constant conjunction on the grounds that it “cannot be reconciled with the scientific a priori cognitions that we actually have.”

Despite this great gulf between the two philosophers’ conceptions of causality, there are some similarities. As we saw above, Kant agrees wholeheartedly with Hume that perception alone is insufficient for concluding that there is a necessary connection between events. He also agrees that a purely analytic approach is insufficient. Since Kant sees pure intuitions and pure concepts as features of the mind, not the external world, both philosophers deny that causation is an objective relationship between things in themselves (as opposed to perceptions of things). Of course, Kant would deny that this makes causality an illusion, just as he denied that space and time are made illusory by his philosophy.

It’s impossible to know to what extent the two philosophers would have actually agreed, had Hume been able to read Kant’s responses to his works. Would he have been convinced that synthetic a priori judgments really exist? If so, would he accept Kant’s pure intuitions and pure concepts? I suspect that at the crux of their disagreement would be Kant’s claim that math is synthetic a priori. While Hume never explicitly proclaims math’s analyticity (he didn’t have the term, after all), it seems more in line with his views on algebra and arithmetic as purely concerning the way that ideas relate to one another. It is also more in line with the axiomatic approach to mathematics familiar to Hume, in which one defines a set of axioms from which all truths about the mathematical concepts involved necessarily follow.

If Hume did maintain math’s analyticity, then Kant’s arguments about the importance of synthetic a priori knowledge would probably hold much less sway for him, and would largely amount to an appeal to the validity of metaphysical knowledge, which Hume already doubted. Hume would also likely want to resist Kant’s idealism; in Section XII of the Enquiry he mocks philosophers who doubt the connection between the objects of our senses and external objects, saying that if you “deprive matter of all its intelligible qualities, both primary and secondary, you in a manner annihilate it and leave only a certain unknown, inexplicable something as the cause of our perceptions – a notion so imperfect that no skeptic will think it worthwhile to contend against it.”