Epistemic status: This is a line of thought that I’m not fully on board with, but have been taking more seriously recently. I wouldn’t be surprised if I object to all of this down the line.

The question of whether or not a given thing exists is not an empty question or a question of mere semantics. It is a question which you can get empirical evidence for, and a question whose answer affects what you expect to observe in the world.

Before explaining this further, I want to draw an analogy between ontology and causation (and my attitudes towards them).

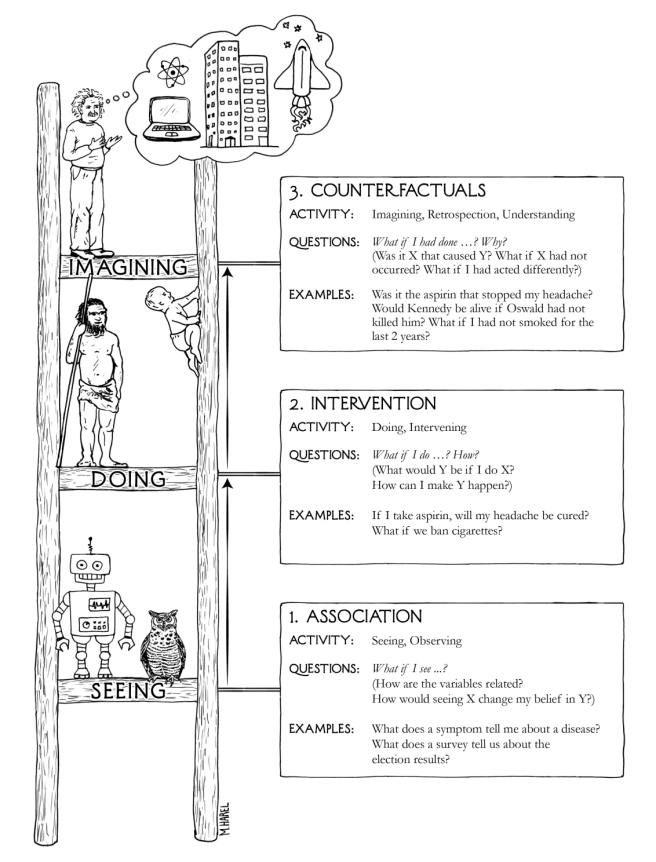

Early in my philosophical education, I was sympathetic to Humean-style eliminativism, on which causality is a useful construct that isn’t reflected in the fundamental structure of the world. That is, I quickly ‘tossed out’ the notion of causality, comfortable just talking about the empirical regularities governed by our laws of physics.

Later, upon encountering some statisticians who exposed me to the way that causality is actually calculated in the real world, I began to feel that I had been overly hasty. In fact, it turns out that there is a perfectly rigorous and epistemically accessible formalization of causality, and I now feel that there is no need to toss it out after all.

Here’s an easy way of thinking about this: While the slogan “Correlation does not imply causality” is certainly true, the converse-style slogan (“Causality does not imply correlation”) is trickier. In fact, whenever you have a causal relationship between variables, you do end up expecting some observable correlations. So while you cannot deductively conclude a causal relationship from a merely correlational one, you can certainly get evidence for some causal models.
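To make this a bit more concrete, here is a toy simulation. It is only a sketch under assumptions I am making up for illustration: the linear-Gaussian models, the coefficient 2.0, and the names `pearson` and `simulate` are all hypothetical, and this is not how causal discovery is done in practice. It compares a world where X causes Y with a world where a hidden Z drives both, first by passive observation and then by setting X independently of everything else.

```python
import random


def pearson(xs, ys):
    """Sample Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate(n=20000, seed=0):
    """Compare a causal model (X -> Y) with a confounded one (X <- Z -> Y).

    All structural equations here are illustrative assumptions.
    """
    rng = random.Random(seed)

    # Causal world, observed passively: Y depends directly on X.
    x_causal = [rng.gauss(0, 1) for _ in range(n)]
    y_causal = [2.0 * x + rng.gauss(0, 1) for x in x_causal]

    # Confounded world, observed passively: Z drives both, X never touches Y.
    z = [rng.gauss(0, 1) for _ in range(n)]
    x_conf = [zi + rng.gauss(0, 1) for zi in z]
    y_conf = [zi + rng.gauss(0, 1) for zi in z]

    # Intervention: we set X ourselves, independently of everything else.
    x_set = [rng.gauss(0, 1) for _ in range(n)]
    y_causal_do = [2.0 * x + rng.gauss(0, 1) for x in x_set]  # Y still tracks X
    y_conf_do = [zi + rng.gauss(0, 1) for zi in z]            # Y ignores X

    return {
        "causal_observed": pearson(x_causal, y_causal),
        "confounded_observed": pearson(x_conf, y_conf),
        "causal_intervened": pearson(x_set, y_causal_do),
        "confounded_intervened": pearson(x_set, y_conf_do),
    }


if __name__ == "__main__":
    for name, r in simulate().items():
        print(f"{name}: {r:.3f}")
```

Under these assumptions, both worlds show a correlation when merely observed (in theory about 0.89 for the causal model and 0.5 for the confounded one), so correlation alone does not settle which model is right. But only the causal world keeps its correlation once X is set by hand; in the confounded world it drops to roughly zero. The two causal models make different predictions, which is what lets evidence bear on them.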

This is just a peek into the world of statistical discovery of causal relationships – going further requires a lot more work. But that’s not necessary for my aim here. I just want to express the following parallel:

Rather than trying to set up a perfect set of necessary and sufficient conditions for applying the term ‘cause’, we can just take a basic axiom that any account of causation must adhere to. Namely: Where there’s causation, there’s correlation.

And rather than trying to set up a perfect set of necessary and sufficient conditions for the term ‘existence’, we can just take a basic axiom that any account of existence must adhere to. Namely: If something affects the world, it exists.

This should seem trivially obvious. While there could conceivably be entities that exist without affecting anything, clearly any entity that has a causal impact on the world must exist.

The contrapositive of this axiom is that if something doesn’t exist, it does not affect the world.

Again, this is not a controversial statement. And importantly, it makes ontology amenable to scientific inquiry! Why? Because two worlds with different ontologies will have different repertoires of causes and effects. A world in which nothing exists is a world in which nothing affects anything – a dead, static region of nothingness. We can rule out this world on the basis of our basic empirical observation that stuff is happening.

This short argument attempts to show that ontology is a scientifically respectable concept, and not merely a matter of linguistic game-playing. Scientific theories implicitly assume particular ontologies by relying upon laws of nature which reference objects with causal powers. Fundamentally, evidence that reveals the impotence of these supposed causal powers serves as evidence against the ontological framework of such theories.

I think the temptation to wave off ontological questions as somehow disreputable and unscientific actually springs from the fundamentality of this concept. Ontology isn’t a minor add-on to our scientific theories done to appease the philosophers. Instead, it is built in from the ground floor. We can’t do science without implicitly making ontological assumptions. I think it’s better to make these assumptions explicit and debate the fundamental principles by which we justify them than it is to make them invisibly, without further analysis.