Credit to Joel David Hamkins, who I heard discussing this paradox on an episode of the podcast My Favorite Theorem.

Define a finite game to be any two-player turn-based game such that every possible playthrough ends after finitely many turns. For example, tic-tac-toe is a finite game because every game ends in at most nine turns. So is chess with the 50-move rule enforced (if 50 moves are taken without any pawn advances or captures, then the game ends in a draw). In both these examples, there’s an upper bound on how long the game can last, but this is not required. A game of transfinite Nim would count as finite; every game lasts for only finitely many turns, even though there is no upper bound on the number of turns it takes.

Now, consider the game Hypergame. To play Hypergame, Player 1 begins by choosing any finite game G. Then Player 2 plays the first move of G, Player 1 plays the second move of G, and so on until the game is completed. (Since Player 1 chose a finite game, this will always happen after some finite amount of time.)

Is Hypergame a finite game? Yes, we can easily see that it must be. Whatever game Player 1 chooses will be over after n steps, for some finite n. So that playthrough of Hypergame will have taken n+1 steps.

But if Hypergame is a finite game, then it is a valid choice for the first move of Hypergame! So we can now imagine the following playthrough of Hypergame:

A Troubling Playthrough of Hypergame

Player 1: For the finite game that we shall play, I pick Hypergame.

Player 2: Hm, okay. So now I’m playing the first move of Hypergame. So I must now choose any finite game. I’ll choose Hypergame!

Player 1: Alright, so I’m again playing the first move of this new game of Hypergame. I’ll choose Hypergame again.

Player 2: And I choose Hypergame again as well.

So on forever…

At no point does either player violate the rules of Hypergame. And yet, we ended up with an infinite playthrough of Hypergame, which we proved was impossible! So we have a contradiction. What is the resolution?

✵✵✵

Here’s one possible resolution, analogous to the resolutions of similar set-theoretic paradoxes.

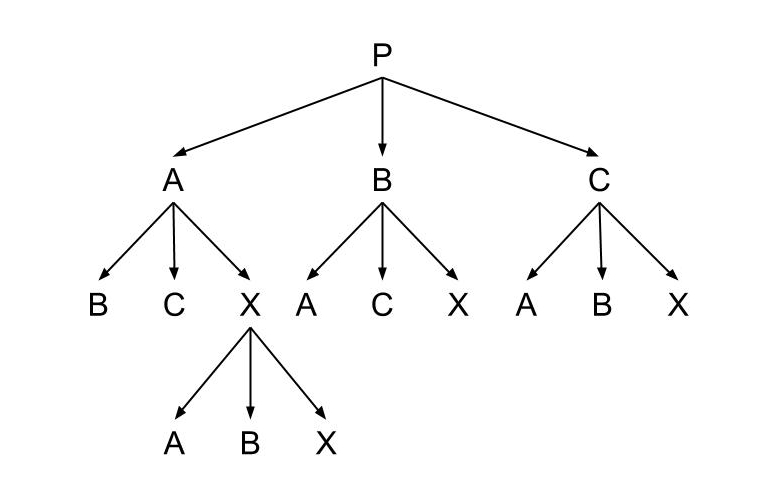

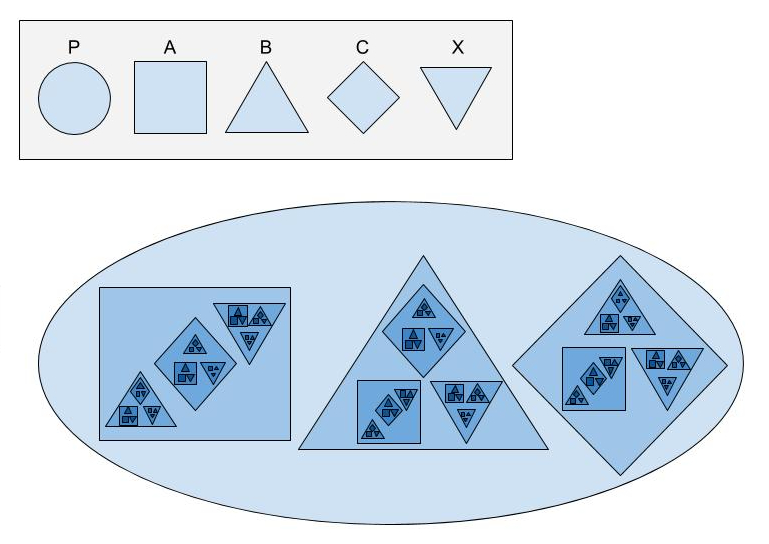

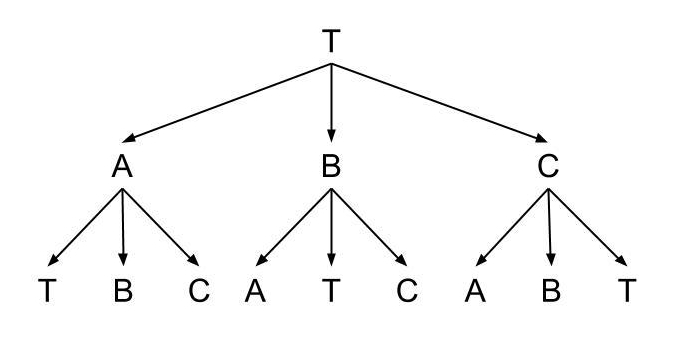

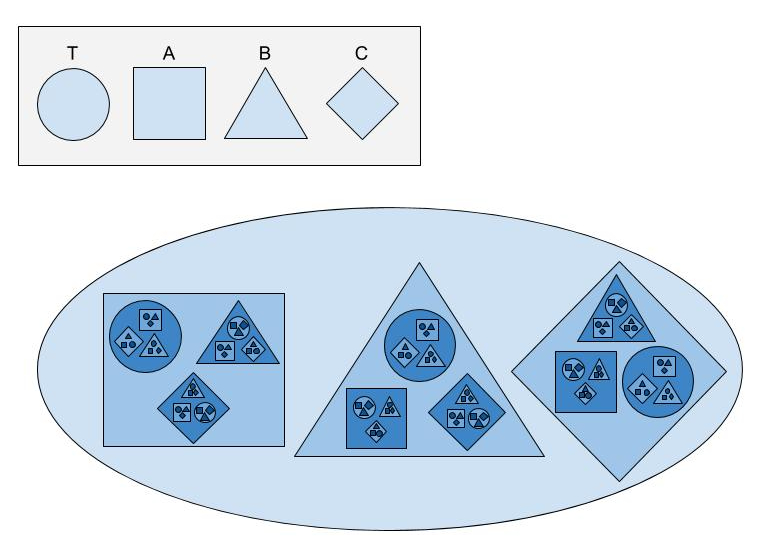

We can think about a game as a directed rooted tree. The vertices of the tree correspond to game states, and the edges correspond to the allowed moves. The root of the tree corresponds to the starting game state, and Player 1 gets to choose which edge to travel along first. From the new vertex, Player 2 decides the next edge to travel along. And so on. The tree’s leaves correspond to ending states of the game, and each leaf is labelled according to which player won in that ending state.
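To make the tree picture concrete, here is a minimal sketch in Python. The names `Node`, `play`, `tiny`, and `first` are my own illustrative choices, and the sketch only handles trees small enough to write down explicitly. Each node stores its outgoing edges as a dictionary from move labels to child nodes, and a leaf records which player won.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple


@dataclass
class Node:
    """A game state. A node with no moves is a leaf and carries a winner."""
    moves: Dict[str, "Node"] = field(default_factory=dict)
    winner: Optional[int] = None  # 1 or 2 at a leaf, None at inner vertices


# A strategy looks at the current state and returns the label of an edge.
Strategy = Callable[[Node], str]


def play(root: Node, p1: Strategy, p2: Strategy) -> Tuple[Optional[int], int]:
    """Walk from the root to a leaf, alternating players.

    Returns (winner, number of edges travelled).
    """
    node, turn, edges = root, 1, 0
    while node.moves:
        choose = p1 if turn == 1 else p2
        node = node.moves[choose(node)]
        turn = 3 - turn  # switch between player 1 and player 2
        edges += 1
    return node.winner, edges


# A toy game: Player 1 picks "a" or "b"; after "a", Player 2 picks "x" or "y".
tiny = Node({
    "a": Node({"x": Node(winner=1), "y": Node(winner=2)}),
    "b": Node(winner=2),
})


def first(node: Node) -> str:
    """A deliberately naive strategy: take the alphabetically first move."""
    return sorted(node.moves)[0]


print(play(tiny, first, first))  # (1, 2)
```

The `while` loop in `play` terminates exactly when the path it follows reaches a leaf, which is the property the definition of a finite game demands of every path.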

In this framework, what is a finite game? As I defined it above, a finite game is just any directed rooted tree such that every path starting at the root ends at a leaf after passing through finitely many edges. This corresponds perfectly to the idea that every possible playthrough of the game takes only finitely many turns. Notice that a finite game is not necessarily a finite tree! The game tree of a finite game is finite only if it’s also finitely branching (and, by König’s lemma, a finitely branching tree with no infinite path is always finite). In other words, for a game to have a finite tree requires not just that every playthrough is finitely long, but that each player always has only finitely many choices on their turn.
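Here is a small sketch of a finite game whose tree is infinite. The game is a hypothetical one of my own choosing: Player 1 opens by naming a natural number n, and then exactly n forced moves follow. The function names `moves` and `playthrough_length` are likewise just for illustration. Since the root has infinitely many children, the tree is generated lazily rather than stored.

```python
from itertools import count, islice
from typing import Iterator, Optional, Tuple

# None is the root; an int is the number of forced moves still to be played.
State = Optional[int]


def moves(state: State) -> Iterator[Tuple[str, State]]:
    """Lazily yield (label, next_state) for every edge leaving `state`."""
    if state is None:
        for n in count():          # infinitely many opening moves
            yield f"pick {n}", n
    elif state > 0:
        yield "tick", state - 1    # exactly one forced move
    # state == 0 is a leaf: nothing is yielded


def playthrough_length(opening: int) -> int:
    """Count the edges on the unique path that starts with 'pick <opening>'."""
    state: State = opening
    length = 1  # the opening move itself
    while True:
        edge = next(moves(state), None)
        if edge is None:           # reached a leaf
            return length
        _, state = edge
        length += 1


print(list(islice(moves(None), 3)))  # [('pick 0', 0), ('pick 1', 1), ('pick 2', 2)]
print(playthrough_length(3))         # 4
```

Every opening move leads to a playthrough of finite length, but the lengths grow without bound as the opening number grows, so the game is finite without having a finite tree or a maximum length.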

For instance, the game tree of Hypergame is not a finite tree, because Player 1 has infinitely many possible finite games to choose from on his first turn. How big exactly is the game tree of Hypergame? We know that we have to have a vertex corresponding to the start of any finite game, so it must be at least as large as the set of all finite games. But how large is this set?
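As a rough illustration of that structure, here is a sketch of a small fragment of Hypergame’s tree. It assumes a hand-picked menu of two toy games, which is purely my own simplification, since the real Hypergame offers every finite game. Trees are plain nested dictionaries with winner labels omitted, and the names `height`, `hypergame_fragment`, and `menu` are illustrative.

```python
from typing import Dict

# A tree maps each move label to the subtree it leads to; {} is a leaf.
Tree = Dict[str, "Tree"]


def height(tree: Tree) -> int:
    """Length of the longest playthrough (only sensible for finite trees)."""
    if not tree:
        return 0
    return 1 + max(height(sub) for sub in tree.values())


def hypergame_fragment(menu: Dict[str, Tree]) -> Tree:
    """Build a root with one edge per game on the menu.

    Each edge leads to the root of the chosen game, so the other player
    automatically makes that game's first move.
    """
    return {f"choose {name}": tree for name, tree in menu.items()}


menu: Dict[str, Tree] = {
    "coin": {"heads": {}, "tails": {}},
    "three-step": {"a": {"b": {"c": {}}}},
}

fragment = hypergame_fragment(menu)
print(height(fragment))  # 4
```

Choosing a game costs one extra edge, so a game that lasts n turns gives a playthrough of n+1 turns in the fragment, matching the count from earlier.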

This is where we run into problems. The game tree of a finite game can be arbitrarily large. Consider the game which starts by Player 1 choosing any real number and then immediately losing. The height of the game tree is 1, but its width is the cardinality of the continuum. Similarly, for any set X we can find a game whose tree has cardinality at least |X|. This means that there are finite games of arbitrarily large cardinalities. But then as a corollary to the nonexistence of a largest cardinal, we know that there is no set of all finite games! If there were such a set, the union U of all its game trees would itself be a set, and every game tree would have cardinality at most |U|; yet there is a finite game whose tree is at least as large as the power set of U, which is strictly larger than U. And this implies that Hypergame has no game tree! More precisely, there is no set corresponding to the game tree of Hypergame as we defined it.

Couldn’t we instead think about Hypergame as a proper class? Sure! But then when we choose a finite game in our first move, we couldn’t be picking Hypergame, as the property “is a finite game” would only apply to sets and not proper classes. This means that we can’t actually select Hypergame as our first move! And so we avoid the paradoxical conclusion that we can keep picking Hypergame ad infinitum.

I find it quite fascinating that the seemingly innocent notion of a finite game can lead us into paradoxes involving proper classes!