- The tallest man in the world is tall.

- If somebody is one nanometer shorter than a tall person, then they are themselves tall.

If the word ‘tall’ is to mean anything, then it must commit us to at least these two premises. But from the two it follows by mathematical induction that a two-foot infant is tall, that a one-inch bug is tall, and, worst of all, that a person zero inches in height is tall. Why? If the tallest man in the world is tall (let’s name him Fred), then he would still be tall if he were shrunk by a single nanometer. We can call this new person ‘Fred – 1 nm’. And since ‘Fred – 1 nm’ is tall, so is ‘Fred – 2 nm’. And then so is ‘Fred – 3 nm’. Et cetera, until absurdity ensues.
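The induction can be sketched in a few lines of Python. This is only an illustration: Fred’s height of 2.5 m is a made-up figure, and the names `sorites_chain` and `steps_to_reach` are hypothetical helpers, not anything standard. Heights are stored as whole nanometers so that each step is exact.

```python
from itertools import islice

FRED_NM = 2_500_000_000    # assumed height for Fred: 2.5 m, in nanometers
INFANT_NM = 609_600_000    # two feet = 24 in * 25,400,000 nm per inch
BUG_NM = 25_400_000        # one inch in nanometers


def sorites_chain(start_nm):
    """Yield every height the two premises declare tall, from Fred downward."""
    height = start_nm      # premise 1: the tallest man is tall
    while height >= 0:
        yield height
        height -= 1        # premise 2: one nanometer shorter is still tall


def steps_to_reach(target_nm, start_nm=FRED_NM):
    """Count the applications of premise 2 needed to call target_nm tall."""
    if not 0 <= target_nm <= start_nm:
        raise ValueError("target must lie between 0 and the starting height")
    return start_nm - target_nm


# Fred, 'Fred - 1 nm', 'Fred - 2 nm'
first_three = list(islice(sorites_chain(FRED_NM), 3))
print(first_three)

for label, height in [("infant", INFANT_NM), ("bug", BUG_NM), ("zero", 0)]:
    print(label, "is tall after", steps_to_reach(height), "steps")
```

Nothing in the premises caps how many times the second one may be applied, so every height from Fred’s down to zero is reached after a finite number of steps. The chain is consumed lazily because walking all 2.5 billion steps in a loop would be slow and would add nothing to the argument.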

So what went wrong? Surely the first premise can’t be wrong – who could the word apply to if not the tallest man in the world?

The second seems to be the only candidate for denial. But this should make us deeply uneasy; the implication of such a denial is that there is a one-nanometer-wide range of heights within which somebody makes the transition from being completely not tall to being completely tall. Somebody exactly at this line could be wavering back and forth between being tall and not tall every time a cell dies or divides, and every time a tiny draft rearranges the tips of their hairs.

Let’s be clear just how tiny a nanometer really is: A sheet of paper is about a hundred thousand nanometers thick. That’s more than the number of inches that make up a mile. If the word ‘tall’ means anything at all, this height difference just can’t make a difference in our evaluation of tallness.
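The comparison is easy to check. A quick sketch, taking the hundred-thousand-nanometer figure for paper from above as given, and using the exact conversions of 12 inches per foot, 5,280 feet per mile, and 25.4 mm per inch:

```python
PAPER_THICKNESS_NM = 100_000   # figure used in the text (about 0.1 mm)
INCHES_PER_MILE = 5_280 * 12   # feet per mile times inches per foot
NM_PER_INCH = 25_400_000       # 25.4 mm per inch, 1,000,000 nm per mm

print(INCHES_PER_MILE)                       # inches in one mile
print(PAPER_THICKNESS_NM > INCHES_PER_MILE)  # more nanometers in a sheet than inches in a mile?
print(PAPER_THICKNESS_NM / INCHES_PER_MILE)  # miles covered by 100,000 inches
```

So a nanometer stands to the thickness of a sheet of paper roughly as an inch stands to a mile and a half.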

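So, with the second premise secure and the conclusion of the induction absurd, the only thing left to give up is the first premise. We are led to the conclusion: Fred is not tall. And if the tallest man on the planet isn’t tall, then nobody is tall. Our concept of tallness is just a useful idea that falls apart under close examination.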

This is the infamous Sorites paradox. What else is vulnerable to versions of the Sorites paradox? Almost every concept that we use in our day-to-day life! Adulthood, intelligence, obesity, being cold, personhood, wealth, and on and on. It’s harder to find concepts that aren’t affected than ones that are!

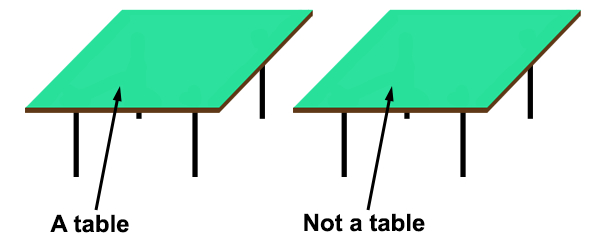

The Sorites paradox is usually seen in discussions of properties, but it can equally well be applied to discussions of objects. This application leads us to a view of the world that differs wildly from our common sense view. Let’s take a standard philosophical case study: the table. What is it for something to be a table? What changes to a table make it no longer a table?

Whatever the answers to these questions are, they will hopefully embody our common sense notions about tables and allow us to make the statements that we ordinarily want to make about tables. One such common sense notion involves what it takes for a table to cease being a table; presumably little changes in the table are allowed, while big changes (cleaving it into small pieces) are not. But here we run into the problem of vagueness.

If X is a table, then X would still be a table if it lost a tiny bit of the matter constituting it. Like before, we’ll take this to the extreme to maximize its intuitive plausibility: If a single atom is shed from a table, it’s still a table. Denial of this is even worse than it was before; if changes by single atoms could change table-hood, we would be in a position where we should be constantly skeptical of whether objects are tables, given the microscopic changes that are happening to ordinary tables all the time.

And so we are led inevitably to the conclusion that single atoms are tables, and even that empty space is a table. (Iteratively remove single atoms from a table until it has become arbitrarily small.) Either that, or there are no tables. I take this second option to be preferable.

How far do these arguments reach? It seems like most or all macroscopic objects are vulnerable to them. After all, we don’t change our view of macroscopic objects that undergo arbitrarily small losses of constituent material. And this leads us to a worldview in which the things that actually exist match up with almost none of the things that our common-sense intuitions tell us exist: tables, buildings, trees, planets, computers, people, and so on.

But is everything eliminated? Plausibly not. What can be said about a single electron, for instance, that would lead to a continuity premise? Probably nothing; electrons are defined by a set of intrinsic properties, none of which can differ to any degree while the particle still remains an electron. In general, all of the microscopic entities that are thought to fundamentally compose everything else in our macroscopic world will be (seemingly) invulnerable to attack by a version of the Sorites paradox.

The conclusion is that some form of eliminativism is true (objects don’t exist, but their lowest-level constituents do). I think that this is actually the right way to look at the world, and is supported by a host of other considerations besides those in this post.

Closing comments

- The subjectivity of ‘tall’ doesn’t remove the paradox. What’s in question isn’t the agreement between multiple people about what tall means, but the coherence of the concept as used by a single person. If a single person agrees that Fred is tall, and that arbitrarily small height differences can’t make somebody go from not tall to tall, then they are led straight into the paradox.

- The most common response to this puzzle I’ve noticed is just to balk and laugh it off as absurd, without actually addressing the argument. Yes, the conclusion is absurd, which is exactly why the paradox is powerful! If you can resolve the paradox and erase the absurdity, you’ll have done more than 2,000 years of philosophers and mathematicians have managed to do!