This is the third part in a three-part series on the foundations of statistical mechanics.

- The Necessity of Statistical Mechanics for Getting Macro From Micro

- Is The Fundamental Postulate of Statistical Mechanics A Priori?

- The Central Paradox of Statistical Mechanics: The Problem of The Past

— — —

What I’ve argued for so far is the following set of claims:

- To successfully predict the behavior of macroscopic systems, we need something above and beyond the microphysical laws.

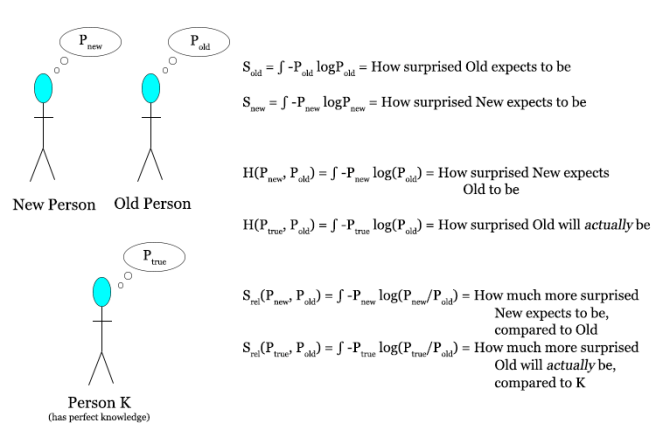

- This extra thing we need is the fundamental postulate of statistical mechanics, which assigns a uniform distribution over the region of phase space consistent with what you know about the system. This postulate allows us to prove all the things we want to say about the future, such as “gases expand”, “ice cubes melt”, “people age” and so on.

- This fundamental postulate is not justifiable on a priori grounds, as it is fundamentally an empirical claim about how frequently different micro states pop up in our universe. Different initial conditions give rise to different such frequencies, so that a claim to a priori access to the fundamental postulate is a claim to a priori access to the precise details of the initial condition of the universe.

There’s just one problem with all this… apply our postulate to the past, and everything breaks.

Notice that I said that the fundamental postulate allows us to prove all the things we want to say about the future. That wording was chosen carefully. What happens if you try to apply the microphysical laws + the fundamental postulate to predict the past of some macroscopic system? It turns out that all hell breaks loose. Gases spontaneously contract, ice cubes form from puddles of water, and brains pop out of thermal equilibrium.

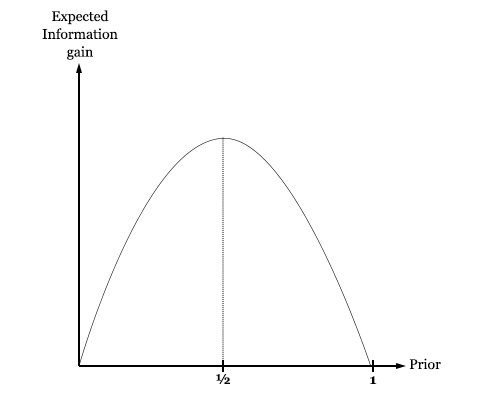

Why does this happen? Very simply, we start with two fully time-reversible premises (the microphysical laws and the fundamental postulate). We apply them to present knowledge of some state, the description of which does not specify a special time direction. So any conclusion we get must, as a matter of logic, be time-reversible as well! You can’t start with premises that treat the past as the mirror image of the future and, using just the rules of logical inference, derive a conclusion that treats the past as fundamentally different from the future. And what this means is that if you conclude that entropy increases towards the future, then you must also conclude that entropy increases towards the past. Which is to say that we came from a higher entropy state, and ultimately (over a long enough time scale and insofar as you think that our universe is headed to thermal equilibrium) from thermal equilibrium.
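To make the symmetry concrete, here is a minimal sketch using the Kac ring, a standard toy model that is deterministic and exactly reversible. It is a stand-in chosen for illustration, not a model of any real gas, and every name and parameter in it (`step`, `run_demo`, the ring size, the fraction of marked edges) is an assumption of this sketch. We pick a microstate uniformly at random from those compatible with a fixed, moderately low-entropy "present" macrostate, then run the same dynamics both forward and backward in time and compare the Boltzmann entropy at each end.

```python
import math
import random


def step(spins, marked, forward=True):
    """One Kac-ring update. Every ball moves one site around the ring and
    flips sign when it crosses a marked edge (edge i joins sites i and i+1).
    The backward update is the exact inverse of the forward one."""
    n = len(spins)
    new = [0] * n
    for i in range(n):
        sign = -1 if marked[i] else 1
        if forward:
            new[(i + 1) % n] = sign * spins[i]   # ball at i moves to i+1
        else:
            new[i] = sign * spins[(i + 1) % n]   # ball at i+1 moves back to i
    return new


def boltzmann_entropy(spins):
    """log of the number of microstates sharing this count of +1 balls."""
    n = len(spins)
    k = sum(1 for s in spins if s == 1)
    return math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)


def sample_microstate(n, n_up, rng):
    """Uniform draw from the microstates with exactly n_up balls set to +1."""
    spins = [1] * n_up + [-1] * (n - n_up)
    rng.shuffle(spins)
    return spins


def evolve(spins, marked, steps, forward):
    for _ in range(steps):
        spins = step(spins, marked, forward)
    return spins


def run_demo(n=2000, n_up=1500, flip_fraction=0.1, steps=20, seed=0):
    """Return (entropy in the past, entropy now, entropy in the future)."""
    rng = random.Random(seed)
    marked = [rng.random() < flip_fraction for _ in range(n)]
    now = sample_microstate(n, n_up, rng)  # the "fundamental postulate" step
    future = evolve(now, marked, steps, forward=True)
    past = evolve(now, marked, steps, forward=False)
    return boltzmann_entropy(past), boltzmann_entropy(now), boltzmann_entropy(future)


s_past, s_now, s_future = run_demo()
print("past:", round(s_past, 1), "now:", round(s_now, 1), "future:", round(s_future, 1))
```

Nothing in the sampling step or in the dynamics singles out a direction of time, so the retrodicted past looks just like the predicted future: higher entropy than the present.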

Let’s flesh this argument out a little more. Consider a half-melted ice cube sitting in the sun. The microphysical laws + the fundamental postulate tell us that the region of phase space consisting of states in which the ice cube is entirely melted is much much much larger than the region of phase space in which it is fully unmelted. So much larger, in fact, that it’s hard to express using ordinary English words. This is why we conclude that any trajectory through phase space that passes through the present state of the system (the half-melted cube) is almost certainly going to quickly move towards the regions of phase space in which the cube is fully melted. But for the exact same reason, if we look at the set of trajectories that pass through the present state of the system, the vast vast vast majority of them will have come from the fully-melted regions of phase space. And what this means is that the inevitable result of our calculation of the ice cube’s history will be that a few moments ago it was a puddle of water, and then it spontaneously solidified and formed into a half-melted ice cube.
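To get a feel for "much much much larger", here is a rough back-of-the-envelope sketch. It uses Boltzmann's relation S = k_B ln W, so the ratio of phase-space volumes is exp(ΔS / k_B). The specific numbers are assumptions for illustration only: a 20 g ice cube, a latent heat of fusion of about 334 J/g, melting at 273.15 K, and surroundings treated as a heat bath at 300 K. The function name is hypothetical.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant (exact SI value)


def log10_phase_volume_ratio(mass_g=20.0, latent_heat_j_per_g=334.0,
                             t_melt_k=273.15, t_env_k=300.0):
    """Base-10 log of (volume of melted region) / (volume of frozen region).

    Assumed model: the cube absorbs its heat of fusion at t_melt_k while the
    surroundings give up the same heat at t_env_k.
    """
    heat_j = mass_g * latent_heat_j_per_g
    # Net entropy change of cube plus surroundings, in J/K.
    delta_s = heat_j / t_melt_k - heat_j / t_env_k
    # W_melted / W_frozen = exp(delta_s / k_B); convert the exponent to base 10.
    return delta_s / (BOLTZMANN_J_PER_K * math.log(10))


exponent = log10_phase_volume_ratio()
print(f"melted region is larger by a factor of about 10^({exponent:.2e})")
```

With these assumed inputs the ratio is not merely a number with many digits. The exponent itself is a number with over twenty digits.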

This argument generalizes! What’s the most likely past history of you, according to statistical mechanics? It’s not that the solar system coalesced from a haze of gases strewn through space by a past supernova, such that a planet would form in the Goldilocks zone and develop life, which would then gradually evolve through natural selection to the point where you are sitting in whatever room you’re sitting in reading this post. This trajectory through phase space is enormously unlikely. The much much much more likely past trajectory of you through phase space is that a little while ago you were a bunch of particles dispersed through a universe at thermal equilibrium, which happened to spontaneously coalesce into a brain that has time to register a few moments of experience before dissipating back into chaos. “What about all of my memories of the past?” you say. As it happens, the most likely explanation of these memories is not that they are veridical copies of real happenings in the universe, but that they are illusions manufactured from randomness.

Basically, if you buy everything I’ve argued in the first two parts, then you are forced to conclude that the universe is most likely near thermal equilibrium, with your current experience of it arising as a spontaneous dip in entropy, just enough to produce a conscious brain but no more. There are at least two big problems with this view.

Problem 1: This conclusion is, we think, extremely empirically wrong! The ice cube in front of you didn’t spontaneously form from a puddle of water, uncracked eggs weren’t a moment ago scrambled, and your memories are to some degree veridical. If you really believe that you are merely a spontaneous dip in entropy, then your prediction for the next minute will be the gradual dissolution of your brain and loss of consciousness. Now, wait a minute and see if this happens. Still here? Good!

Problem 2: The conclusion cannot be simultaneously believed and justified. If you think that you’re a thermal fluctuation, then you shouldn’t credit any of your memories as telling you anything about the world. But then your whole justification for coming to the conclusion in the first place (the experiments that led us to conclude that physics is time-reversible and that the fundamental postulate is true) is undermined! Either you believe it without justification, or you don’t believe it despite justification. Said another way, no reflective equilibrium exists at an entropy minimum. David Albert calls this peculiar epistemic state cognitively unstable, as it’s not clear where exactly it should leave you.

Reflect for a moment on how strange a situation we are in here. Starting from very basic observations of the world, involving its time-reversibility on the micro scale and the increase in entropy of systems, we see that we are inevitably led to the conclusion that we are almost certainly thermal fluctuations, brains popping out of the void. I promise you that no trick has been pulled here; this really is the state of the philosophy of statistical mechanics! The big issue is how to deal with this strange situation.

One approach is to say the following: Our problem is that our predictions work towards the future but not the past. So suppose that we simply add as a new fundamental postulate the proposition that long long ago the universe had an incredibly low entropy. That is, suppose that instead of just starting with the microphysical laws and the fundamental postulate of statistical mechanics, we added a third claim: the Past Hypothesis.

The Past Hypothesis should be understood as an augmentation of our Fundamental Postulate. Taken together, the two postulates say that our probability distribution over possible microstates should not be uniform over phase space. Instead, it should be what you get when you take the uniform distribution, and then condition on the distant past being extremely low entropy. This process of conditioning clearly privileges one direction of time over the other, and so the symmetry is broken.
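One way to write this conditioning down is sketched below. The notation is introduced here for illustration and is not taken from the earlier posts: μ is the uniform (Liouville) measure on phase space, M_now is the region compatible with what we currently know, M_past is the assumed low-entropy macro-region at a time T in the distant past, and φ_T is the map that evolves a microstate forward by T under the microphysical laws. For any set A of microstates:

$$
P_{\text{FP}}(A) = \frac{\mu(A \cap M_{\text{now}})}{\mu(M_{\text{now}})},
\qquad
P_{\text{FP+PH}}(A) = \frac{\mu\big(A \cap M_{\text{now}} \cap \phi_{T}(M_{\text{past}})\big)}{\mu\big(M_{\text{now}} \cap \phi_{T}(M_{\text{past}})\big)}
$$

The first expression is the fundamental postulate on its own. The second keeps only those present microstates that evolved out of the low-entropy region, and since that restriction refers to the past and not the future, it is where the time asymmetry enters.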

It’s worth reflecting for a moment on the strangeness of the epistemic status of the Past Hypothesis. It happens that we have over time accumulated a ton of observational evidence for the occurrence of the Big Bang. But none of this evidence has anything to do with our reasons for accepting the Past Hypothesis. If we buy the whole line of argument so far, our conclusion that something like a Big Bang occurred becomes something that we are forced to believe for deep logical reasons, on pain of cognitive instability and self-undermining belief. Anybody who denies that the Big Bang (or some similar enormously low-entropy past state) occurred has to contend with their view collapsing in self-contradiction upon observing the physical laws!