Today we’re going to talk about a topic that’s very close to my heart: entropy. We’ll start somewhere that might seem unrelated: surprise.

Suppose that we wanted to quantify the intuitive notion of surprise. How should we do that?

We’ll start by analyzing a few base cases.

First! If something happens and you already were completely certain that it would happen, then you should be completely unsurprised.

That is, if event E happens, and you had a credence P(E) = 100% in it happening, then your surprise S should be zero.

S(1) = 0

Second! If something happens that you were totally sure was impossible, with 100% credence, then you should be infinitely surprised.

That is, if E happens and P(E) = 0, then S = ∞.

S(0) = ∞

So far, it looks like your surprise S should be a function of your credence P in the event you are surprised at. That is, S = S(P). We also have the constraints that S(1) = 0 and S(0) = ∞.

There are many candidates for a function like this, for example: S(P) = 1/P – 1, S(P) = -log(P), S(P) = cot(πP/2). So we need more constraints.

Third! If an event E1 happens that is surprising to degree S1, and then another event E2 happens with surprisingness S2, then your surprise at the combination of these events should be S1 + S2.

I.e., we want surprise to be additive. If S(P(E1)) = S1 and S(P(E2 | E1)) = S2, then S(P(E1 & E2)) = S1 + S2.

Since P(E1 & E2) = P(E1) P(E2 | E1), this entails a new constraint on our surprise function. Writing P = P(E1) and Q = P(E2 | E1), we get:

S(PQ) = S(P) + S(Q)

Fourth, and finally! We want our surprise function to be continuous – free from discontinuous jumps. If your credence that the event will happen changes by an arbitrarily small amount, then your surprise if it does happen should also change by an arbitrarily small amount.

S(P) is continuous.

These four constraints now fully specify the form of our surprise function, up to a multiplicative constant. What we find is that the only function satisfying these constraints is the logarithm:

S(P) = k logP, where k is some negative number
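For readers who want to see why the logarithm is forced, here is a sketch of the standard argument. The helper function f and the constant c are names introduced only for this derivation, and the sketch assumes 0 < P ≤ 1.

```latex
\begin{align*}
  &\text{Define } f(x) = S(e^{-x}) \text{ for } x \ge 0. \\
  &\text{Additivity gives } f(x + y) = S(e^{-x} e^{-y}) = S(e^{-x}) + S(e^{-y}) = f(x) + f(y). \\
  &\text{The only continuous solutions of } f(x + y) = f(x) + f(y) \text{ are } f(x) = c\,x. \\
  &\text{Substituting } x = -\ln P: \quad S(P) = -c \ln P = k \ln P, \quad k = -c. \\
  &\text{Then } S(1) = 0 \text{ holds automatically, and } S(P) \to \infty \text{ as } P \to 0 \text{ requires } c > 0, \text{ i.e. } k < 0.
\end{align*}
```

Changing the base of the logarithm only rescales k, which is why the result is unique up to a multiplicative constant.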

Taking the simplest choice of k, we end up with a unique formalization of the intuitive notion of surprise:

S(P) = – logP
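As a quick sanity check, here is a minimal Python sketch comparing the logarithm with the rival candidate 1/P – 1 mentioned earlier. The function names `surprise` and `alt_surprise` are my own, and the test values P = 0.5 and Q = 0.25 are arbitrary. Both candidates pass the boundary constraints, but only the logarithm passes the additivity test.

```python
import math

def surprise(p: float) -> float:
    """Surprise log(1/p) = -log(p), in natural-log units; infinite for p == 0."""
    if p == 0:
        return math.inf
    return math.log(1 / p)

def alt_surprise(p: float) -> float:
    """The rival candidate 1/p - 1; infinite for p == 0."""
    if p == 0:
        return math.inf
    return 1 / p - 1

# Both candidates satisfy S(1) = 0 and S(0) = infinity.
assert surprise(1) == 0 and alt_surprise(1) == 0
assert surprise(0) == math.inf and alt_surprise(0) == math.inf

# Additivity check S(PQ) = S(P) + S(Q) at one arbitrary pair of credences.
p, q = 0.5, 0.25
log_is_additive = math.isclose(surprise(p * q), surprise(p) + surprise(q))
alt_is_additive = math.isclose(alt_surprise(p * q), alt_surprise(p) + alt_surprise(q))

print(log_is_additive, alt_is_additive)  # prints: True False
```

A single pair of values cannot prove additivity in general, of course, but one failing pair is enough to rule a candidate out.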

To summarize what we have so far: Four basic desiderata for our formalization of the intuitive notion of surprise have led us to a single simple equation.

This equation that we’ve arrived at turns out to be extremely important in information theory. It is, in fact, just the definition of the amount of information you gain by observing E. This reveals to us a deep connection between surprise and information. They are in an important sense expressing the same basic idea: more surprising events give you more information, and unsurprising events give you little information.

Let’s get a little better numerical sense of this formalization of surprise/information. What does a single unit of surprise or information mean? If we take the logarithm to be base 2, then S(1/2) = – log(1/2) = 1, so a single unit of surprise, or bit of information, corresponds to the observation of an event that you had a 50% expectation of. This also corresponds to ruling out 50% of the weight of the other events you thought you might have observed. In essence, each bit of information you receive / surprise you experience corresponds to the total weight of possibilities being cut in half.

Two bits of information narrow the possibilities to one-fourth. Three cut out all but one-eighth. And so on. For a rational agent, the process of receiving more information or of being continuously surprised is the process of whittling down your models of reality to a smaller and better set!
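The halving pattern is easy to check numerically. This is a small sketch assuming base-2 logarithms, so that surprise is measured in bits; the name `surprise_bits` is just for illustration.

```python
import math

def surprise_bits(p: float) -> float:
    """Surprise in bits, -log2(p). Assumes 0 < p <= 1."""
    return -math.log2(p)

for p in (1 / 2, 1 / 4, 1 / 8):
    bits = surprise_bits(p)
    # 2 ** -bits recovers the fraction of possibilities left standing.
    print(f"P = {p}: {bits} bits, fraction remaining = {2 ** -bits}")
```

Each extra bit halves the fraction remaining: 1 bit leaves 0.5, 2 bits leave 0.25, 3 bits leave 0.125.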

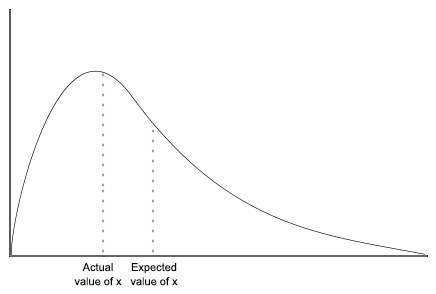

The next great step forward is to use our formalization of surprise to talk not just about how surprised you are once an event happens, but how surprised you expect to be. If you have a credence of P in an event happening, then you expect a degree of surprise S(P) with credence P. In other words, the expected surprise you have with respect to that particular event is:

Expected surprise = – P logP

When summed over all of the mutually exclusive events that could occur, we get the following expression:

Total expected surprise = – ∑i Pi logPi
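Here is a minimal sketch of that sum in Python. It assumes base-2 logarithms (so the answer is in bits) and that the credences cover mutually exclusive, exhaustive outcomes; the function name `entropy_bits` and the two example coins are my own choices.

```python
import math
from typing import Sequence

def entropy_bits(probs: Sequence[float]) -> float:
    """Total expected surprise, -sum(p * log2(p)), in bits.

    Outcomes with zero credence contribute nothing, following the
    usual convention that 0 * log(0) is treated as 0.
    """
    if not math.isclose(sum(probs), 1.0):
        raise ValueError("credences must sum to 1")
    return sum(-p * math.log2(p) for p in probs if p > 0)

fair_coin = entropy_bits([0.5, 0.5])    # exactly 1 bit
biased_coin = entropy_bits([0.9, 0.1])  # roughly 0.47 bits

print(fair_coin, biased_coin)
```

The fair coin carries a full bit of expected surprise, while the heavily biased coin carries less than half a bit: you already mostly know what it will do.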

This expression should look very very familiar to you. It’s one of the most important quantities humans have discovered…

ENTROPY!!

Now you understand the title of this post. Quite literally, entropy is total expected surprise!

Entropy = Total expected surprise

By the way, you might be wondering if this is the same entropy as you hear mentioned in the context of physics (that thing that always increases). Yes, it is! The entropy of statistical mechanics has exactly this form, differing only by a constant factor (Boltzmann’s constant) and the choice of logarithm base. This means that we can describe the Second Law of Thermodynamics as a conspiracy by the universe to always be as surprising as possible to us! There are a bunch of ways to explore the exact implications of this, but that’s a subject for another post.

Getting back to the subject of this post, we can now make another connection. Surprise is information. Total expected surprise is entropy. And entropy is a measure of uncertainty.

If you think about this for a moment, this should start to make sense. If your model of reality is one in which you expect to be very surprised in the next moment, then you are very uncertain about what is going to happen in the next moment. If, on the other hand, your model of reality is one in which you expect zero surprise in the next moment, then you are completely certain!
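To make this concrete, here is a small sketch comparing three hypothetical models of a four-outcome situation: one that is completely certain, one that leans toward a single outcome, and one that is maximally unsure. The distributions and the name `entropy_bits` are illustrative assumptions, with base-2 logarithms throughout.

```python
import math

def entropy_bits(probs):
    """Total expected surprise in bits; zero-credence outcomes are skipped."""
    return sum(-p * math.log2(p) for p in probs if p > 0)

certain = entropy_bits([1.0, 0.0, 0.0, 0.0])  # no expected surprise at all
leaning = entropy_bits([0.7, 0.1, 0.1, 0.1])  # somewhere in between
uniform = entropy_bits([0.25] * 4)            # 2 bits, the most for 4 outcomes

print(certain, leaning, uniform)
```

The expected surprise rises from zero for the certain model to two bits for the uniform one, tracking how uncertain each model is about what happens next.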

Thus we see the beautiful and deep connection between surprise, information, entropy, and uncertainty. The overlap of these four concepts is rich with potential for exploration. We could go the route of model selection and discuss notions like mutual information, information divergence, and relative entropy, and how they relate to the virtues of predictive accuracy and model simplicity. We could also go the route of epistemology and discuss the notion of epistemic humility, choosing your beliefs to maximize your uncertainty, and the connection to Bayesian epistemology. Or, most tantalizingly, we could go the route of physics and explore the connection between this highly subjective sense of entropy as surprise/uncertainty, and the very concrete notion of entropy as a physical quantity that characterizes the thermal properties of systems.

Instead of doing any of these, I’ll end here, in the hope that I’ve conveyed some of the coolness of this intersection of philosophy, statistics, and information theory.