There are lots of things that I don’t know, like, say, what the birth rate in Sweden is or what the effect of poverty on IQ is. There are also lots of things that I find really confusing and hard to understand, like quantum field theory and monetary policy. There’s also a special category of things that I find conceptually puzzling. These things aren’t difficult to grasp because the facts about them are difficult to understand or require learning complicated jargon. Instead, they’re difficult to grasp because I suspect that I’m confused about the concepts in use.

This is a much deeper level of confusion. It can’t be adjudicated by just reading lots of facts about the subject matter. It requires philosophical reflection on the nature of these concepts, which can sometimes leave me totally confused about everything and grasping for the solid ground of mere factual ignorance.

As such, it feels like a big deal when something I’ve been conceptually puzzled about becomes clear. I want to compile a list for future reference of things that I’m currently conceptually puzzled about and things that I’ve become un-puzzled about. (This is not a complete list, but I believe it touches on the major themes.)

Things I’m conceptually puzzled about

What is the relationship between consciousness and physics?

I’ve written about this here.

Essentially, at this point every available viewpoint on consciousness seems wrong to me.

Eliminativism amounts to a denial of pretty much the only thing that we can be sure can’t be denied – that we are having conscious experiences. Physicalism entails the claim that facts about conscious experience can be derived from laws of physics, which is wrong as a matter of logic.

Dualism entails that the laws of physics by themselves cannot account for the behavior of the matter in our brains, which is wrong. And epiphenomenalism entails that our beliefs about our own conscious experience are almost certainly wrong, and are no better representations of our actual conscious experiences than random chance.

How do we make sense of decision theory if we deny libertarian free will?

I’ve written about this here and here.

Decision theory is ultimately about finding the decision D that maximizes expected utility EU(D). But to do this calculation, we have to decide what the set of possible decisions we are searching is.

Make this set too large, and you end up getting fantastical and impossible results (like that the optimal decision is to snap your fingers and make the world into a utopia). Make it too small, and you end up getting underwhelming results (in the extreme case, you just get that the optimal decision is to do exactly what you are going to do, since this is the only thing you can do in a strictly deterministic world).

We want to find a nice middle ground between these two – a boundary where we can say “inside here are the things that are actually possible for us to do, and outside are those that are not.” But any principled distinction between what’s in the set and what’s not must be based on some conception of some actions being “truly possible” for us, and others being truly impossible. I don’t know how to make this distinction in the absence of a robust conception of libertarian free will.
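A toy sketch of how much the answer depends on the choice of decision set. The option names and utility numbers are made up purely for illustration; nothing here says which set is the right one, which is exactly the puzzle.

```python
from typing import Callable, Iterable

def best_decision(options: Iterable[str], expected_utility: Callable[[str], float]) -> str:
    """Return the option with the highest expected utility among `options`."""
    return max(options, key=expected_utility)

# Hypothetical expected utilities, invented for this example.
EU = {
    "snap fingers and create utopia": 1e9,
    "donate to charity": 50.0,
    "stay home": 1.0,
}

too_large = list(EU)                        # includes the impossible
middle = ["donate to charity", "stay home"]  # some intuitive notion of "possible"
too_small = ["stay home"]                   # only what you will in fact do

for name, options in [("too large", too_large), ("middle", middle), ("too small", too_small)]:
    print(name, "->", best_decision(options, EU.get))
```

The maximization itself is trivial. All of the philosophical work is hidden in which list gets passed in.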

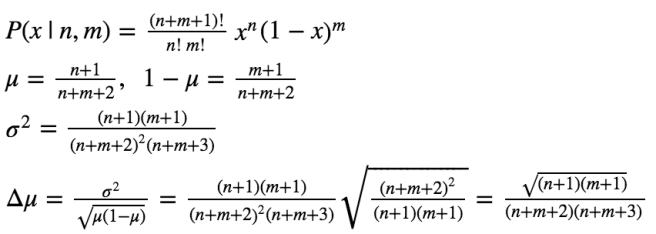

Are there objectively right choices of priors?

I’ve written about this here.

If you say no, then there are no objectively right answers to questions like “What should I believe given the evidence I have?” And if you say yes, then you have to deal with thought experiments like the cube problem, where any choice of priors looks arbitrary and unjustifiable.

(If you are going to be handed a cube, and all you know is that it has a volume less than 1 cm³, then setting maximum entropy priors over volumes gives different answers than setting maximum entropy priors over side areas or side lengths. This means that what qualifies as “maximally uncertain” depends on whether we frame our reasoning in terms of side length, areas, or cube volume. Other approaches besides MaxEnt have similar problems of concept dependence.)
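To make the disagreement concrete, here is a small sketch. It assumes that the maximum entropy prior on a bounded interval is the uniform one, so that "maximally uncertain" means uniform over (0, 1) in whichever quantity we pick (side length in cm, face area in cm², or volume in cm³). The function name is my own.

```python
from fractions import Fraction

def prob_side_below(s: Fraction, power: int) -> Fraction:
    """P(side length < s) when side**power is uniform on (0, 1).

    power=1: uniform over side length
    power=2: uniform over face area
    power=3: uniform over volume
    """
    # side < s  is equivalent to  side**power < s**power, and the CDF of a
    # uniform(0, 1) variable at x is just x.
    return s ** power

half = Fraction(1, 2)
by_length = prob_side_below(half, 1)
by_area = prob_side_below(half, 2)
by_volume = prob_side_below(half, 3)

print(by_length, by_area, by_volume)
```

The same question ("how likely is it that the side is shorter than half a centimeter?") gets the answers 1/2, 1/4, and 1/8 depending on which description of the cube we chose to be uniform over.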

How should we deal with infinities in decision theory?

I wrote about this here, here, here, and here.

The basic problem is that expected utility theory does great at delivering reasonable answers when the rewards are finite, but becomes wacky when the rewards become infinite. There are a huge number of examples of this. For instance, in the St. Petersburg paradox, you are given the option to play a game with an infinite expected payout, suggesting that you should buy in to the game no matter how high the cost. You end up making obviously irrational choices, such as spending $1,000,000 on the hope that a fair coin will land heads 20 times in a row. Variants of this involve the inability of EU theory to distinguish between obviously better and worse bets that have infinite expected value.
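A quick sketch of where the infinity comes from. It assumes one common version of the game (the payout is 2^k dollars if the first tails shows up on toss k), which may differ in detail from other presentations. Capping the game at a maximum number of tosses shows the expected payout growing without bound, one dollar per allowed toss.

```python
from fractions import Fraction

def truncated_expected_payout(max_tosses: int) -> Fraction:
    """Expected payout if the game is cut off after `max_tosses` tosses.

    Assumed rules: the first tails on toss k pays 2**k dollars, which
    happens with probability 2**-k, so every term contributes exactly 1.
    """
    return sum(Fraction(1, 2**k) * 2**k for k in range(1, max_tosses + 1))

def prob_heads_in_a_row(n: int) -> Fraction:
    """Chance that a fair coin lands heads n times in a row."""
    return Fraction(1, 2**n)

for cap in (10, 20, 1000):
    print(cap, truncated_expected_payout(cap))

print(float(prob_heads_in_a_row(20)))
```

Each extra allowed toss adds a dollar of expected value, even though the chance of getting a run of 20 heads is already less than one in a million.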

And Pascal’s mugging is an even worse case. Roughly speaking, a person comes up to you and threatens you with infinite torture if you don’t submit to them and give them 20 dollars. Now, the probability that this threat is credible is surely tiny. But it is non-zero (as long as you don’t think it is literally logically impossible for this threat to come true)!

An infinite penalty times a non-zero probability, however tiny, is still an infinite expected penalty. So we stand to gain an infinite expected utility by just handing over the 20 dollars. This seems ridiculous, but I don’t know any reasonable formalization of decision theory that allows me to refute it.

Is causality fundamental?

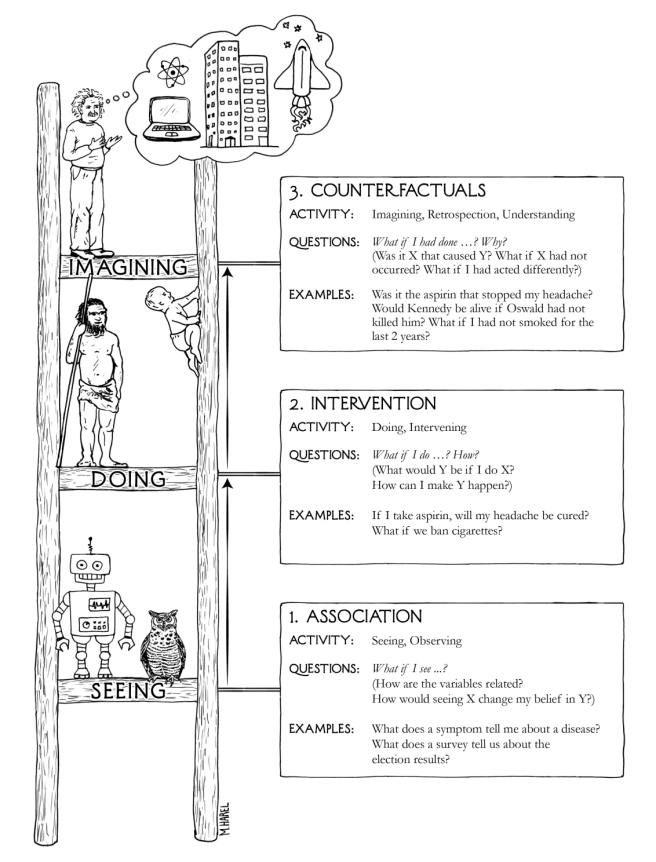

Causality has been nicely formalized by Pearl’s probabilistic graphical models. This is a simple extension of probability theory, out of which causality and counterfactuals naturally fall.

One can use this framework to represent the states of fundamental particles and how they change over time and interact with one another. What I’m confused about is that in some ways of looking at it, the causal relations appear to be useful but un-fundamental constructs for the sake of easing calculations. In other ways of looking at it, causal relations are necessarily built into the structure of the world, and we can go out and empirically discover them. I don’t know which is right. (Sorry for the vagueness in this one – it’s confusing enough to me that I have trouble even precisely phrasing the dilemma).

How should we deal with the apparent dependence of inductive reasoning upon our choices of concepts?

I’ve written about this here. Beyond just the problem of concept-dependence in our choices of priors, there’s also the problem presented by the grue/bleen thought experiment.

This thought experiment proposes two new concepts: grue (= the set of things that are either green before 2100 or blue after 2100) and bleen (the inverse of grue). It then shows that if we reasoned in terms of grue and bleen, standard induction would have us concluding that all emeralds will suddenly turn blue after 2100. (We repeatedly observed them being grue before 2100, so we should conclude that they will be grue after 2100.)
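Here is a toy sketch of the setup. It assumes "after 2100" includes the year 2100 itself, and the observation years are invented for illustration.

```python
CUTOFF_YEAR = 2100

def is_grue(color: str, year: int) -> bool:
    """Grue: green if observed before the cutoff, blue from the cutoff onward."""
    return color == "green" if year < CUTOFF_YEAR else color == "blue"

def color_required_to_be_grue(year: int) -> str:
    """The color something must have in `year` in order to count as grue."""
    return "green" if year < CUTOFF_YEAR else "blue"

# Hypothetical record: every emerald seen so far has been green.
observations = [("green", year) for year in range(1900, 2025)]

all_green = all(color == "green" for color, _ in observations)
all_grue = all(is_grue(color, year) for color, year in observations)

# "All emeralds are green" and "all emeralds are grue" fit the data equally
# well, but they make different predictions past the cutoff.
green_prediction_2101 = "green"
grue_prediction_2101 = color_required_to_be_grue(2101)

print(all_green, all_grue, green_prediction_2101, grue_prediction_2101)
```

Both generalizations are perfectly supported by every observation in the record, and they only come apart on data we don't have yet.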

In other words, choose the wrong concepts and induction breaks down. This is really disturbing – choices of concepts should be merely pragmatic matters! They shouldn’t function as fatal epistemic handicaps. And given that they appear to, we need to develop some criterion we can use to determine what concepts are good and what concepts are bad.

The trouble with this is that the only proposals I’ve seen for such a criterion reference the idea of concepts that “carve reality at its joints”; in other words, the world is composed of green and blue things, not grue and bleen things, so we should use the former rather than the latter. But this relies on the outcome of our inductive process to draw conclusions about the starting step on which this outcome depends!

I don’t know how to cash out “good choices of concepts” without ultimately reasoning circularly. I also don’t even know how to make sense of the idea of concepts being better or worse for more than merely pragmatic reasons.

How should we reason about self-defeating beliefs?

The classic self-defeating belief is “This statement is a lie.” If you believe it, then you are compelled to disbelieve it, eliminating the need to believe it in the first place. Broadly speaking, self-defeating beliefs are those that undermine the justifications for belief in them.

Here’s an example that might actually apply in the real world: Black holes glow. The process of emission is known as Hawking radiation. In principle, any configuration of particles with a mass less than that of the black hole can be emitted from it. Larger configurations are less likely to be emitted, but even configurations such as a human brain have a non-zero probability of being emitted. Henceforth, we will call such configurations black hole brains.

Now, imagine discovering some cosmological evidence that the era in which life can naturally arise on planets circling stars is finite, and that after this era there will be an infinite stretch of time during which all that exists are black holes and their radiation. In such a universe, the expected number of black hole brains produced is infinite (a tiny finite probability multiplied by an infinite stretch of time), while the expected number of “ordinary” brains produced is finite (assuming a finite spatial extent as well).

What this means is that discovering this cosmological evidence should give you an extremely strong boost in credence that you are a black hole brain. (Simply because most brains in your exact situation are black hole brains.) But most black hole brains have completely unreliable beliefs about their environment! They are produced by a stochastic process which cares nothing for producing brains with reliable beliefs. So if you believe that you are a black hole brain, then you should suddenly doubt all of your experiences and beliefs. In particular, you have no reason to think that the cosmological evidence you received was veridical at all!

I don’t know how to deal with this. It seems perfectly possible to find evidence for a scenario that suggests that we are black hole brains (I’d say that we have already found such evidence, multiple times). But then it seems we have no way to rationally respond to this evidence! In fact, if we do a naive application of Bayes’ theorem here, we find that the probability of receiving any evidence in support of black hole brains is 0!

So we have a few options. First, we could rule out any possible skeptical scenarios like black hole brains, as well as anything that could provide any amount of evidence for them (no matter how tiny). Or we could accept the possibility of such scenarios but face paralysis upon actually encountering evidence for them! Both of these seem clearly wrong, but I don’t know what else to do.

How should we reason about our own existence and indexical statements in general?

This is called anthropic reasoning. I haven’t written about it on this blog, but expect future posts on it.

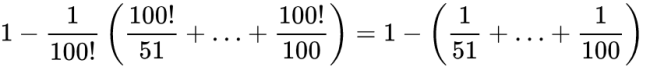

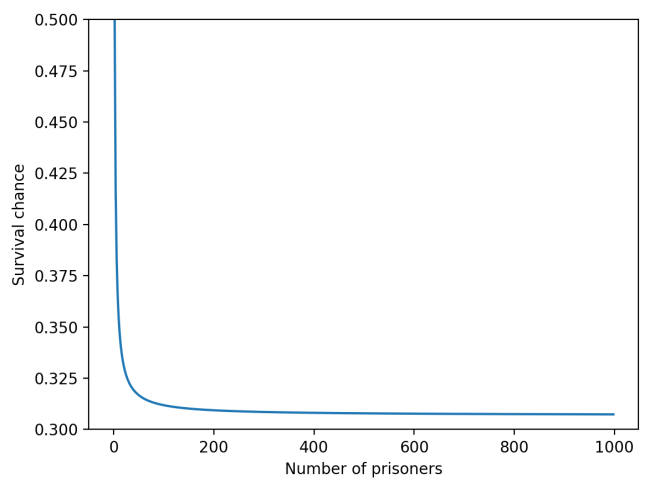

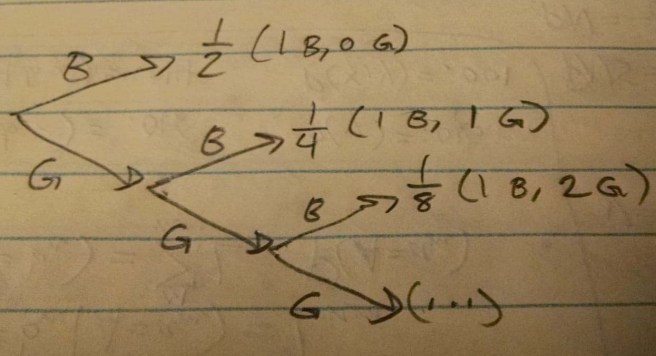

A thought experiment: imagine a murderous psychopath who has decided to go on an unusual rampage. He will start by abducting one random person. He rolls a pair of dice, and kills the person if they land snake eyes (1, 1). If not, he sets them free and hunts down ten new people. Once again, he rolls his pair of dice. If he gets snake eyes he kills all ten. Otherwise he frees them and kidnaps 100 new people. On and on until he eventually gets snake eyes, at which point his murder spree ends.

Now, you wake up and find that you have been abducted. You don’t know how many others have been abducted alongside you. The murderer is about to roll the dice. What is your chance of survival?

Your first thought might be that your chance of death is just the chance of both dice landing 1: 1/36. But think instead about the proportion of all people that are ever abducted by him that end up dying. This value ends up being roughly 90%! So once you condition upon the information that you have been captured, you end up being much more worried about your survival chance.
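The 90% figure can be checked with a few lines of arithmetic. This sketch assumes the spree does end at some finite round; the function name is my own.

```python
from fractions import Fraction

GROWTH = 10  # each new group is ten times larger than the last, as in the story

def fraction_killed(final_round: int) -> Fraction:
    """Share of all abductees who die if snake eyes first comes up in `final_round`.

    Round k (1-indexed) holds GROWTH**(k - 1) people, and only the final
    group is killed; everyone in earlier groups was set free.
    """
    killed = GROWTH ** (final_round - 1)
    abducted = sum(GROWTH ** (k - 1) for k in range(1, final_round + 1))
    return Fraction(killed, abducted)

per_roll_risk = Fraction(1, 36)  # chance that two fair dice both show 1

for n in (1, 2, 3, 6):
    print(n, fraction_killed(n), float(fraction_killed(n)))
print(float(per_roll_risk))
```

No matter which round the spree ends in, at least nine out of every ten people ever abducted are in the final, doomed group, while the chance of any single roll coming up snake eyes stays at 1/36.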

But at the same time, it seems really wrong to be watching the two dice tumble and internally thinking that there is a 90% chance that they land snake eyes. It’s as if you’re imagining that there’s some weird anthropic “force” pushing the dice towards snake eyes. There’s way more to say about this, but I’ll leave it for future posts.

Things I’ve become un-puzzled about

Newcomb’s problem – one box or two box?

To almost everyone, it is perfectly clear and obvious what should be done. The difficulty is that these people seem to divide almost evenly on the problem, with large numbers thinking that the opposing half is just being silly.

– Nozick, 1969

I’ve spent months and months being hopelessly puzzled about Newcomb’s problem. I now am convinced that there’s an unambiguous right answer, which is to take the one box. I wrote up a dialogue here explaining the justification for this choice.

In a few words, you should one-box because one-boxing makes it nearly certain that the simulation of you run by the predictor also one-boxed, thus making it nearly certain that you will get 1 million dollars. The dependence between your action and the simulation is not an ordinary causal dependence, nor even a spurious correlation – it is a logical dependence arising from the shared input-output structure. It is the same type of dependence that exists in the clone prisoner’s dilemma, where you can defect or cooperate with an individual you are assured is identical to you in every single way. When you take into account this logical dependence (also called subjunctive dependence), the answer is unambiguous: one-boxing is the way to go.
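A rough sketch of the calculation the one-boxer is making. It assumes the standard presentation of the problem, with $1,000 in the transparent box (a figure not stated above), and it treats the prediction as depending on your actual choice, which is precisely the step a two-boxer would dispute.

```python
from fractions import Fraction

BIG_PRIZE = 1_000_000  # contents of the opaque box if one-boxing was predicted
SMALL_PRIZE = 1_000    # assumed contents of the transparent box

def expected_payout(one_box: bool, accuracy: Fraction) -> Fraction:
    """Expected winnings when the predictor matches your choice with probability `accuracy`."""
    if one_box:
        # You get the big prize only if the predictor correctly foresaw one-boxing.
        return accuracy * BIG_PRIZE
    # You always get the small prize, plus the big one if the predictor got you wrong.
    return (1 - accuracy) * BIG_PRIZE + SMALL_PRIZE

accuracy = Fraction(99, 100)
print(expected_payout(True, accuracy), expected_payout(False, accuracy))

# Accuracy at which the two choices come out even.
break_even = Fraction(BIG_PRIZE + SMALL_PRIZE, 2 * BIG_PRIZE)
print(float(break_even))
```

With a 99% accurate predictor this gives 990,000 dollars for one-boxing against 11,000 for two-boxing, and one-boxing stays ahead for any accuracy above roughly 50.05%.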

Summing up:

Things I remain conceptually confused about:

- Consciousness

- Decision theory & free will

- Objective priors

- Infinities in decision theory

- Fundamentality of causality

- Dependence of induction on concept choice

- Self-defeating beliefs

- Anthropic reasoning