(Nothing original, besides potentially this specific way of framing the concepts. This post started off short and ended up wayyy too long, and I don’t have the proper level of executive control to make myself shorten it significantly. So sorry, you’re stuck with this!)

Noam Chomsky in a recent interview said about the Republican Party:

I mean, has there ever been an organization in human history that is dedicated, with such commitment, to the destruction of organized human life on Earth? Not that I’m aware of. Is the Republican organization – I hesitate to call it a party – committed to that? Overwhelmingly. There isn’t even any question about it.

And later in the same interview:

… extermination of the species is very much an – very much an open question. I don’t want to say it’s solely the impact of the Republican Party – obviously, that’s false – but they certainly are in the lead in openly advocating and working for destruction of the human species.

In Chomsky’s mind, members of the Republican Party apparently sit in dark rooms scheming about how best to destroy all that is good and sacred.

I just watched the most recent Star Wars movie, and was struck by a sense of some relationship between the sentiment being expressed by Chomsky here and a statement made by Supreme Leader Snoke:

The seed of the Jedi Order lives. As long as he does, hope lives within the galaxy. I thought you would be the one to snuff it out.

There’s a really easy pattern of thought to fall into, which is something like “When things go wrong, it’s because of evil people doing evil things.”

It’s a really tempting idea. It diagnoses our societal problems as a simple “good guys vs bad guys” story – easy to understand and to convince others of. And it comes with an automatic solution, one that is very intuitive, simple, and highly self-gratifying: “Get rid of the bad guys, and just let us good guys make all the decisions!”

I think that the prevalence of this sort of story in the entertainment industry gives us some sort of evidence of its memetic power as a go-to explanation for problems. Think about how intensely the movie industry is optimizing for densely packed megadoses of gratifying storylines, visual feasts, appealing characters, and all the rest. The degree to which two and a half hours can be packed with constant intense emotional stimulation is fairly astounding.

Given this competitive market for appealing stories, it makes sense that we’d expect to gain some level of insight into the types of memes that we are most vulnerable to by looking at those types of stories and plot devices that appear over and over again. And this meme in particular, the theme of “social problems are caused by evil people,” is astonishingly universal across entertainment.

***

That this meme is wrong is the first of two big insights that I’ve been internalizing more and more in the past year. These are:

- When stuff goes wrong, or the world seems like it’s stuck in shitty and totally repairable ways, evil people are not the only explanation. In fact, this is often the least helpful explanation.

- Talking about the “motives” of an institution can be extremely useful. These motives can overpower the motives of the individuals that make up that institution, making them more or less irrelevant. In this way, we can end up with a description of institutions with weird desires and inclinations that are totally distinct from those of the people that make them up, and yet the institutions are in charge of what actually happens in the world.

On the second insight first: this is a sense in which institutions can be very very powerful. It’s not just the sense of powerful that means “able to implement lots of large-scale policies and cause lots of big changes”. It’s more like “able to override the desires of individuals within your range of influence, manipulating and bending them to your will.”

I was talking to my sister and her fiancé, both law students, about the US judicial system, and Supreme Court justices in particular. I wanted to understand what it is that really constrains the decisions of these highest judicial authorities: what forces result in Justice Ginsburg writing the particular decision that she ends up writing?

What they ended up concluding is that there are essentially no such external forces.

Sure, there are ways in which Supreme Court justices can lose their jobs in principle, but this has never actually happened. And Congress can and sometimes does override Supreme Court decisions on statutory issues, but this doesn’t generally give the Justices any reason to write their decisions differently.

What guides Justice Ginsburg is what she believes is right – her ideology – and perhaps legacy. In other words, purely internal forces. I tried to think of other people in positions that allow a similar degree of power to enact social change with so few external constraints, and failed.

The first sense of power as “able to cause lots of things to happen” really doesn’t align with the second sense of “free from external constraints on your decision-making”. An autocratic ruler might be plenty powerful in terms of ability to decide economic policy or assassinate journalists or wage war on neighboring states, but is highly constrained in his decisions by a tight incentive structure around what allows him to keep doing these things.

On the other hand, a Supreme Court justice could have total power to do whatever she personally desires, but never do anything remarkable or make any significant long-term impact on society.

The fact that this is so rare – that we could only think of a single example of a position like this – tells us about the way that powerful institutions are able to warp and override the individual motivations of the humans that compose them.

The rest of this post is on the first insight, about the idea that social problems are often not caused by evil people. There are two general things to say about evil people:

- I think that it’s often the case that “evil people” is a very surface-level explanation, able to capture some aspects of reality and roughly get at the problem, but not touching anywhere near the roots of the issue. One example of this may be when you ask people what the cause of the 2007 financial crisis was, and they go on about greedy bankers destroying America with their insatiable thirst for wealth.

While they might be landing on some semblance of truth there, they are really missing a lot of important subtlety in terms of the incentive structures of financial institutions, and how they led the bankers to behave in the way that they did. They are also very naturally led to unproductive “solutions” to the problems – what do we do, ban greed? No more bankers? Chuck capitalism? (Viva la revolución?) If you try to explain things on the deeper level of the incentive structures that led to “greedy banker” behavior, then you stand a chance of actually understanding how to solve the root problem and prevent it from recurring.

- Appeals to “evil people” can only explain a small proportion of the actual problems that we actually see in the world. There are a massive number of ways in which groups of human beings, all good people not trying to cause destruction and chaos or extinguish the last lights of hope in the universe, can end up steering themselves into highly suboptimal and unfortunate states.

My main goal in this post is to try to taxonomize these different causes of civilizational failure.

Previously I gave a barebones taxonomy of some of the reasons that low-hanging policy fruits might be left unplucked. Here I want to give a more comprehensive list.

***

I think a useful way to frame these issues is in terms of Nash equilibria. The worst-case scenario is where there are Pareto improvements all around us, and yet none of these improvements correspond to worlds that are in a Nash equilibrium. These are cases where the prospect of improvement seems fairly hopeless without a significant restructuring of our institutions.

Slightly better scenarios are where we have improvements that do correspond to a world in a Nash equilibrium, but we just happen to be stuck in a worse Nash equilibrium. So to start with, we have:

- The better world is not in a Nash equilibrium

- The better world is in a Nash equilibrium

I think that failures of the first kind are very commonly made amongst bright-eyed idealists trying to imagine setting up their perfect societies.

These types of failures correspond to questions like “okay, so once you’ve set up your perfect world, how will you assure that it stays that way?” and can be spotted in plans that involve steps like “well, I’m just assuming that all the people in my world are kind enough to not follow their incentives down this obvious path to failure.”

Nash equilibria correspond to stable societal setups. Any societal setup that is not in a Nash equilibrium can be expected to degenerate fairly quickly into some actually stable societal setup.

The ways in which a given societal setup fails to be stable can be quite subtle and non-obvious, which I suspect is why this step is so often overlooked by reformers who think they see obvious ways to improve the world.

One of my favorite examples of this is the makeup problem. It starts with the following assumptions: (1) makeup makes people more attractive (which they want to be), and (2) an individual’s attractiveness is valued relative to the individuals around them.

Let’s now consider two societies, a makeup-free society and a makeup-ubiquitous society. In both societies, everybody’s relative attractiveness is the same, which means that nobody is better off or worse off in one society over another on the basis of their attractiveness.

But the society in which everybody wears makeup is worse for everybody, because everybody has to spend a little bit of their money buying makeup. In other words, the makeup-free world represents a Pareto improvement over the makeup-ubiquitous world.

What’s worse, the makeup-free world is not in a Nash equilibrium, and the makeup-ubiquitous society is!

We can see this by imagining a society that starts makeup-free, and looking at the incentives of an individual within that society. This individual only stands to gain by wearing makeup, because she becomes more attractive relative to everybody else. So she buys makeup. Everybody else reasons the same way, so the makeup-free society quickly degenerates into its equilibrium version, the makeup-ubiquitous society.

Sure, she can see that if everybody reasons this way, then she will end up worse off (she will have spent her money and gained nothing from it). But this reasoning does not help her. Why? Because regardless of what everybody else does, she is still better off wearing makeup.

If nobody wears makeup, then her relative attractiveness rises if she wears makeup. And if everybody else wears makeup, then her relative attractiveness rises if she wears makeup. It’s just that it’s rising from a lower starting point.

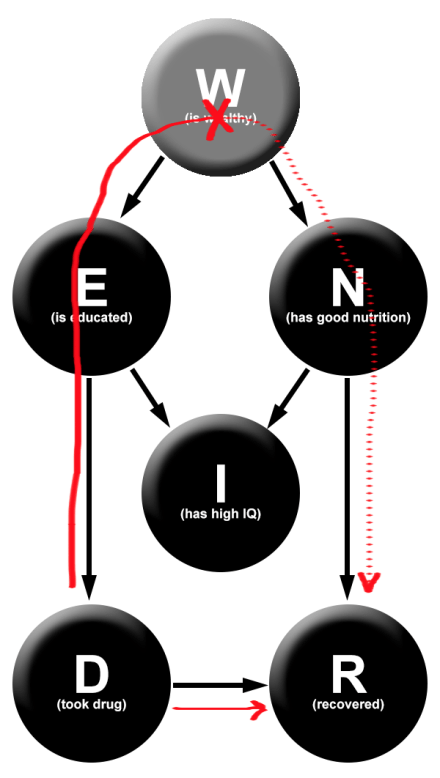

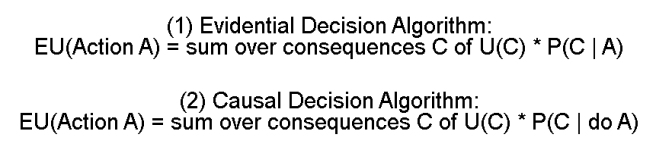

So no matter what society we start in, we end up in the suboptimal makeup-ubiquitous society. (I have to point out here that this is assuming a standard causal decision theory framework, which I think is wrong. Timeless decision theory will object to this line of reasoning, and will be able to maintain a makeup-free equilibrium.)
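The argument above can be checked mechanically. Here is a minimal sketch of a two-person version of the makeup game in Python. The numbers (`BOOST`, `COST`) and the payoff formula are my own illustrative assumptions, chosen only so that attractiveness is purely relative and makeup costs something.

```python
from itertools import product

BOOST = 2  # assumed attractiveness gain from wearing makeup
COST = 1   # assumed price of makeup

def payoff(me: int, other: int) -> int:
    """Relative attractiveness minus money spent on makeup (1 = wears makeup)."""
    return BOOST * (me - other) - COST * me

def is_nash(a: int, b: int) -> bool:
    """True if neither player gains by unilaterally switching her choice."""
    a_ok = payoff(a, b) >= payoff(1 - a, b)
    b_ok = payoff(b, a) >= payoff(1 - b, a)
    return a_ok and b_ok

profiles = list(product([0, 1], repeat=2))
nash = [p for p in profiles if is_nash(*p)]

# Wearing makeup is a dominant strategy: better whatever the other person does.
wearing_dominates = all(payoff(1, other) > payoff(0, other) for other in (0, 1))

# Yet both players are strictly better off if nobody wears makeup.
makeup_free_is_pareto_better = payoff(0, 0) > payoff(1, 1)

print(nash, wearing_dominates, makeup_free_is_pareto_better)
```

Under these assumed numbers, the only equilibrium is the one where both wear makeup, even though both would prefer the makeup-free outcome.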

We want to say “but just in this society assume that everybody is a good enough person to recognize the problem with makeup-wearing, and doesn’t do so!”

But that’s missing the entire point of civilization building – dealing with the fact that we will end up leaving non-Nash-equilibrium societal setups and degenerating in unexpected ways.

This failure mode arises because of the nature of positional goods, which are exactly what they sound like. In our example, attractiveness is a positional good, because your attractiveness is determined by looking at your position with respect to all other individuals (and yes this is a bit contrived and no I don’t think that attractiveness is purely positional, though I think that this is in part an actual problem).

To some degree, money is also a positional good. If all prices and incomes fell by the same proportion tomorrow, then everybody would end up with the same purchasing power as they had yesterday. And if everybody got an extra dollar to spend tomorrow, then prices would rise in response, the value of their money would decrease, and nobody would be better off (there are a lot of subtleties that make this not actually totally true, but let’s set that aside for the sake of simplicity).

Positional goods are just one example where we can naturally end up with our desired societies not being Nash equilibria.

The more general situation is just bad incentive structures, whereby individuals are incentivized to defect against a benevolent order, and society tosses and turns and settles at the nearest Nash equilibrium.

- The better world is not a Nash equilibrium
  - Positional goods
  - Bad incentive structures
- The better world is a Nash equilibrium

***

If the better world is in a Nash equilibrium, then we can actually imagine this world coming into being and not crumbling into a degenerate cousin-world. If a magical omniscient society-optimizing God stepped in and rearranged things, then they would likely stay that way, and we’d end up with a stable and happier world.

But there are a lot of reasons why all of us that are not magical society-optimizing Gods can do very little to make the changes that we desire. Said differently, there are many ways in which current Nash equilibria can do a great job of keeping us stuck in the existing system.

Three basic types of problems are (1) where the decision makers are not incentivized to implement this policy, (2) where valuable information fails to reach decision makers, and (3) where decision makers do have the right incentives and information, but fail because of coordination problems.

- The better world is not a Nash equilibrium
  - Positional goods
  - Bad incentive structures
- The better world is a Nash equilibrium
  - You can’t reach it because you’re stuck in a lesser Nash equilibrium.
    - Lack of incentives in decision makers
    - Asymmetric information
    - Coordination problems

Lack of incentives in decision makers can take many forms. The most famous of these occurs when policies result in externalities. This is essentially just where decision-makers do not absorb some of the consequences of a policy.

Negative externalities help to explain why behaviors that are net negative to society exist and continue (resulting in things like climate change and overfishing, for example), and positive externalities help to explain why some behaviors that would be net positive for society are not happening.
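To make the negative-externality case concrete, here is a small Python sketch. Everything in it is hypothetical: a decision-maker picks an output level `q`, keeps the private payoff, and passes a per-unit damage on to everybody else. The functional forms and numbers are assumptions chosen for easy arithmetic.

```python
def private_payoff(q: int) -> int:
    """What the decision-maker keeps: assumed revenue 10*q minus own cost q**2."""
    return 10 * q - q ** 2

def external_cost(q: int) -> int:
    """Assumed damage of 4 per unit, borne by everyone except the decision-maker."""
    return 4 * q

def social_payoff(q: int) -> int:
    """Net effect on society as a whole, decision-maker included."""
    return private_payoff(q) - external_cost(q)

options = range(0, 11)
private_choice = max(options, key=private_payoff)  # ignores the external cost
social_optimum = max(options, key=social_payoff)   # counts the external cost

print(private_choice, social_optimum)
print(social_payoff(private_choice), social_payoff(social_optimum))
```

With these numbers the decision-maker chooses more output than is best for society, because the damage never shows up in the payoff that actually drives the decision.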

An even worse case of misalignment of incentives would be where the positive consequences on society would be negative consequences on decision-makers, or vice-versa. Our first-past-the-post voting system might be an example of this – abandoning FPTP would be great exactly because it allows us to remove the current set of decision-makers and replace them with a better set. This would be great for us, but not so great for them.

I’m not aware of a name for this class of scenarios, and will just call it ‘perverse incentives.’

I think that this is also where the traditional concept of “evil people” would lie – evil people are those whose incentives are dramatically misaligned. This could mean that they are apathetic towards societal improvements, but typically fiction’s common conception of villains is individuals actively trying to harm society.

Lack of liquidity is another potential source of absent incentives. This is where there are plenty of individuals that do have the right incentives, but there is not enough freedom for them to actually make significant changes.

An example of this could be if a bunch of individuals all had the same idea for a fantastic new app that would perform some missing social function, and all know how to make the app, but are barred by burdensome costs of actually entering the market and getting the app out there.

The app will not get developed and society will be worse off, as a result of the difficulty in converting good app ideas to cash.

- Lack of incentives in decision makers
  - Misalignment of incentives
    - Externalities
    - Perverse incentives
  - Lack of liquidity

***

Asymmetric information is a well-known phenomenon that can lead societies into ruts. The classic example of this is the lemons problem. There are versions of asymmetric information problems in the insurance market, the housing market, the health care market and the charity market.

This deserves its own category because asymmetric information can bar progress, even when decision-makers have good incentives and important good policy ideas are out there.
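A rough sketch of how the lemons problem unravels a market, in Python. The setup is a toy of my own, not a faithful model of any real market: ten sellers each know what their car is worth to them, buyers would value any car at 1.5 times that, but buyers cannot observe quality and so only pay for the average car on offer.

```python
BUYER_PREMIUM = 1.5  # assumption: buyers value a car at 1.5x its seller's valuation

def surviving_market(qualities):
    """Repeatedly drop sellers whose car is worth more to them than the going price."""
    on_market = sorted(qualities)
    while on_market:
        # Buyers cannot see quality, so they pay for the average car on offer.
        price = BUYER_PREMIUM * sum(on_market) / len(on_market)
        staying = [q for q in on_market if q <= price]
        if staying == on_market:
            return on_market, price
        on_market = staying
    return [], 0.0

cars = list(range(1, 11))  # hypothetical seller valuations, in thousands of dollars
traded, price = surviving_market(cars)
print(traded, price)
```

Every one of the ten cars is worth more to a buyer than to its seller, so in a world with full information all ten would be sold. In this sketch only the three worst cars end up traded, and nobody involved has to be behaving badly for that to happen.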

- Lack of incentives in decision makers
  - Misalignment of incentives
    - Externalities
    - Perverse incentives
  - Lack of liquidity
- Asymmetric information

And of course, there are coordination problems. The makeup example given earlier is an example of a coordination problem – if everybody could successfully coordinate and avoid the temptation of makeup, then they’d all end up better off. But since each individual is incentivized to defect, the coordination attempts will break down.

Coordination problems generally occur when you have multi-step or multi-factor decision processes, that is, when the decision cannot be made unilaterally by a single individual, and must be made as a cooperative effort between groups of individuals operating under different incentive structures.

A nice clear example of this comes from Eliezer Yudkowsky, who imagines a hypothetical new site called Danslist, designed to be a competitor to Craigslist.

Danslist is better than Craigslist in every way, and everybody would prefer that it was the site in use. The problem is that Craigslist is older, so everybody is already on that site.

Buyers will only switch to Danslist if there are enough sellers there, and sellers will only switch to Danslist if there are enough buyers there. This makes the decision to switch to Danslist a decision that is dependent on two factors, the buyers and the sellers.

In particular, an N-factor market is one where there are N different incentive structures that must interact for action to occur. In N-factor markets, the larger N is, the more difficult it is to make good decisions happen.

This is really important, because when markets are stuck in this way, inefficiencies arise and people can profit off of the sub-optimality of the situation.

So Craigslist can charge more than Danslist, while offering a worse service, as long as this doesn’t provide sufficient incentive for enough people to switch over.
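The Danslist situation can be sketched as a tiny two-sided game in Python. This is my own simplification, not Yudkowsky’s model: one representative buyer and one representative seller each pick a site, a site is only useful if the other side is there too, and the `QUALITY` numbers are made up.

```python
from itertools import product

SITES = ("craigslist", "danslist")
QUALITY = {"craigslist": 1, "danslist": 2}  # assumed: Danslist is simply better

def payoff(my_site: str, their_site: str) -> int:
    """A site is only worth anything if the other side of the market is on it too."""
    return QUALITY[my_site] if my_site == their_site else 0

def is_nash(buyer: str, seller: str) -> bool:
    """True if neither the buyer nor the seller gains by switching sites alone."""
    buyer_ok = all(payoff(buyer, seller) >= payoff(alt, seller) for alt in SITES)
    seller_ok = all(payoff(seller, buyer) >= payoff(alt, buyer) for alt in SITES)
    return buyer_ok and seller_ok

equilibria = [p for p in product(SITES, repeat=2) if is_nash(*p)]
print(equilibria)
```

Both “everyone on Craigslist” and “everyone on Danslist” come out as equilibria. The second is better for everybody, but nobody can get there by moving alone, which is exactly the slack that lets the incumbent charge more for less.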

Yudkowsky also talks about Elsevier as an instance of this. Elsevier is a profiteer that captured several large and prestigious scientific journals and jacked up subscription prices. While researchers, universities, and readers could in principle just unanimously switch their publication patterns to non-Elsevier journals, this involves solving a fairly tough coordination problem. (It has happened a few times.)

One solution to coordination problems is an ability to credibly pre-commit. So if everybody in the makeup-ubiquitous world were able to sign a magical agreement that truly and completely credibly bound their future actions in a way that they couldn’t defect from, then they could end up in a better world.

When individuals cannot credibly pre-commit, then this naturally results in coordination problems.
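One way to picture a credible pre-commitment is as a penalty that reliably lands on anyone who defects. The Python sketch below uses a hypothetical two-person makeup game with made-up numbers (`BOOST`, `COST`) and a made-up `penalty` parameter standing in for the binding agreement.

```python
BOOST, COST = 2, 1  # hypothetical: attractiveness gain from makeup, and its price

def payoff(me: int, other: int, penalty: int = 0) -> int:
    """Relative attractiveness, minus makeup cost, minus the penalty for breaking the pact."""
    return BOOST * (me - other) - COST * me - penalty * me

def makeup_free_is_stable(penalty: int) -> bool:
    """True if nobody gains by being the only one to wear makeup."""
    return payoff(0, 0, penalty) >= payoff(1, 0, penalty)

for penalty in (0, 1, 2):
    print(penalty, makeup_free_is_stable(penalty))
```

In this toy version the makeup-free world becomes stable once the penalty is at least `BOOST - COST`, the amount a lone defector would otherwise gain. Without some enforceable penalty of that size, the agreement is just talk.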

And finally, there are other weird reasons that are harder to categorize for why we end up stuck in bad Nash equilibria.

For instance, a system in which politicians respond to the wills of voters and are genuinely accountable to them seems like a system with a nicely aligned incentive structure.

But if for some reason, the majority of the public resists policies that will actually improve their lives, or pushes policies that will hurt them, then this system will still end up in a failure mode. Perhaps this failure mode is not best expressed as a Nash equilibrium, as there is a sense in which voters do have the incentive to switch to a more sensible view, but I will express it as such regardless.

This looks to me like what is happening with popular opinion about minimum wage laws.

Huge numbers of people support minimum wage laws, including those that may actually lose their jobs as the result of those laws. While I’m aware that there isn’t a strong consensus among economists as to the real effects of a moderate minimum-wage increase, it is striking to me that so many people are so convinced that it can only be net positive for them, when there is plenty of evidence that it may not be.

Another instance of this is the idea of “wage stickiness”.

This is the idea that employers are more likely to fire their workers than to lower their wages, resulting in an artificial “stickiness” to the current wages. The proposed reason for why this is so is that worker morale is hurt more by decreased wages than by coworkers being fired.

Sticky wages are especially bad when you take into account inflation effects. If an economy has an inflation rate of 10%, then an employer that keeps her employees’ wages constant is in effect cutting their real wages by roughly 10% (about 9.1%, to be precise). Even if she raises their wages by 5%, they’re still losing purchasing power!

And if the economy enters a recession, with say an inflation rate of -5%, then an employer will have to cut wages by 5% in order to stay at the market equilibrium. But since wages are sticky and her workers won’t realize that they are actually not losing any money despite the wage cut, she will be more likely to fire workers instead.
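The arithmetic behind these examples is short enough to write out. A minimal Python sketch, where the function name `real_wage_change` is my own and the scenarios are just the ones mentioned above:

```python
def real_wage_change(nominal_raise: float, inflation: float) -> float:
    """Fractional change in purchasing power, given a nominal raise and an inflation rate."""
    return (1 + nominal_raise) / (1 + inflation) - 1

scenarios = [
    (0.00, 0.10),    # wages frozen during 10% inflation
    (0.05, 0.10),    # 5% raise during 10% inflation
    (-0.05, -0.05),  # 5% pay cut during 5% deflation
]
for nominal_raise, inflation in scenarios:
    change = real_wage_change(nominal_raise, inflation)
    print(f"raise {nominal_raise:+.0%}, inflation {inflation:+.0%}: real change {change:+.1%}")
```

The frozen wage loses about 9.1% of its purchasing power, the 5% raise still loses about 4.5%, and the 5% pay cut under 5% deflation leaves purchasing power unchanged. The last case is the one that feels like a loss to workers even though it isn’t.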

A friend described to me an interaction he had had with a coworker at a manufacturing plant. My friend had been recently hired in the same position as this man, and was receiving the minimum wage at 5 dollars an hour.

His coworker was telling him about how he was being paid so much, because he had been working there so many years and was constantly getting pay raises. He was mortified when he compared wages with my friend, and found that they were receiving the exact same amount.

Status quo bias is another important effect to keep in mind here. Individuals are likely to favor the current status quo, for no reason besides that it is the status quo. This type of effect can add to political inertia and further entrench society in a suboptimal Nash equilibrium.

I’ll just lump all of these effects in as “Stupidity & cognitive biases.”

***

I want to close by adding a third category that I’ve been starting to suspect is more important than I previously realized. This is:

- The better world is in a Nash equilibrium, and you can reach it, and you will reach it, just WAIT a little bit.

I add this because I sometimes forget that society is a massive complicated beast with enormous inertia behind its existing structure, and that just because some favored policy of yours has not yet been fully implemented everywhere, this does not mean that there is a deep underlying unsolvable problem.

So, for instance, one time I puzzled for a couple weeks about why, given the apparently low cost of ending global poverty forever, it still exists.

Aren’t there enough politicians that are aware of the low cost? And aren’t they sufficiently motivated to pick up the windfall of public support and goodwill that they would surely get? (To say nothing of massively improving the world)

Then I watched Hans Rosling’s 2013 lecture “Don’t Panic” (which, by the way, should be required watching for everyone) and realized that global poverty is actually being ended, just slowly and gradually.

The UN set a goal in 2000 to cut extreme poverty in half by 2015. It hit that target five years ahead of schedule, and the follow-up goal is to end extreme poverty entirely by 2030.

We’re on course to see the end of extreme poverty; it’ll just take a few more years. And after all, it should be expected that raising an entire segment of the world’s population above the poverty line will take some time.

So in this case, the answer to my question of “Why is this problem not being solved, if solutions exist?” was actually “Um, it is being solved, you’re just impatient.”

And earlier I wrote about overfishing and the ridiculously obvious solutions to the problem. I concluded by pessimistically noting that the fishing lobby has a significant influence over policy makers, which is why the problem cannot be solved.

While the premise of this is true, it is in fact the case that ITQ policies are being adopted in more and more fisheries, the Northwest Atlantic cod fisheries are being revived as a result of marine protection policies, and governments are making real improvements along this front.

This is a nice optimistic note to end on – the idea that not everything is a horrible unsolvable trap and that we can and do make real progress.

***

So we have:

- The better world is not a Nash equilibrium
  - Positional goods
  - Bad incentive structures
- The better world is a Nash equilibrium
  - You can’t reach it because you’re stuck in a lesser Nash equilibrium.
    - Lack of incentives in decision makers
      - Misalignment of incentives
        - Externalities
        - Perverse incentives
      - Lack of liquidity
    - Asymmetric information
    - Coordination problems
      - Multi-factor markets
      - Multi-step decision processes
      - Inability to pre-commit
    - Stupidity & cognitive biases
  - You can and will reach it, just be patient.

I don’t think that this overall layout is perfect, or completely encompasses all failure modes of society. But I suspect that it is along the right lines of how to think about these issues. I’ve had conversations where people will say things like “Society would be better if we just got rid of all money” or “If somebody could just remove all those darned Republicans from power, imagine how much everything would improve” or “If I were elected dictator-for-life, I could fix all the world’s problems.”

I think that people that think this way are often really missing the point. It’s dead easy to look at the world’s problems, find somebody or something to point at and blame, and proclaim that removing them will fix everything. But the majority of the work you need to do to actually improve society involves answering really hard questions like “Am I sure that I haven’t overlooked some way in which my proposed policy degenerates into a suboptimal Nash equilibrium? What types of incentive structures naturally arise if I modify society in this way? How could somebody actually make this societal change from within the current system?”

That’s really the goal of this taxonomy: to try to give a sense of what the right questions to be asking are.

(More & better reading along these same lines here and here.)