In classical logic there are exactly two truth values, True and False, and every sentence has exactly one of these truth values. Consider the Liar sentence: “This sentence is false.” If this sentence is True, then it must be False. And if it’s False, then it must not be False. If we apply classical logic to this sentence, then it must have one of the two truth values. But whichever way we go, we find ourselves in trouble. And so the Liar sentence glitches out classical logic and produces an error.

But not so fast. For the Liar sentence to glitch out classical logic, it must first be the case that the sentence can actually be produced in classical logic. We started with an English sentence, “This sentence is false” (which, when there are only two truth values, says the same thing as “This sentence is not true”), and reasoned intuitively about what classical logic would do with it. But we must be able to form this sentence in classical logic in order for classical logic to have anything to say about it.

There are two major hurdles with importing “This sentence is not true” into classical logic: translating “this sentence” and translating “is not true”. The first requires us to be able to have self-referential sentences. It’s not obvious how to accomplish this with the machinery of classical logic. The second requires us to have a truth predicate. This seems prima facie easier to do, but it turns out to have its own host of issues.

Let’s deal with the second issue first: how do we make sense of “is not true”? We’ll start by looking at “is true” (once we have this, then we can just negate it to get “is not true”). So, how do we make sense of “is true”?

When we say “X is true”, we aren’t assigning equality between an object X and an object True. We’re instead attributing the property of truthiness to the sentence X. So “is true” seems to act kind of like a predicate in first-order logic. But there’s a problem. Predicates apply to objects in the domain of a model, not to sentences in the language itself. For instance, the predicate “is red” might apply to firetrucks and Clifford, as objects in the universe, but it’s not going to apply to sentences in the language.

With this in mind, it appears that “is true” is actually more like a modal operator. It applies to sentences, not objects. We could introduce a modal operator T such that TX is interpreted as “X is true”, and add an axiom schema that says for every sentence φ, φ ↔ Tφ. The problem with this is that we will never get self-reference with this approach. We want to create a sentence P that says “P is not true”. We could try something like P ↔ ¬TP, but given the schema (which tells us P ↔ TP) this is just a plainly false sentence, not a paradoxical one. What’s missing is that the sentence “P ↔ ¬TP” is not the same sentence as P: they’re just different sentences.
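To see why P ↔ ¬TP is merely false rather than paradoxical, here is a small truth-table check in Python. It is only a sketch under a simplifying assumption: P and TP are treated as two independent propositional atoms, and the schema instance P ↔ TP is imposed as a constraint on the rows we keep. The names `iff`, `models` and `values` are made up for this illustration.

```python
from itertools import product

def iff(a: bool, b: bool) -> bool:
    """Classical biconditional."""
    return a == b

# Keep only the truth assignments (P, TP) that satisfy the schema instance P <-> TP.
models = [(p, tp) for p, tp in product([False, True], repeat=2) if iff(p, tp)]

# Evaluate the candidate "liar" sentence P <-> not TP in each remaining assignment.
values = [iff(p, not tp) for p, tp in models]

print(models)
print(values)
```

Every assignment that respects the schema makes P ↔ ¬TP false. Nothing breaks: the sentence gets a perfectly ordinary truth value, which is why this route never produces a paradox.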

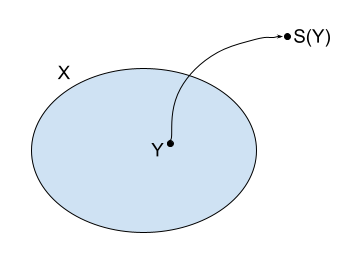

So the modal approach to interpreting “is true” has failed for our purposes. It’s simply not subtle enough to allow us to express self-reference. So let’s return to the predicate approach. The problem was that predicates apply to objects, not sentences. But what if the sentences were themselves objects? Of course, the sentences cannot literally be objects: they are purely syntactical items, whereas objects exist in the semantics (the interpretation of the language). But each sentence could have some sort of representative object in the domain.

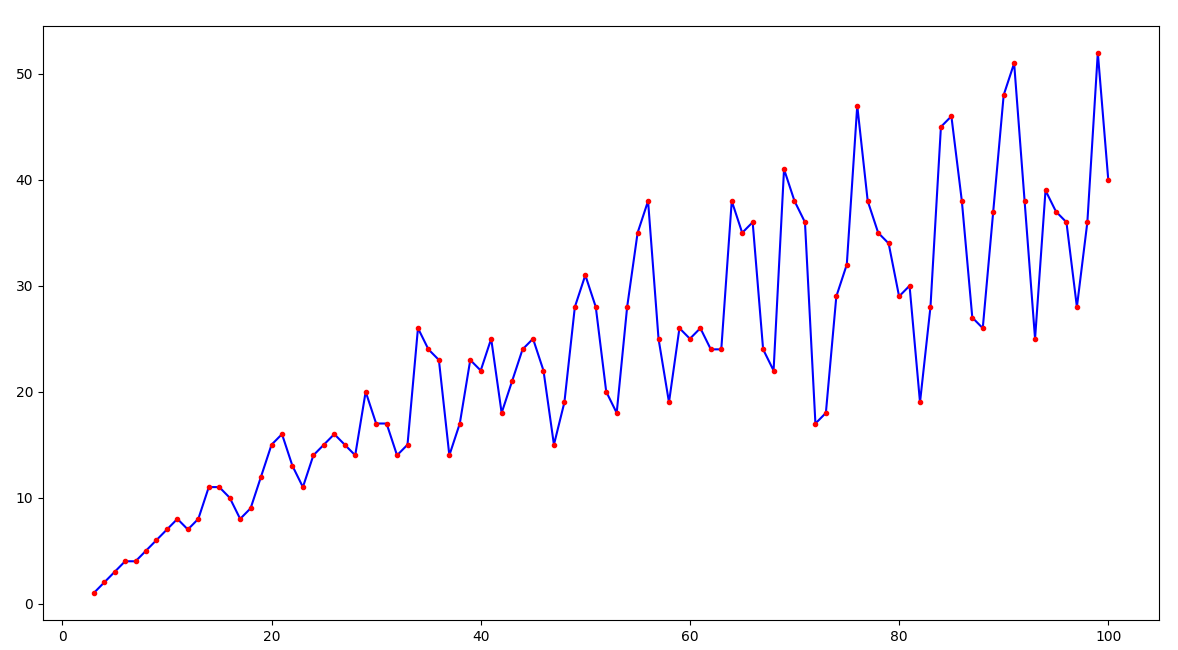

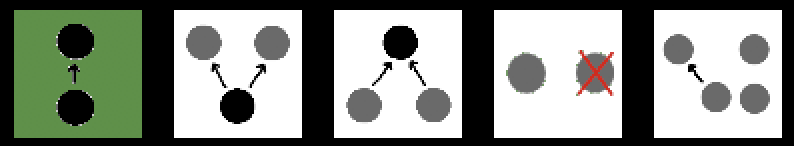

What Gödel showed is that this is indeed possible. He designed a coding technique such that every sentence in the language gets assigned a particular natural number, and no two sentences have the same number. And if sentences correspond to numbers, then properties of those sentences can be translated into properties of those numbers!
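Here is a minimal sketch of one such coding, in Python. It uses the prime-power idea (the k-th symbol of a sentence becomes the exponent of the k-th prime), but the particular alphabet `SYMBOLS` and the symbol codes are arbitrary choices made for this illustration, not Gödel’s original assignment.

```python
# A toy alphabet; each symbol gets a positive integer code (assumed for illustration).
SYMBOLS = ["0", "S", "+", "*", "=", "(", ")", "¬", "→", "∀", "x", "'"]
CODE = {symbol: i + 1 for i, symbol in enumerate(SYMBOLS)}

def primes():
    """Yield 2, 3, 5, 7, ... by trial division (slow, but fine for a demo)."""
    found = []
    n = 2
    while True:
        if all(n % p for p in found):
            found.append(n)
            yield n
        n += 1

def encode(sentence: str) -> int:
    """Map a string of symbols to the product of p_k ** code(k-th symbol)."""
    number = 1
    for p, symbol in zip(primes(), sentence):
        number *= p ** CODE[symbol]
    return number

def decode(number: int) -> str:
    """Recover the string by reading off the exponents of successive primes."""
    out = []
    for p in primes():
        if number == 1:
            break
        exponent = 0
        while number % p == 0:
            number //= p
            exponent += 1
        if exponent == 0:
            raise ValueError("not the code of any string")
        out.append(SYMBOLS[exponent - 1])
    return "".join(out)

print(encode("0=0"))   # 2**1 * 3**5 * 5**1
print(decode(encode("0=S0")))
```

Because prime factorizations are unique, two different strings can never receive the same number, and `decode` can always undo `encode`.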

Now if our language is sufficiently expressive to talk about natural number arithmetic, then our sentences can express properties of other sentences! In other words, we want a theory that has ℕ as a model. And we also want it to be sufficiently expressive to be able to talk about properties of numbers, like “being prime” or “being twice-divisible by 7”. Then we can imagine a predicate True(x), such that True(x) is True if and only if the sentence encoded by the number x is True.

For notational convenience, we’ll write “the number that encodes the sentence P” as ⟦P⟧. Then what we want of our truth predicate is that for every sentence φ, True(⟦φ⟧) ↔ φ.

Now, returning to the Liar sentence, we’ve dealt with “is true”, but now have to deal with “this sentence”. Remember that we want a sentence φ that asserts that the truth predicate does not apply to itself. In other words, we want φ to be the same thing as ¬True(⟦φ⟧). But how can this be? Clearly these are two different sentences, no?

Well, it’s not so obvious that φ and ¬True(⟦φ⟧) are actually distinct sentences. Remember that ⟦φ⟧ is just some number. So the sentence φ might be ¬True(9129828475651384). This is only a genuine liar sentence if 9129828475651384 encodes the sentence φ.

So really what we need to do is to look for some natural number n such that the sentence encoded by n is exactly “¬True(n)”. This would be a sentence which if true must be false, and if false must be true. It’s not at all obvious that such a natural number exists. But in 1934, Carnap proved the diagonal lemma, the tool necessary to construct such a number.

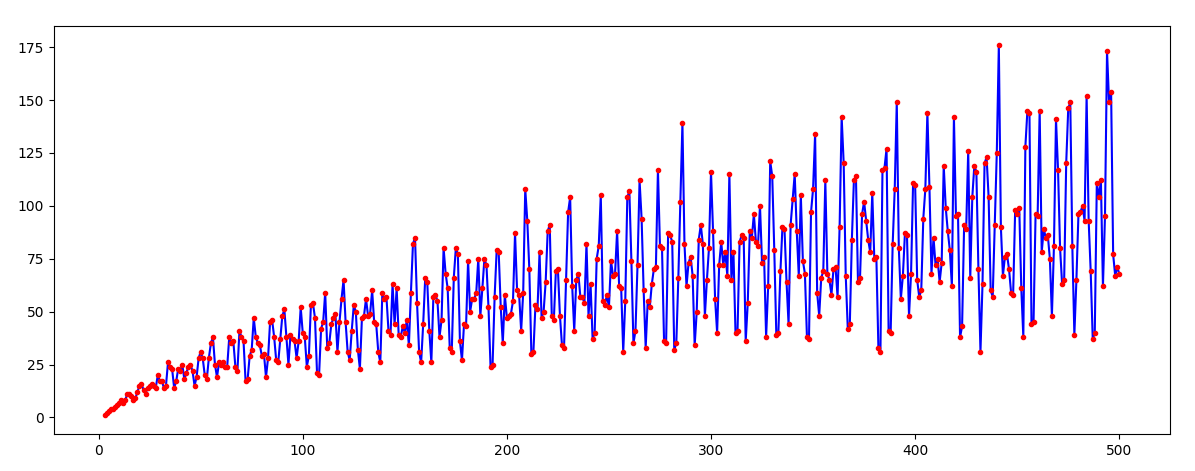

The diagonal lemma says that in any theory that can express natural number arithmetic (specifically, a theory that can define all primitive recursive functions), for every predicate P(x) with one free variable there’s a sentence ψ such that ψ ↔ P(⟦ψ⟧) is provable. Take P(x) to be ¬True(x), and we get that there’s a sentence ψ such that ψ ↔ ¬True(⟦ψ⟧) is provable!
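The trick behind the lemma can be imitated with strings instead of numbers. The sketch below is a toy analogue, not the arithmetic construction itself: quotation (Python’s `repr`) plays the role of ⟦·⟧, and the hypothetical helper `diag` substitutes a template’s own quotation into its placeholder. The names `diag`, `fixed_point`, `referent` and `NotTrue` are invented for this illustration, and the predicate name is assumed not to contain the placeholder character.

```python
PLACEHOLDER = "X"

def diag(template: str) -> str:
    """Replace the placeholder in `template` with the quotation of `template` itself."""
    return template.replace(PLACEHOLDER, repr(template))

def fixed_point(predicate_name: str) -> str:
    """Build a sentence of the form predicate(t), where the term t denotes that very sentence."""
    template = f"{predicate_name}(diag({PLACEHOLDER}))"
    return diag(template)

def referent(sentence: str, predicate_name: str) -> str:
    """Evaluate the term inside predicate(...) to find out which string it denotes."""
    term = sentence[len(predicate_name) + 1 : -1]
    return eval(term, {"diag": diag})  # safe here: we built the string ourselves

psi = fixed_point("NotTrue")
print(psi)
print(referent(psi, "NotTrue") == psi)
```

The sentence `psi` never mentions itself by name. It applies `NotTrue` to a term that, once computed, turns out to be `psi`. The diagonal lemma does the same thing inside arithmetic, with Gödel numbers in place of quoted strings.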

In other words, there’s a sentence ψ encoded by a number n, such that ψ is true if and only if ¬True(n). But what we wanted of our truth predicate was that True(n) holds if and only if ψ does, so ψ holds if and only if it doesn’t. This is exactly the liar paradox! We’ve succeeded at sneaking a contradiction into classical logic! So what does this mean? Is classical logic inconsistent after all? Do we need to rebuild logic from the ground up?

Not quite! Notice that to actually get to the point where we could express the Liar sentence, we had to take on a lot of assumptions. Let’s list them all out:

1. Our language allows us to express natural number arithmetic.

2. Our theory of natural numbers is strong enough to allow Gödel coding.

3. Our theory of natural numbers is strong enough to express every primitive recursive function.

4. There is a truth predicate.

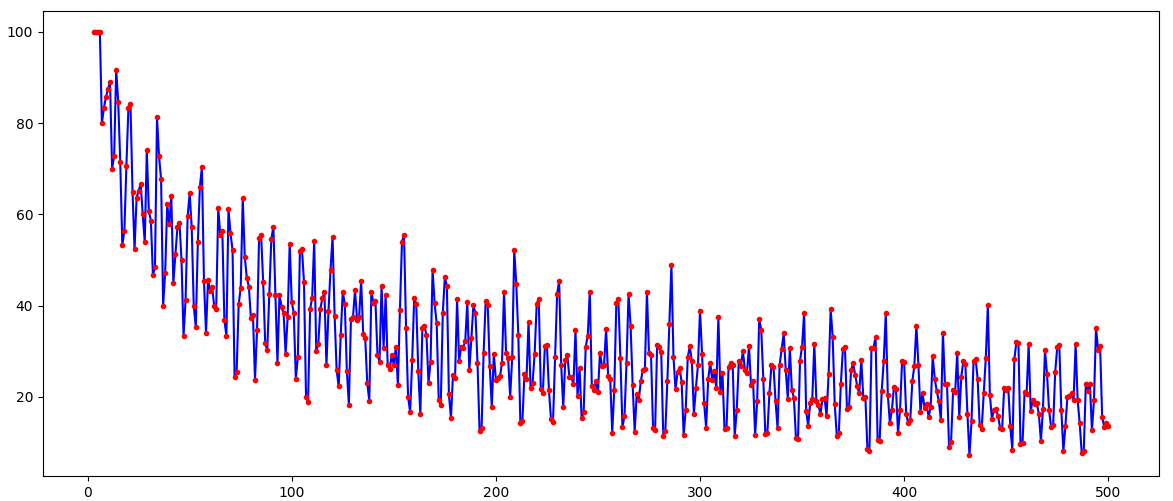

From these assumptions, we were able to prove an inconsistency. But this doesn’t mean that classical logic is therefore inconsistent! Rather, it means that any consistent theory has to violate at least one of these assumptions! In particular, if we have a consistent theory that allows us to do both Gödel coding and to express primitive recursive functions, then this theory cannot have a truth predicate!
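The final step of that argument is pure propositional logic, and it can be checked mechanically. Below is a short Lean 4 sketch. It makes a simplifying assumption: `psi` and `T_psi` are opaque propositions standing in for ψ and True(⟦ψ⟧), and the two hypotheses are the relevant instance of the truth schema and the output of the diagonal lemma. All the names are invented for this illustration.

```lean
-- tschema : the truth predicate behaves correctly on ψ
-- diag    : the sentence handed to us by the diagonal lemma
example (psi T_psi : Prop)
    (tschema : T_psi ↔ psi)
    (diag : psi ↔ ¬ T_psi) : False := by
  -- If ψ held, then True(⟦ψ⟧) would both fail (by diag) and hold (by tschema).
  have not_psi : ¬ psi := fun h => (diag.mp h) (tschema.mpr h)
  -- So ψ fails, hence True(⟦ψ⟧) fails, hence ψ holds by diag: contradiction.
  exact not_psi (diag.mpr (fun ht => not_psi (tschema.mp ht)))
```

Neither hypothesis is contradictory on its own. The inconsistency only appears when a theory supplies both at once.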

It’s important to understand that (4) here really is an assumption. When I described a truth predicate, I only said that we can imagine such a predicate. I never showed you how to explicitly construct it! We could always explicitly add in a truth predicate T to a theory of arithmetic, and then assert as axioms φ ↔ T(⟦φ⟧) for every sentence φ. And the above line of reasoning shows us that doing so will render our theory inconsistent. If we don’t explicitly add in a truth predicate, then we could try to construct it from the primitive relation and function symbols of the language. But the above line of reasoning shows us that no matter how hard we try, we will never succeed in this construction!

It’s interesting to note that (2) and (3) are actually different assumptions. (3) implies (2), but (2) doesn’t imply (3). In other words, you can have very weak theories of arithmetic that are expressive enough to do Gödel coding, but not expressive enough to prove the diagonal lemma! The amazing feature of some such theories is that they can prove their own consistency without becoming inconsistent!

Finally, notice that the diagonal lemma was quite a bit more powerful than what we strictly required for our reasoning above. In particular, it allowed us to talk about ANY predicate whatsoever, not just a truth predicate. Consider what happens when instead of using “is true” as our predicate, we use “is provable”. You might get a somewhat interesting result!