This post is about something that I’ve been feeling confused about recently.

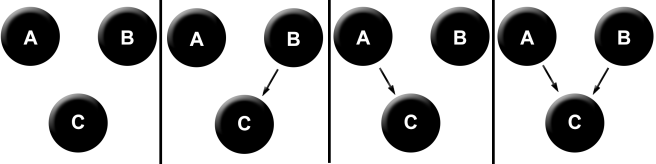

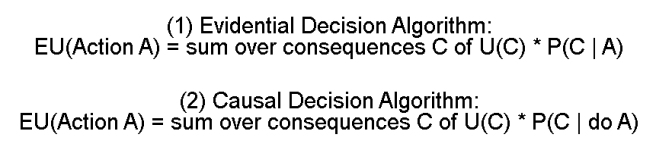

In a previous post, I described different decision theories as different algorithms for calculating expected utility. So for instance, the difference between an evidential decision theorist and a causal decision theorist can be expressed in the following way:
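One standard way to write the two rules is sketched here. The notation is an assumption for illustration, not copied from the earlier post: $A$ is a candidate action, $o$ ranges over outcomes, and $U$ is the agent's utility function.

$$
EU_{\mathrm{EDT}}(A) = \sum_{o} P(o \mid A)\, U(o), \qquad EU_{\mathrm{CDT}}(A) = \sum_{o} P(o \mid \mathrm{do}(A))\, U(o)
$$

The only difference is whether the probabilities come from conditioning on $A$ or from intervening to set $A$.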

What I am confused about is that each decision theory involves a choice to designate some variables in the universe as “actions” and all the others as “consequences.” I’m having trouble formulating a principled rule that tells us why some things can be considered actions and others not, without resorting to free will talk.

So for example, consider the following setup:

There’s a gene G in some humans that causes them to have strong desires for candy (D). This gene also causes low blood sugar (B) via a separate mechanism. Eating lots of candy (E) causes increased blood sugar. And finally, people have self-control (S), which helps them not eat candy, even if they really desire it.

We can represent all of these relationships in the following diagram.

Now we can compare how EDT and CDT will decide on what to do.

If EDT looks at the expected utility of eating candy vs not eating candy, they’ll find both a negative dependence (eating candy makes low blood sugar less likely) and a positive dependence (eating candy is evidence that you have the gene, which makes it more likely that you have low blood sugar).

Let’s suppose that the positive dependence outweighs the negative dependence, so that EDT ends up seeing that eating candy makes it overall more likely that you have low blood sugar.

P(B | E) > P(B)

What does CDT calculate? Well, they look at the causal conditional probability P(B | do E). In other words, they calculate their probabilities according to the following diagram.

Now they’ll see only a single dependence between eating candy (E) and having low blood sugar (B): the direct causal dependence. Thus, they end up thinking that eating candy makes them less likely to have low blood sugar.

P(B | do E) < P(B)
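To make the two inequalities concrete, here is a minimal sketch that enumerates a toy version of the model. All of the probabilities are hypothetical numbers chosen for illustration (the post gives none), and the arrows assumed are G → D, G → B, D → E, S → E, and E → B.

```python
from itertools import product

# Hypothetical conditional probabilities (not from the post); every variable is 0/1.
P_G = 0.5                                    # P(G=1): has the gene
P_S = 0.5                                    # P(S=1): exercises self-control
P_D = {1: 0.9, 0: 0.1}                       # P(D=1 | G)
P_E = {(1, 0): 0.9, (1, 1): 0.3,             # P(E=1 | D, S)
       (0, 0): 0.2, (0, 1): 0.05}
P_B = {(1, 0): 0.9, (1, 1): 0.7,             # P(B=1 | G, E): eating lowers it
       (0, 0): 0.2, (0, 1): 0.1}


def weight(w, do):
    """Probability of world w. Variables in `do` are clamped, cutting their incoming arrows."""
    def factor(name, p_one):
        if name in do:
            return 1.0 if w[name] == do[name] else 0.0
        return p_one if w[name] == 1 else 1.0 - p_one

    return (factor("G", P_G)
            * factor("S", P_S)
            * factor("D", P_D[w["G"]])
            * factor("E", P_E[(w["D"], w["S"])])
            * factor("B", P_B[(w["G"], w["E"])]))


def prob_low_sugar(given=None, do=None):
    """P(B=1 | given, do(...)) by brute-force enumeration of all 32 worlds."""
    given, do = given or {}, do or {}
    num = den = 0.0
    for values in product((0, 1), repeat=5):
        w = dict(zip("GSDEB", values))
        if any(w[k] != v for k, v in given.items()):
            continue
        p = weight(w, do)
        den += p
        num += p * w["B"]
    return num / den


p_b = prob_low_sugar()                        # P(B)
p_b_given_e = prob_low_sugar(given={"E": 1})  # P(B | E), what EDT uses
p_b_do_e = prob_low_sugar(do={"E": 1})        # P(B | do E), what CDT uses
print(f"P(B)={p_b:.3f}  P(B|E)={p_b_given_e:.3f}  P(B|do E)={p_b_do_e:.3f}")
```

With these made-up numbers, P(B | E) comes out above P(B) (roughly 0.56 vs 0.49) while P(B | do E) comes out below it (0.40), matching the two inequalities. Other parameter choices could reverse the evidential inequality, which is why the supposition that the positive dependence wins is needed.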

This difference in how they calculate probabilities may lead them to behave differently. So, for instance, if they both value having low blood sugar much more than eating candy, then the evidential decision theorist will eat the candy, and the causal decision theorist will not.

Okay, fine. This all makes sense. The problem is that both of them chose to make their decision on the basis of which value of E maximizes expected utility. But this was not their only choice!

They could instead have said, “Look, whether or not I actually eat the candy is not under my direct control. That is, the actual movement of my hand to the candy bar and the subsequent chewing and swallowing. What I’m controlling in this process is my brain state before and as I decide to eat the candy. In other words, what I can directly vary is the value of S – whether or not the self-controlled part of my mind tells me to eat the candy or not. The value of E that ends up actually obtaining is then a result of my choice of the value of S.”

If they had thought this way, then instead of calculating EU(E) and EU(~E), they would calculate EU(S) and EU(~S), and go with whichever one maximizes expected utility.

But now we get a different answer than before!

In particular, CDT and EDT are now looking at the same diagram, because when the causal decision theorist intervenes on the value of S, there are no causal arrows for them to break. This means that they calculate the same probabilities.

P(B | S) = P(B | do S)

And thus get the same expected utility values, resulting in them behaving the same way.
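A short derivation of why the two probabilities coincide, assuming (as the setup describes) that S has no incoming arrows and that the joint distribution factorizes along the arrows G → D, G → B, D → E, S → E, E → B. Lowercase letters denote values of the corresponding variables.

$$
P(g, s, d, e, b) = P(g)\,P(s)\,P(d \mid g)\,P(e \mid d, s)\,P(b \mid g, e)
$$

Intervening on S deletes the factor $P(s)$, and conditioning on S divides by $P(s)$, so the two operations give the same result:

$$
P(g, d, e, b \mid \mathrm{do}(s)) = P(g)\,P(d \mid g)\,P(e \mid d, s)\,P(b \mid g, e) = \frac{P(g, s, d, e, b)}{P(s)} = P(g, d, e, b \mid s)
$$

Summing both sides over $g$, $d$, and $e$ gives $P(b \mid \mathrm{do}(s)) = P(b \mid s)$. The same argument fails for E, because E has parents (D and S) whose factor cannot simply be divided out.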

Furthermore, somebody else might argue “No, don’t be silly. We don’t only have control over S, we have control over both S and E.” This corresponds to varying both S and E in our expected utility calculation, and choosing the optimal values. That is, they choose the actions that correspond to the max of the set { EU(S, E), EU(S, ~E), EU(~S, E), EU(~S, ~E) }.

Another person might say “Yes, I’m in control of S. But I’m also in control of D! That is, if I try really hard, I can make myself not desire things that I previously desired.” This person will vary S and D, and choose that which optimizes expected utility.

Another person will claim that they are in control of S, D, and E, and their algorithm will look at all eight combinations of these three values.

Somebody else might say that they have partial control over D. Another person might claim that they can mentally affect their blood sugar levels, so that B should be directly included in their set of “actions” that they use to calculate EU!

And all of these people will, in general, get different answers.
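As a rough illustration of how the recommendation depends on the chosen action set, the sketch below runs EDT and CDT over several candidate sets of action variables. Everything numeric here is hypothetical: the conditional probabilities, the assumed arrows (G → D, G → B, D → E, S → E, E → B), and the utility function, which simply weights low blood sugar ten times as heavily as eating candy.

```python
from itertools import product

# Hypothetical model parameters (not from the post); every variable is 0/1.
P_G, P_S = 0.5, 0.5                                            # P(G=1), P(S=1)
P_D = {1: 0.9, 0: 0.1}                                         # P(D=1 | G)
P_E = {(1, 0): 0.9, (1, 1): 0.3, (0, 0): 0.2, (0, 1): 0.05}    # P(E=1 | D, S)
P_B = {(1, 0): 0.9, (1, 1): 0.7, (0, 0): 0.2, (0, 1): 0.1}     # P(B=1 | G, E)


def utility(w):
    # Assumed preferences: low blood sugar matters far more than candy.
    return 10 * w["B"] + 1 * w["E"]


def weight(w, do):
    """Probability of world w; variables in `do` are clamped (incoming arrows cut)."""
    def factor(name, p_one):
        if name in do:
            return 1.0 if w[name] == do[name] else 0.0
        return p_one if w[name] == 1 else 1.0 - p_one

    return (factor("G", P_G) * factor("S", P_S)
            * factor("D", P_D[w["G"]])
            * factor("E", P_E[(w["D"], w["S"])])
            * factor("B", P_B[(w["G"], w["E"])]))


def expected_utility(action, theory):
    """EU of an assignment to the action variables: EDT conditions, CDT intervenes."""
    do = action if theory == "CDT" else {}
    num = den = 0.0
    for values in product((0, 1), repeat=5):
        w = dict(zip("GSDEB", values))
        if any(w[k] != v for k, v in action.items()):
            continue
        p = weight(w, do)
        den += p
        num += p * utility(w)
    return num / den


def recommend(action_vars, theory):
    """Return the assignment to `action_vars` with the highest expected utility."""
    options = [dict(zip(action_vars, vals))
               for vals in product((0, 1), repeat=len(action_vars))]
    return max(options, key=lambda a: expected_utility(a, theory))


for action_vars in ("E", "S", "SE", "SD", "SDE"):
    print(action_vars,
          "EDT:", recommend(action_vars, "EDT"),
          "CDT:", recommend(action_vars, "CDT"))
```

With these numbers, an agent who treats only E as the action gets opposite advice from the two theories (EDT says eat, CDT says don't), while an agent who treats only S as the action gets the same advice from both (exercise self-control). For the larger action sets, CDT is indifferent between some options, because once E is clamped the values of S and D no longer affect anything it cares about; `max` then just returns the first of the tied options.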

***

Some of these possible choices of the “set of actions” are clearly wrong. For instance, a person who says that they can change the value of G by introspection, editing out the gene in all of their cells, is deluded.

But I’m not sure how to make a principled judgment as to whether or not a person should calculate expected utilities varying S and D, varying just S, varying just E, and other plausible choices.

What’s worse, I’m not exactly sure how to rigorously justify why some variables are “plausible choices” for actions, and others not.

What’s even worse, when I try to make these types of principled judgments, my thinking naturally seems to end up relying on free-will-type ideas. So we want to say that we are actually in control of S, and in a sense we can’t really freely choose the value of D, because it is determined by our genes.

But if we extend this reasoning to its extreme conclusion, we end up saying that we can’t control any of the values of the variables, as they are all the determined results of factors that are out of our control.

If somebody hands me a causal diagram and tells me which variables they are “in control of”, I can tell them what CDT recommends them to do and what EDT recommends them to do.

But if I am just handed the causal diagram by itself, it seems that I am required to make some judgments about what variables are under the “free control” of the agent in question.

One potential way out of this is to say that variable X is under the control of agent A if, when they decide that they want to do X, then X happens. That is, X is an ‘action variable’ if you can always trace a direct link between the event in the brain of A of ‘deciding to do X’ and the actual occurrence of X.

Two problems that I see with this are (1) that this seems like it might be too strong a requirement, and (2) that this seems to rely on a starting assumption that the event of ‘deciding to do X’ is an action variable.

On (1): we might want to say that I am “in control” of my desire for candy, even if my decision to diminish it is only sometimes effectual. Do we say that I am only in control of my desire for candy in those exact instances when I actually successfully determine its value? How about the cases when my decision to desire candy lines up with whether or not I desire candy, but purely by coincidence? For instance, somebody walking around constantly “deciding” to keep the moon in orbit around the Earth is not in “free control” of the moon’s orbit, but this way of thinking seems to imply that they are.

And on (2): Procedurally, this method involves introducing a new variable (“Decides X”), and seeing whether or not it empirically leads to X. After all, if the part of your brain that decides X is completely out of your control, then it makes as much sense to say that you can control X as to say that you can control the moon’s orbit. But then we have a new question, about how much this decision is under your control. There’s a circularity here.

We can determine if “Decides X” is a proper action variable by imagining a new variable “Decides (Decides X)”, and seeing if it actually is successful at determining the value of “Decides X”. And then, if somebody asks us how we know that “Decides (Decides X)” is an action variable, we look for a variable “Decides (Decides (Decides X))”. Et cetera.

How can we figure our way out of this mess?