Everybody knows that quantum mechanics is weird. But there are plenty of weird things in the world. We’ve pretty much come to expect that as soon as we look out beyond our little corner of the universe, we’ll start seeing intuition-defying things everywhere. So why does quantum mechanics get the reputation of being especially weird?

Bell’s theorem is a good demonstration of how the weirdness of quantum mechanics is in a realm of its own. It concerns a set of predicted (and later experimentally verified) results that seem to defy all attempts at classical interpretation.

The Experimental Results

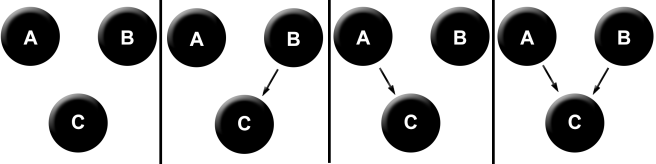

Here is the experimental setup:

In the center of the diagram, we have a black box that spits out two particles every few minutes. These two particles fly in different directions to two detectors. Each detector has three available settings (marked by 1, 2, and 3) and two bulbs, one red and the other green.

Shortly after a particle enters the detector, one of the two bulbs flashes. Our experiment is simply this: we record which bulb flashes on both the left and right detector, and we take note of the settings on both detectors at the time. We then try randomly varying the detector settings, and collect data for many such trials.

Quick comprehension test: Suppose that which bulb flashes is purely a function of some property of the particles entering the detector, and the settings don’t do anything. Then we should expect that changes in the settings will not have any impact on the frequency of flashing for each bulb. It turns out that we don’t see this in the experimental results.

One more: Suppose that the properties of the particles have nothing to do with which bulb flashes, and all that matters is the detector settings. What do we expect our results to be in this case?

Well, then we should expect that changing the detector settings will change which bulb flashes, and that the variation in which bulb flashes is fully accounted for by the detector settings. It turns out that this also doesn’t happen.

Okay, so what do we see in the experimental results?

The results are as follows:

(1) When the two detectors have the same settings:

The same color of bulb always flashes on the left and right.

(2) When the two detectors have different settings:

The same color bulb flashes on the left and right 25% of the time.

Different color bulbs flash on the left and right 75% of the time.

In some sense, the paradox is already complete. It turns out that some very minimal assumptions about the nature of reality tell us that these results are impossible. There is a hidden inconsistency within these results, and the only remaining task is to draw it out and make it obvious.

Assumptions

We’ll start our analysis by detailing our basic assumptions about the nature of the process.

Assumption 1: Lawfulness

The probability of an event is a function of all other events in the universe.

This assumption is incredibly weak. It just says that if you know everything about the universe, then you are able to place a probability distribution over future events. This isn’t even as strong as determinism, as it’s only saying that the future is a probabilistic function of the past. Determinism would be the claim that all such probabilities are 1 or 0, that is, the facts about the past fix the facts about the future.

From Assumption 1 we conclude the following:

There exists a function P(R | everything else) that accurately reports the frequency of the red bulb flashing, given the rest of the facts about the universe.

It’s hard to imagine what it would mean for this to be wrong. Even in a perfectly non-deterministic universe where the future is completely probabilistically independent of the past, we could still express what’s going to happen next probabilistically, just with all of the probabilities of events being independent. This is why even naming this assumption lawfulness is too strong – the “lawfulness” could be probabilistic, chaotic, and incredibly minimal.

The next assumption constrains this function a little more.

Assumption 2: Locality

The probability of an event only depends on events local to it.

This assumption is justified by virtually the entire history of physics. Over and over we find that particles influence each other’s behavior through causal intermediaries. Einstein’s Special Theory of Relativity provides a precise limitation on causal influences: the absolute fastest that causal influences can propagate is the speed of light. The light cone of an event is defined as all the past events that could have causally influenced it, given the speed of light limit, and all future events that can be causally influenced by this event.

Combining Assumption 1 and Assumption 2, we get:

P(R | everything else) = P(R | local events)

So what are these local events? Given our experimental design, we have two possibilities: the particle entering the detector, and the detector settings. Our experimental design explicitly rules out other causal influences by holding them fixed. The only things that vary are the detector settings, which we the experimenters change, and the types of particles produced by the central black box. All else is stipulated to be held constant.

Thus we get our third, and final assumption.

Assumption 3: Good experimental design

The only local events relevant to the bulb flashing are the particle that enters the detector and the detector setting.

Combining these three assumptions, we get the following:

P(R | everything else) = P(R | particle & detector setting)

We can think of this function a little differently, by asking about a particular particle with a fixed set of properties.

P_particle(R | detector setting)

We haven’t changed anything but the notation – this is the same function as what we originally had, just carrying a different meaning. Now it tells us how likely a given particle is to cause the red bulb to flash, given a certain detector setting. This allows us to categorize all different types of particles by looking at all different settings.

Particle type is defined by

( P_particle(R | Setting 1), P_particle(R | Setting 2), P_particle(R | Setting 3) )

This fully defines our particle type for the purposes of our experiment. The set of particle types is the set of three-tuples of probabilities.
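As a rough sketch of this idea (not anything from the experiment itself), a particle type can be modeled in Python as a three-tuple of red-flash probabilities, one per setting. The names `ParticleType` and `flash` are hypothetical, introduced only for illustration.

```python
import random
from typing import Tuple

# Hypothetical representation: the probability of a red flash under
# settings 1, 2 and 3, in that order.
ParticleType = Tuple[float, float, float]

def flash(particle: ParticleType, setting: int, rng: random.Random) -> str:
    """Return "R" or "G" for one particle passing a detector at `setting` (1, 2 or 3)."""
    p_red = particle[setting - 1]
    # rng.random() lies in [0, 1), so p_red = 1.0 always gives "R"
    # and p_red = 0.0 always gives "G".
    return "R" if rng.random() < p_red else "G"

rng = random.Random(0)
example_type: ParticleType = (1.0, 0.5, 0.0)  # illustrative values only
print([flash(example_type, setting, rng) for setting in (1, 2, 3)])
```

Under this sketch, the detector only ever sees the particle's tuple and its own setting, which is exactly what the three assumptions allow.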

So to summarize, here are the only three assumptions we need to generate the paradox.

Lawfulness: Events happen with probabilities that are determined by facts about the universe.

Locality: Causal influences propagate locally.

Good experimental design: Only the particle type and detector setting influence the experiment result.

Now, we generate a contradiction between these assumptions and the experimental results!

Contradiction

Recall our experimental results:

(1) When the two detectors have the same settings:

The same color of bulb always flashes on the left and right.

(2) When the two detectors have different settings:

The same color bulb flashes on the left and right 25% of the time.

Different color bulbs flash on the left and right 75% of the time.

We are guaranteed by Assumptions 1 to 3 that there exists a function P_particle(R | detector setting) that describes the frequencies we observe for a detector. We have two particles and two detectors, so we are really dealing with two functions for each experimental trial.

Left particle: P_left(R | left setting)

Right particle: P_right(R | right setting)

From Result (1), we see that when left setting = right setting, the same color always flashes on both sides. This means two things: first, that the black box always produces two particles of the same type, and second, that the behavior observed in the experiment is deterministic.

Why must they be the same type? Well, if they were different, then we would expect different frequencies on the left and the right. Why determinism? If the results were at all probabilistic, then even if the probability functions for the left and right particles were the same, we’d expect to still see them sometimes give different results. Since they don’t, the results must be fully determined.

P_left(R | setting 1) = P_right(R | setting 1) = 0 or 1

P_left(R | setting 2) = P_right(R | setting 2) = 0 or 1

P_left(R | setting 3) = P_right(R | setting 3) = 0 or 1
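One way to spell out this step is the short derivation below. It is a sketch that assumes the two bulbs flash independently once the particle types and settings are given, which is what the locality assumption is meant to provide. The symbols p and q are introduced here for convenience: p is the left particle's probability of a red flash under setting n, and q is the right particle's.

```latex
\begin{align*}
P(\text{same color} \mid \text{setting } n \text{ on both sides})
  &= pq + (1-p)(1-q) = 1 \\
\Longrightarrow\quad 1 - \bigl[\,pq + (1-p)(1-q)\,\bigr]
  &= p(1-q) + q(1-p) = 0 \\
\Longrightarrow\quad p(1-q) = 0 \quad &\text{and} \quad q(1-p) = 0
  \qquad \text{(both terms are non-negative)} \\
\Longrightarrow\quad p = q = 0 \quad &\text{or} \quad p = q = 1 .
\end{align*}
```

So perfect agreement at equal settings forces the two probabilities to be equal and to be either 0 or 1, for each of the three settings.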

This means that we can fully express particle types by a function that takes in a setting (1, 2, or 3), and returns a value (0 or 1) corresponding to whether or not the red bulb will flash. How many different types of particles are there? Eight!

Abbreviation: Pn = P(R | setting n)

P1 = 1, P2 = 1, P3 = 1 : (RRR)

P1 = 1, P2 = 1, P3 = 0 : (RRG)

P1 = 1, P2 = 0, P3 = 1 : (RGR)

P1 = 1, P2 = 0, P3 = 0 : (RGG)

P1 = 0, P2 = 1, P3 = 1 : (GRR)

P1 = 0, P2 = 1, P3 = 0 : (GRG)

P1 = 0, P2 = 0, P3 = 1 : (GGR)

P1 = 0, P2 = 0, P3 = 0 : (GGG)

The three-letter strings, such as (RRR), are shorthand for which bulb will flash under each of the three detector settings.
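The count of eight can be checked with a few lines of Python. This is only an illustrative enumeration; the variable name `particle_types` is made up for the example.

```python
from itertools import product

# Each deterministic particle type fixes one color (R or G)
# for each of the three settings, giving 2 * 2 * 2 = 8 types.
particle_types = ["".join(colors) for colors in product("RG", repeat=3)]

print(len(particle_types))  # 8
print(particle_types)
# ['RRR', 'RRG', 'RGR', 'RGG', 'GRR', 'GRG', 'GGR', 'GGG']
```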

Now we are ready to bring in experimental result (2). In 25% of the cases in which the settings are different, the same bulbs flash on either side. Is this possible given what we have just derived? No! Check out the following table, which describes what happens with RRR-type particles and RRG-type particles when the two detectors have different settings.

| (Left setting, Right setting) | RRR-type | RRG-type |
| --- | --- | --- |
| 1, 2 | R, R | R, R |
| 1, 3 | R, R | R, G |
| 2, 1 | R, R | R, R |
| 2, 3 | R, R | R, G |
| 3, 1 | R, R | G, R |
| 3, 2 | R, R | G, R |
| Same color | 100% | 33% |

Obviously, if the particle always triggers a red flash, then any combination of detector settings will result in a red flash. So when the particles are the RRR-type, you will always see the same color flash on either side. And when the particles are the RRG-type, you end up seeing the same color bulb flash in only two of the six cases with different detector settings.

By symmetry, we can extend this to all of the other types.

| Particle type | Percentage of the time that the same bulb flashes (for different detector settings) |
| --- | --- |
| RRR | 100% |
| RRG | 33% |
| RGR | 33% |
| RGG | 33% |
| GRR | 33% |
| GRG | 33% |
| GGR | 33% |
| GGG | 100% |
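The percentages in this table can be reproduced by brute force. The sketch below assumes both detectors receive the same deterministic particle type and that each of the six unequal setting pairs is equally likely; `same_color_rate` is a hypothetical helper name.

```python
from fractions import Fraction
from itertools import permutations, product

def same_color_rate(particle_type: str) -> Fraction:
    """Fraction of the six unequal (left, right) setting pairs that give matching colors."""
    pairs = list(permutations((1, 2, 3), 2))  # (1, 2), (1, 3), (2, 1), ...
    matches = sum(
        particle_type[left - 1] == particle_type[right - 1] for left, right in pairs
    )
    return Fraction(matches, len(pairs))

rates = {
    "".join(colors): same_color_rate("".join(colors))
    for colors in product("RG", repeat=3)
}
for name, rate in rates.items():
    print(name, rate)
```

The exact value behind the rounded 33% entries is 1/3: a type with one odd color out matches on only two of the six unequal setting pairs.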

Recall, in our original experimental results, we found that the same bulb flashes 25% of the time when the detectors are on different settings. Is this possible? Is there any distribution of particle types that could be produced by the central black box that would give us a 25% chance of seeing the same color?

No! How could there be? No matter how the black box produces particles, the best it can do is generate a distribution without RRRs and GGGs, in which case we would see 33% instead of 25%. In other words, the lowest that this value could possibly get is 33%!

This is the contradiction. Bell’s inequality points out a contradiction between theory and observation:

Theory: P(same color flash | different detector settings) ≥ 1/3 ≈ 33%

Experiment: P(same color flash | different detector settings) = 25%
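To see that no mixture of particle types can dip below one third, here is a small numerical check. It is a sketch under the same assumptions as the argument above: the black box emits each of the eight types with some fixed weight, and the six unequal setting pairs are equally likely and chosen independently of the particle type. The names `TYPES`, `PAIRS`, `same_color_rate` and `mixture_rate` are hypothetical.

```python
import random
from fractions import Fraction
from itertools import permutations, product

TYPES = ["".join(t) for t in product("RG", repeat=3)]  # RRR, RRG, ..., GGG
PAIRS = list(permutations((1, 2, 3), 2))               # six unequal setting pairs

def same_color_rate(particle_type: str) -> Fraction:
    """Same-color rate over unequal setting pairs for one deterministic type."""
    matches = sum(particle_type[l - 1] == particle_type[r - 1] for l, r in PAIRS)
    return Fraction(matches, len(PAIRS))

def mixture_rate(weights) -> Fraction:
    """Same-color rate when the black box emits TYPES in proportion to `weights`."""
    total = sum(weights)
    return sum(w * same_color_rate(t) for w, t in zip(weights, TYPES)) / total

# Try many random integer-weighted mixtures; none falls below 1/3.
rng = random.Random(0)
trials = [mixture_rate([rng.randint(1, 100) for _ in TYPES]) for _ in range(1000)]
print(min(trials) >= Fraction(1, 3))            # True

# The best case: never emit RRR or GGG.
print(mixture_rate([0, 1, 1, 1, 1, 1, 1, 0]))   # 1/3
```

The random search is only a sanity check. The real reason is that the mixture rate is a weighted average of the per-type rates, and a weighted average can never be smaller than its smallest term, which here is 1/3.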

Summary

We have a contradiction between experimental results and a set of assumptions about reality. So one of our assumptions has to go. Which one?

Assumption 3: Experimental design. Good experimental design can be challenged, but this would require more detail on precisely how these experiments are done. The key feature of this is that you would have to propose a mechanism by which changes to the detector setting end up altering other relevant background factors that affect the experiment results. You’d also have to be able to do this for all the other subtly different variants of Bell’s experiment that give the same result. While this path is open, it doesn’t look promising.

Assumption 1: Lawfulness. Challenging the lawfulness of the universe looks really difficult. As I said before, I can barely imagine what a universe that doesn’t adhere to some version of Assumption 1 looks like. It’s almost tautological that some function will exist that can probabilistically describe the behavior of the universe. The universe must have some behavior, and why would we be unable to describe it probabilistically?

Assumption 2: Locality. This leaves us with locality. This is also really hard to deny! Modern physics has repeatedly confirmed that the speed of light acts as a speed limit on causal interactions, and that any influences must propagate locally. But perhaps quantum mechanics requires us to overthrow this old assumption and reveal it as a mere approximation to a deeper reality, as has been done many times before.

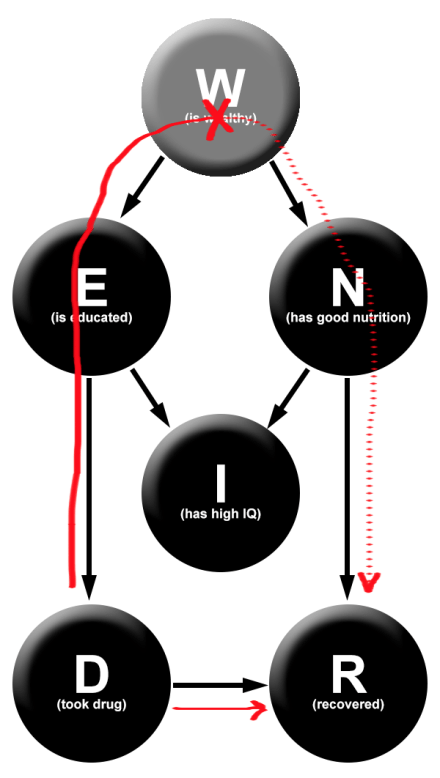

If we abandon number 2, we are allowing for the existence of statistical dependencies between variables that are entirely causally disconnected. Here’s Bell’s inequality in a causal diagram:

Since the detector settings on the left and the right are independent by assumption, we end up finding an unexplained dependence between the left particle and the right particle. Neither the common cause between them nor any sort of subjunctive dependence à la timeless decision theory is able to explain away this dependence. In quantum mechanics, this dependence is given a name: entanglement. But of course, naming it doesn’t make it any less mysterious. Whatever entanglement is, it is something completely new to physics and challenges our intuitions about the very structure of causality.