Chapter 1: When Circularity is not Paradoxical

Circular definitions can be quite bad. Take the definition “a is the natural number that is equal to a”, or worse, “a is the natural number that is NOT equal to a”. Or, for an even more perverse one, “a+1, where a is the largest number that can be defined in fewer than 50 words.” But just as not all self-reference is paradoxical, not all circularity is vicious. Circular definitions form a crucial component of every area of math, where they go under the more polite term “recursion.”

The key component of a benign circular definition is that it avoids infinite regress. So “a=a” is unhelpful, because to evaluate a you must first know what a evaluates to. But what about “aₙ = n ⋅ aₙ₋₁, and a₀ = 1”? Now to know a₅₀₀, you only need to know a₄₉₉, which in turn only requires you know a₄₉₈, which requires… and so on, each step moving a little closer to the base case “a₀ = 1”, at which point you can travel all the way back up the chain to discover that a₅₀₀ = 500!. (That’s 500 factorial, not an excitable 500.)
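As a minimal sketch of the difference between the two kinds of definition, here is the sequence above in Python next to the regress of “a = a”. The function names `a` and `vicious` are just illustrative choices.

```python
def a(n):
    """a_n = n * a_(n-1), with base case a_0 = 1."""
    if n == 0:
        return 1            # base case: the chain of lookups stops here
    return n * a(n - 1)     # each call moves one step closer to a_0


def vicious():
    """The definition "a = a": evaluating it requires evaluating it first."""
    return vicious()


try:
    vicious()
except RecursionError:
    print("a = a never reaches a base case")

print(a(5))  # 120
```

Calling `a(500)` works the same way, since 501 nested calls fit comfortably inside CPython's default recursion limit of 1000 when run from a plain script.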

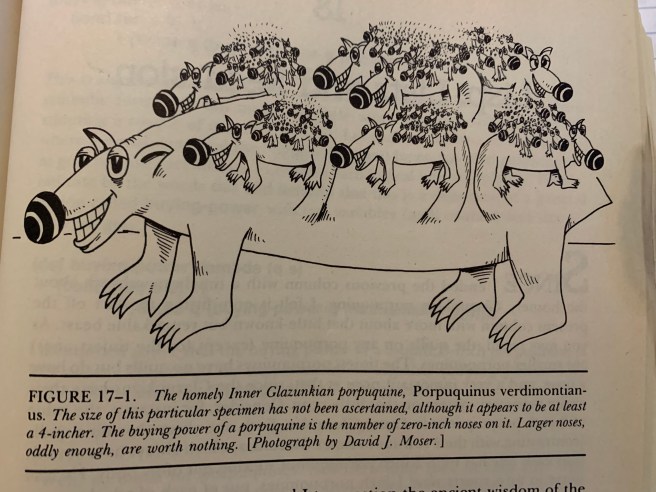

Douglas Hofstadter has a parable about the “porpuquine”, a rare cousin of the porcupine that grows smaller porpuquines on its back rather than quills. The market value of such a beast is determined by the total number of porpuquine noses you can find on it. And if you’re worried about an infinite regress, don’t fear! There’s a smallest porpuquine, which is entirely bald.
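The porpuquine valuation is itself a small recursive definition. A sketch, assuming (purely for illustration) that a porpuquine is modelled as a Python list of the smaller porpuquines on its back:

```python
def nose_count(porpuquine):
    """One nose for this porpuquine, plus the noses of everything on its back."""
    return 1 + sum(nose_count(child) for child in porpuquine)


bald = []                       # the smallest porpuquine: nothing on its back
small = [bald, bald]            # carries two bald porpuquines
large = [small, small, bald]

print(nose_count(bald))   # 1
print(nose_count(large))  # 8
```

The bald porpuquine plays the role of the base case: the sum over an empty back is 0, so the recursion stops there.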

Recursive definitions are extremely nice when we want to define a function whose outputs are related to each other more simply than they are to the inputs. Some examples (in which S stands for the successor function, which takes 0 to 1 to 2 to …):

Addition

∀x (x + 0 = x)

∀x∀y (x + S(y) = S(x+y))

Multiplication

∀x (x ⋅ 0 = 0)

∀x∀y (x ⋅ S(y) = x⋅y + x)

Notice the similarity between these two definitions. In each case we define what happens when the second argument is 0, and then we define what happens when the second argument is a successor. This covers all the natural numbers! Let’s show this in action by proving that 9+3 = 12.

9 + 3

9 + S(2)

S(9 + 2)

S(9 + S(1))

S(S(9 + 1))

S(S(9 + S(0)))

S(S(S(9 + 0)))

S(S(S(9)))

S(S(10))

S(11)

12

The step from S(S(S(9 + 0))) to S(S(S(9))) is where we hit our base case (evaluating x+0), at which point we need merely pass our answers back up the levels to get the solution of our original problem.

Another: Let’s show that 3⋅2 = 6.

3⋅2

3⋅S(1)

3⋅1 + 3

3⋅S(0) + 3

(3⋅0 + 3) + 3

(0 + 3) + 3

(0 + S(2)) + 3

S(0 + 2) + 3

S(0 + S(1)) + 3

S(S(0 + 1)) + 3

S(S(0 + S(0))) + 3

S(S(S(0 + 0))) + 3

S(S(S(0))) + 3

S(S(1)) + 3

S(2) + 3

3 + 3

3 + S(2)

S(3 + 2)

S(3 + S(1))

S(S(3 + 1))

S(S(3 + S(0)))

S(S(S(3 + 0)))

S(S(S(3)))

S(S(4))

S(5)

6

There are three places where we use a “base case rule”: the first, going from (3⋅0 + 3) + 3 to (0 + 3) + 3, is the base case for multiplication; the second and third, which produce S(S(S(0))) + 3 and S(S(S(3))), are the base case for addition. I like that you can see the complexity growing in the length of the line until you hit the base case, at which point the lines start shortening until we next need to apply the recursive step.
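The two recursive definitions translate almost word for word into code. This is only a sketch: it uses Python integers with `y - 1` standing in for “the number whose successor is y”, and the `base_hits` counter is an addition of mine to record how often each base case is reached.

```python
from collections import Counter

base_hits = Counter()


def add(x, y):
    """x + 0 = x;  x + S(y') = S(x + y')."""
    if y == 0:
        base_hits["add"] += 1
        return x
    return add(x, y - 1) + 1        # S(x + y'), where y = S(y')


def mul(x, y):
    """x * 0 = 0;  x * S(y') = x * y' + x."""
    if y == 0:
        base_hits["mul"] += 1
        return 0
    return add(mul(x, y - 1), x)


print(mul(3, 2))                            # 6
print(base_hits["mul"], base_hits["add"])   # 1 2
```

The counts agree with the derivation of 3⋅2 = 6: one use of the multiplication base case and two uses of the addition base case.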

Chapter 2: Infinite Recursion

Now what happens if we want to add x + y but y is neither 0 nor a successor? “Huh? Isn’t every non-zero number a successor of some number?” I hear you ask. Not infinity! Or more precisely, not ω. Our recursive definitional scheme is all well and good for finite numbers, but it fails when we enter the realm of transfinite ordinals.

No problem! There’s an easy patch. The problem is that we don’t know how to add or multiply x and y when y is a limit ordinal. So we just add on an extra rule to cover this case!

Addition

α + 0 = α

α + S(β) = S(α + β)

α + λ = ⋃{α + β | β ∈ λ} (for λ a limit ordinal)

Multiplication

α ⋅ 0 = 0

α ⋅ S(β) = α⋅β + α

α ⋅ λ = ⋃{α ⋅ β | β ∈ λ} (for λ a limit ordinal)

If you’re scratching your head right now at the notion of “the union of a set of numbers” or “a number being an element of another number”, go read this for a thorough summary. In short, in ZFC we encode each number as the set of all smaller numbers (e.g. 3 = {0, 1, 2}), and ω (the first infinite number) is defined to be the set of all natural numbers (ω = {0, 1, 2, 3, …}). So 3 ⋃ 5 = {0,1,2} ⋃ {0,1,2,3,4} = {0,1,2,3,4} = 5. In general, the union of a set of numbers is just the maximum number among them (or if there is no maximum number, then it’s the supremum of all the numbers).
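To make the encoding concrete, here is a small sketch that builds finite numbers as nested `frozenset`s, using the standard successor S(x) = x ∪ {x}. The helper name `von_neumann` is my own, and of course only finite numbers can be built this way.

```python
def von_neumann(n):
    """Encode n as the set of all smaller numbers, starting from 0 = {}."""
    s = frozenset()
    for _ in range(n):
        s = s | {s}          # successor: S(x) = x ∪ {x}
    return s


three, five = von_neumann(3), von_neumann(5)

print(three | five == five)          # True: 3 ∪ 5 = 5
print(von_neumann(2) in three)       # True: 2 ∈ 3
print(len(five))                     # 5: the number 5 has five elements

# The union of a set of numbers is the largest of them.
numbers = [von_neumann(k) for k in (2, 7, 4)]
print(frozenset().union(*numbers) == von_neumann(7))   # True
```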

Outside the context of ZFC, we can equally write α + λ = lim (α + β) as β → λ⁻. We can also write α + λ = sup {α + β | β < λ}. And for those more familiar with ZFC, we don’t need to take the union over all β ∈ λ; we can make do with any cofinal subset (we’ll do this to simplify calculations greatly later in the post).

Now we’ve extended + and × into the infinite, and what we get is a little unusual. The first major sign that things have changed is that addition and multiplication are no longer commutative.

1 + ω = ⋃{1 + n | n ∈ ω} = ⋃{1, 2, 3, 4, …} = ω

ω + 1 = ω + S(0) = S(ω + 0) = S(ω)

ω certainly doesn’t equal S(ω), so ω + 1 ≠ 1 + ω.

Distributivity also fails, at least on the right! For instance (ω + 3) ⋅ 2 = ω⋅2 + 3, not ω⋅2 + 6. But we don’t lose every nice algebraic feature. Multiplication still distributes over addition from the left, α ⋅ (β + γ) = α⋅β + α⋅γ, and associativity still holds for both addition and multiplication! I.e. α + (β + γ) = (α + β) + γ and α ⋅ (β ⋅ γ) = (α ⋅ β) ⋅ γ. This result is really important.

Earlier we showed that 1 + ω = ω. One can easily show that in general, n + ω = ω for any finite n, and likewise α + β = β whenever α is “infinitely smaller” than β. This notion of “infinitely smaller” is a little subtle; ω is not infinitely smaller than ω⋅2, but it is infinitely smaller than ω^2. (It should be noted that everything up to here has been standard textbook stuff, but from here on I will be introducing original notions that I haven’t seen elsewhere. So treat what follows with skepticism.)

A useful definition for us will be α << β (read as “α is infinitely less than β”) if α + β = β. We’ll also say that α and β are “comparable” if neither is infinitely smaller than the other: α ~ β if ¬(α << β) and ¬(β << α). Some conjectures I have about the comparability relation:

Conjecture 1: Commutativity holds between comparable ordinals.

Conjecture 2: α ~ β if and only if there’s some finite n such that α < β⋅n and β < α⋅n.

I’m actually fairly confident that Conjecture 1 is false, and would love to see a counterexample.

Here are some more useful identities for transfinite addition and multiplication:

Theorem 1: For finite n and limit ordinal λ, α⋅(λ + n) = α⋅λ + α⋅n

Proof sketch: α⋅(λ + n) = α⋅(λ + (n-1)) + α = α⋅(λ + (n-2)) + α + α = … = α⋅(λ + 0) + α + α + … + α = α⋅λ + α⋅n.

Theorem 2: For finite n ≥ 1 and β << α, (α + β)⋅n = α⋅n + β.

Proof sketch: (α + β)⋅n = (α + β)⋅(n-1) + (α + β) = (α + β)⋅(n-2) + (α + β) + (α + β) = … = (α + β)⋅0 + (α + β) + … + (α + β) = (α + β) + (α + β) + … + (α + β) + (α + β) = α + (β + α) + (β + … + α) + β = α + α + α + … + α + β = α⋅n + β.

In case it’s not clear what’s going on with this proof, the idea is that we expand (α + β)⋅n out to (α + β) + … + (α + β) (n times). Then we shift parentheses to enclose all the middle βs, making them look like (β + α). But then by assumption, since β << α, the β vanishes in each of these middle terms, leaving just the final one: α + α + α + … + α + β = α⋅n + β.
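The identities in this chapter can be spot-checked mechanically. The sketch below is not a transcription of the recursive definitions (a program can’t take a union over infinitely many cases); instead it assumes the standard Cantor normal form rules for adding and multiplying ordinals, and it only handles ordinals below ω^ω. The names `nat`, `OMEGA`, `add`, `mul` and `infinitely_less` are illustrative.

```python
# An ordinal below ω^ω is a tuple of (exponent, coefficient) pairs with
# strictly decreasing natural-number exponents and positive coefficients.
# () is 0, ((0, 3),) is 3, and ((1, 1), (0, 3)) is ω + 3.

def nat(n):
    return ((0, n),) if n > 0 else ()


OMEGA = ((1, 1),)


def add(a, b):
    """a + b: terms of a below b's leading exponent are absorbed."""
    if not b:
        return a
    exp, coeff = b[0]
    kept = tuple(t for t in a if t[0] > exp)
    same = sum(c for e, c in a if e == exp)
    return kept + ((exp, same + coeff),) + b[1:]


def mul(a, b):
    """a ⋅ b, distributing a from the left over the terms of b."""
    if not a or not b:
        return ()
    lead_exp, lead_coeff = a[0]
    result = ()
    for exp, coeff in b:
        if exp > 0:     # a ⋅ (ω^exp ⋅ coeff) = ω^(lead_exp + exp) ⋅ coeff
            term = ((lead_exp + exp, coeff),)
        else:           # a ⋅ (finite coeff): only the leading coefficient scales
            term = ((lead_exp, lead_coeff * coeff),) + a[1:]
        result = add(result, term)
    return result


def infinitely_less(a, b):
    """The relation written a << b in the text: a + b = b."""
    return add(a, b) == b


omega_squared = mul(OMEGA, OMEGA)

print(add(nat(1), OMEGA) == OMEGA)                    # True:  1 + ω = ω
print(add(OMEGA, nat(1)) == OMEGA)                    # False: ω + 1 ≠ ω
print(mul(add(OMEGA, nat(3)), nat(2)))                # ((1, 2), (0, 3)), i.e. ω⋅2 + 3
print(infinitely_less(OMEGA, mul(OMEGA, nat(2))))     # False
print(infinitely_less(OMEGA, omega_squared))          # True

# Theorem 2 with α = ω^2, β = ω, n = 3: (α + β)⋅3 = α⋅3 + β
lhs = mul(add(omega_squared, OMEGA), nat(3))
rhs = add(mul(omega_squared, nat(3)), OMEGA)
print(lhs == rhs)                                     # True
```

Because `mul` is built on left distributivity, it can’t serve as an independent check of Theorem 1; the checks of non-commutativity, right distributivity and Theorem 2 are the informative ones.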

Infinite exponentiation, tetration and so on can be defined in the exact same way as addition and multiplication: first cover the 0 case, then the successor case, and finally the limit case. And the limit case is defined by just taking the union of all smaller cases.

Chapter 3: Transfinite Factorials

Now, what’s the first function you think of when you think of recursively defined functions? That’s right, the factorial! I mentioned it at the beginning of this post, long ago: n! = n⋅(n-1)! and 0! = 1. So let’s extend this definition to obtain a transfinite factorial!

Factorial

0! = 1

S(α)! = α! ⋅ S(α)

λ! = ⋃{β! | β ∈ λ} (for λ a limit ordinal)

Now we can ask what ω! is.

Theorem: ω! = ω

Proof: ω! = ⋃{n! | n ∈ ω} = ⋃{1, 2, 6, 24, 120, …} = ω (since {1, 2, 6, 24, 120, …} is a cofinal subset of ω).

Next, (ω+1)! = ω! ⋅ (ω + 1) = ω ⋅ (ω + 1) = ω⋅ω + ω⋅1 = ω^2 + ω. Note that to prove this, we’ve used Theorem 1 from before.

And we can keep going from there.

Theorem: For finite n ≥ 1, (ω + n)! = ω^(n+1) + ω^n⋅n + ω^(n-1)⋅(n-1) + … + ω^2⋅2 + ω.

Try to prove this for yourself!
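If you’d like to check your answer numerically first, here is a sketch that builds (ω + n)! one successor step at a time and compares it with the closed form. It takes ω! = ω as given and assumes the standard Cantor normal form fact that, for k ≥ 1, (ω^e⋅c + rest) ⋅ (ω + k) = ω^(e+1) + ω^e⋅(c⋅k) + rest. The function names are my own.

```python
def omega_plus_n_factorial(n):
    """(ω + n)! as a list of (exponent, coefficient) pairs, largest exponent first."""
    terms = [(1, 1)]                        # ω! = ω, taken as given
    for k in range(1, n + 1):
        # Successor rule: (ω + k)! = (ω + (k-1))! ⋅ (ω + k)
        e, c = terms[0]
        terms = [(e + 1, 1), (e, c * k)] + terms[1:]
    return terms


def closed_form(n):
    """ω^(n+1) + ω^n⋅n + ... + ω^2⋅2 + ω, for n >= 1."""
    return [(n + 1, 1)] + [(j, j) for j in range(n, 1, -1)] + [(1, 1)]


def show(terms):
    parts = []
    for e, c in terms:
        base = "ω" if e == 1 else f"ω^{e}"
        parts.append(base if c == 1 else f"{base}⋅{c}")
    return " + ".join(parts)


print(show(omega_plus_n_factorial(3)))   # ω^4 + ω^3⋅3 + ω^2⋅2 + ω
print(all(omega_plus_n_factorial(n) == closed_form(n) for n in range(1, 20)))  # True
```

This only confirms the pattern for the values tried; the induction is still yours to do.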

Now that we know what (ω + n)! is for each finite n, we are able to calculate what (ω⋅2)! is (using the fact that {ω + n | n ∈ ω} is a cofinal subset of ω⋅2). I’ll write its value, as well as the next few interesting ordinals:

(ω⋅2)! = ⋃{ω!, (ω+1)!, (ω+2)!, …} = ω^ω

(ω⋅3)! = ω^(ω⋅2)

(ω⋅n)! = ω^(ω⋅(n-1))

Using the same trick as before, this enables us to solve (ω^2)!:

(ω^2)! = ⋃{ω!, (ω⋅2)!, (ω⋅3)!, …} = ⋃{ω, ω^ω, ω^(ω⋅2), ω^(ω⋅3), …} = ω^(ω^2)

From here, things suddenly take on a remarkable regularity (for a while at least). The factorials of limit ordinals above ω^2 appear to have the following structure, at least up until we arrive at the first ordinal where standard notation fails us: ω^ω^ω^… = ε₀.

Conjecture: For λ a limit ordinal such that ω^2 ≤ λ < ε₀, λ! = ω^λ

If this conjecture is right, then (ω^ω)! = ω^(ω^ω), and (ω^(ω^ω))! = ω^(ω^(ω^ω)), and so on. We can also write this using tetration notation by saying that (ⁿω)! = ⁿ⁺¹ω.

But something different happens once we get to ε0!

Conjecture: ε₀! = ⋃{(ⁿω)! | n ∈ ω} = ⋃{ω, ²ω, ³ω, ⁴ω, …} = ε₀

If this is right, then this is pretty interesting behavior! The fixed points of the factorial function are 1, ω, and ε₀, at the very least. What happens after ε₀? The factorial function “loops” in a certain sense! Just as (ω+1)! was ω^2 + ω, so (ε₀+1)! ends up being ε₀^2 + ε₀. And (ε₀⋅2)! = ε₀^ε₀, paralleling (ω⋅2)! = ω^ω. And so on.

Conjecture: εₙ is a fixed point of the factorial function for all n.

There are plenty more questions to be answered. Is ω₁ another fixed point of the factorial function? What exactly does the list of all the fixed points look like? Are there any cardinals that aren’t equal to their own factorial? What exactly is the general pattern for factorials of successor ordinals? How does α! compare to α^α in general?

Conjecture 1 is false, for example for ω and ω+1. Conjecture 2 is true if you consider the contrapositive: if β ≥ α⋅ω, then β = α⋅ω + γ for some ordinal γ, so α + β = α + α⋅ω + γ = α⋅ω + γ = β.
