Previously I talked about the arithmetic hierarchy for sets, and how it relates to the decidability of sets. There’s also a parallel notion of the arithmetic hierarchy for sentences of Peano arithmetic, and it relates to the difficulty of deciding the truth value of those sentences.

Truth value here and everywhere else in this post refers to truth value in the standard model of arithmetic. Truth value in the sense of “being true in all models of PA” is a much simpler matter; PA is recursively axiomatizable and first order logic is sound and complete, so any sentence that’s true in all models of PA can be eventually proven by a program that enumerates all the theorems of PA. So if a sentence is true in all models of PA, then there’s an algorithm that will tell you that in a finite amount of time (though it will run forever on an input that’s false in some models).

Not so for truth in the standard model! As we’ll see, whether a sentence evaluates to true in the standard model of arithmetic turns out to be much more difficult to determine in general. Only for the simplest sentences can you decide their truth value using an ordinary Turing machine. And the set of all sentences is in some sense infinitely uncomputable (you’ll see in a bit in what sense exactly this is).

What we’ll discuss is a way to convert sentences of Peano arithmetic to computer programs. Before diving into that, though, one note of caution is necessary: the arithmetic hierarchy for sentences is sometimes talked about purely syntactically (just by looking at the sentence as a string of symbols) and other times is talked about semantically (by looking at logically equivalent sentences). Here I will be primarily interested in the entirely-syntactic version of the arithmetic hierarchy. If you’ve only been introduced to the semantic version of the hierarchy, what you see here might differ a bit from what you recognize.

Let’s begin!

The simplest types of sentences have no quantifiers at all. For instance…

0 = 0

2 ⋅ 2 < 7

(2 + 2 = 4) → (2 ⋅ 2 = 4)

Each of these sentences can be translated into a program quite easily, since +, ⋅, =, and < are computable. We can translate the → in the third sentence by converting it into a conjunction:

## (2 + 2 = 4) → (2 ⋅ 2 = 4)

not(2 + 2 == 4 and not 2 * 2 == 4)Slightly less simple-looking are sentences with bounded quantifiers:

∀x < 10 (x + 0 = x)

∃x < 100 (x + x = x)

∀x < 5 ∃y < 7 (x > 1 → x⋅y = 12)

∃x < 5 ∀y < x ∀z < y (y⋅z ≠ x)

In each of these examples, the bounded quantifier could in principle be expanded out, leaving us with a finite quantifier-free sentence. This should suggest to us that adding bounded quantifiers doesn’t actually increase the computational difficulty.

We can translate sentences with bounded quantifiers into programs by converting each bounded quantifier to a for loop. The translation slightly differently depending on whether the quantifier is universal or existential:

def Aupto(n, phi):

for x in range(n):

if not phi(x):

return False

return Truedef Elessthan(n, phi):

for x in range(n):

if phi(x):

return True

return FalseNote that the second input needs to be a function; reflecting that it’s a sentence with free variables. Now we can quite easily translate each of the examples, using lambda notation to more conveniently define the necessary functions

## ∀x<10 (x + 0 = x)

Aupto(10, lambda x: x + 0 == x)

## ∃x<100 (x + x = x)

Elessthan(100, lambda x: x + x == x)

## ∀x<5 ∃y<7 ((x > 1) → (x*y = 12))

Aupto(5, lambda x: Elessthan(7, lambda y: not (x > 1 and x * y != 12)))

## ∃x<5 ∀y<x ∀z<y (y⋅z ≠ x)

Elessthan(5, lambda x: Aupto(x, lambda y: Aupto(y, lambda z: y * z != x)))Each of these programs, when run, determines whether or not the sentence is true. Hopefully it’s clear how we can translate any sentence with bounded quantifiers into a program of this form. And when we run the program, it will determine the truth value of the sentence in a finite amount of time.

So far, we’ve only talked about the simplest kinds of sentences, with no unbounded quantifiers. There are two names that both refer to this class: Π0 and Σ0. So now you know how to write a program that determines the truth value of any Σ0/Π0 sentence!

We now move up a level in the hierarchy, by adding unbounded quantifiers. These quantifiers must all appear out front and be the same type of quantifier (all universal or all existential).

Σ1 sentences: ∃x1 ∃x2 … ∃xk Phi(x1, x2, …, xk), where Phi is Π0.

Π1 sentences: ∀x1 ∀x2 … ∀xk Phi(x1, x2, …, xk), where Phi is Σ0.

Some examples of Σ1 sentences:

∃x ∃y (x⋅x = y)

∃x (x⋅x = 5)

∃x ∀y < x (x+y > x⋅y)

And some Π1 sentences:

∀x (x + 0 = x)

∀x ∀y (x + y < 10)

∀x ∃y < 10 (y⋅y + y = x)

We can translate unbounded quantifiers as while loops:

def A(phi):

x = 0

while True:

if not phi(x):

return False

x += 1

def E(phi):

x = 0

while True:

if phi(x):

return True

x += 1There’s a radical change here from the bounded case, which is that these functions are no longer guaranteed to terminate. A(Φ) never returns True, and E(Φ) never returns False. This reflects the nature of unbounded quantifiers. An unbounded universal quantifier is claiming something to be true of all numbers, and thus there are infinitely many cases to be checked. Of course, the moment you find a case that fails, you can return False. But if the universally quantified statement is true of all numbers, then the function will have to keep searching through the numbers forever, hoping to find a counterexample. With an unbounded existential quantifier, all one needs to do is find a single example where the statement is true and then return True. But if there is no such example (i.e. if the statement is always false), then the program will have to search forever.

I encourage you to think about these functions for a few minutes until you’re satisfied that not only do they capture the unbounded universal and existential quantifiers, but that there’s no better way to define them.

Now we can quite easily translate our example sentences as programs:

## ∃x ∃y (x⋅x = y)

E(lambda x: E(lambda y: x * x == y))

## ∃x (x⋅x = 5)

E(lambda x: x * x == 5)

## ∃x ∀y < x (x+y > x⋅y)

E(lambda x: Aupto(x, lambda y: x + y > x * y))

## ∀x (x + 0 = x)

A(lambda x: x + 0 == x)

## ∀x ∀y (x + y < 10)

A(lambda x: A(lambda y: x + y < 10))

## ∀x ∃y < 10 (y⋅y + y = x)

A(lambda x: Elessthan(10, y * y + y == x))The first is a true Σ1 sentence, so it terminates and returns True. The second is a false Σ1 sentence, so it runs forever. See if you can figure out if the third ever halts, and then run the program for yourself to see!

The fourth is a true Π1 sentence, which means that it will never halt (it will keep looking for a counterexample and failing to find one forever). The fifth is a false Π1 sentence, so it does halt at the first moment it finds a value of x and y whose sum is 10. And figure out the sixth for yourself!

The next level of the hierarchy involves alternating quantifiers.

Σ2 sentences: ∃x1 ∃x2 … ∃xk Φ(x1, x2, …, xk), where Φ is Π1.

Π2 sentences: ∀x1 ∀x2 … ∀xk Φ(x1, x2, …, xk), where Φ is Σ1.

So now we’re allowed sentences with a block of one type of unbounded quantifier followed by a block of the other type of unbounded quantifier, and ending with a Σ0 sentence. You might guess that the Python functions we’ve defined already are strong enough to handle this case (and indeed, all higher levels of the hierarchy), and you’re right. At least, partially. Try running some examples of Σ2 or Π2 sentences and see what happens. For example:

## ∀x ∃y (x > y)

A(lambda x: E(lambda y: x > y))It runs forever! If we were to look into the structure of this program, we’d see that A(Φ) only halts if it finds a counterexample to Φ, and E(Φ) only halts if it finds an example of Φ. In other words A(E(Φ)) only halts if A finds out that E(Φ) is false; but E(Φ) never halts if it’s false! The two programs’ goals are diametrically opposed, and as such, brought together like this they never halt on any input.

The same goes for a sentence like ∃x ∀y (x > y): for this program to halt, it would require that ∀y (x > y) is found to be true for some value of x, But ∀y (x > y) will never be found true, because universally quantified sentences can only be found false! This has nothing to do with the (x > y) being quantified over, it’s entirely about the structure of the quantifiers.

No Turing machine can decide the truth values of Σ2 and Π2 sentences. There’s a caveat here, related to the semantic version of the arithmetic hierarchy. It’s often possible to take a Π2 sentence like ∀x ∃y (y + y = x) and convert it to a logically equivalent but Π1 sentence like ∀x ∃y<x (y + y = x). This translation works, because y + y = x is only going to be true if y is less than or equal to x. Now we have a false Π1 sentence rather than a false Π2 sentence, and as such we can find a counterexample and halt.

We can talk about a sentence’s essential level on the arithmetic hierarchy, which is the lowest level of the logically equivalent sentence. It’s important to note here that “logically equivalent sentence” is a cross-model notion: A and B are logically equivalent if and only if they have the same truth values in every model of PA, not just the standard model. The soundness and completeness of first order logic, and the recursive nature of the axioms of PA, tells us that the set of sentences that are logically equivalent to a given sentence of PA is recursively enumerable. So we can generate these sentences by searching for PA proofs of equivalence and keeping track of the lowest level of the arithmetic hierarchy attained so far.

Even when we do this, we will still find sentences that have no logical equivalents below Σ2 or Π2. These sentences are essentially uncomputable; not just uncomputable in virtue of their form, but truly uncomputable in all of their logical equivalents. However, while they are uncomputable, they would become computable if we had a stronger Turing machine. Let’s take another look at the last example:

## ∀x ∃y (x > y)

A(lambda x: E(lambda y: x > y))Recall that the problem was that A(E(Φ)) only halts if E(Φ) returns False, and E(Φ) can only return True. But if we had a TM equipped with an oracle for the truth value of E(Φ) sentences, then maybe we could evaluate A(E(Φ))!

Let’s think about that for a minute more. What would an oracle for the truth value of Σ1 sentences be like? One thing that would work is if we could run E(Φ) “to infinity” and see if it ever finds an example, and if not, then return False. So perhaps an infinite-time Turing machine would do the trick. Another way would be if we could simply ask whether E(Φ) ever halts! If it does, then ∃y (x > y) must be true, and if not, then it must be false.

So a halting oracle suffices to decide the truth values of Σ1 sentences! Same for Π1 sentences: we just ask if A(Φ) ever halts and return False if so, and True otherwise.

If we run the above program on a Turing machine equipped with a halting oracle, what will we get? Now we can evaluate the inner existential quantifier for any given value of x. So in particular, for x = 0, we will find that Ey (x > y) is false. We’ve found a counterexample, so our program will terminate and return False.

On the other hand, if our sentence was true, then we would be faced with the familiar feature of universal quantifiers: we’d run forever looking for a counterexample and never find one. So to determine that this sentence is true, we’d need an oracle for the halting problem for this new more powerful Turing machine!

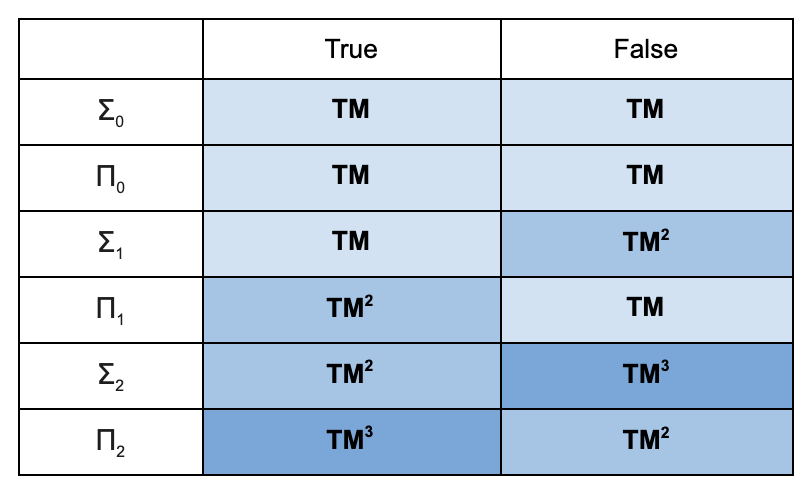

Here’s a summary of what we have so far:

TM = Ordinary Turing Machine

TM2 = TM + oracle for TM

TM3 = TM + oracle for TM2

The table shows what type of machine suffices to decide the truth value of a sentence, depending on where on the arithmetic hierarchy the sentence falls and whether the sentence is true or false.

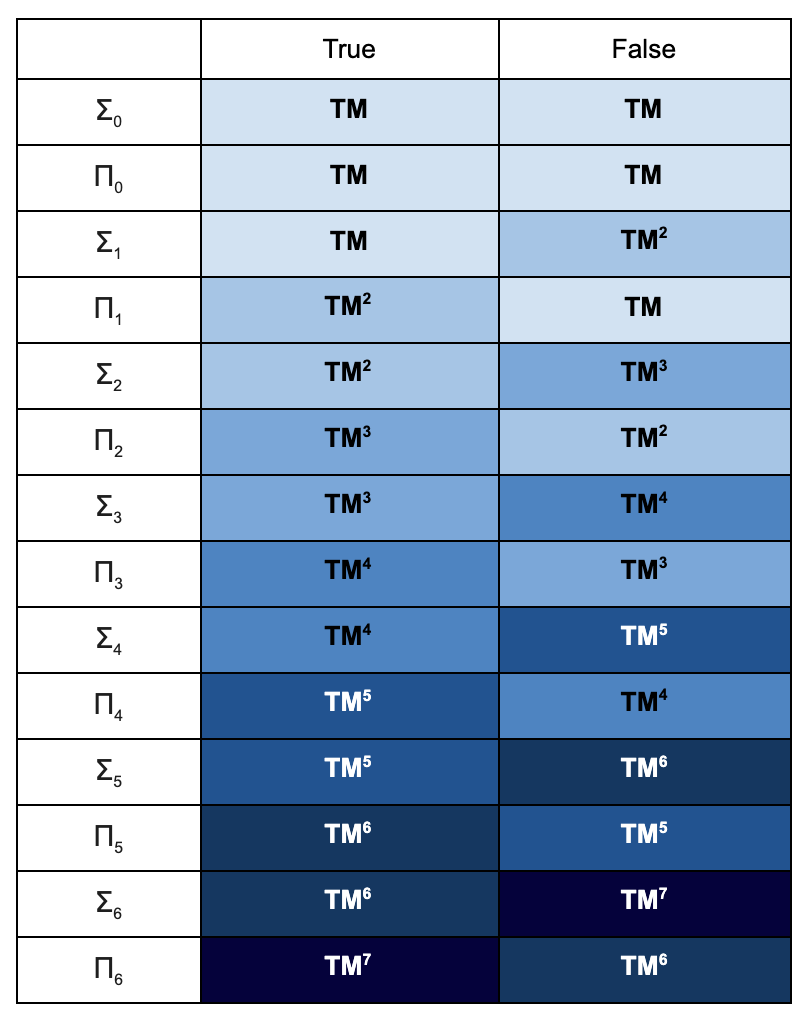

We’re now ready to generalize. In general, Σn sentences start with a block of existential quantifiers, and then alternate between blocks of existential and universal quantifiers n – 1 times before ending in a Σ0 sentence. Πn sentences start with a block of universal quantifiers, alternates quantifiers n – 1 times, and then ends in a Σ0 sentence. And as you move up the arithmetic hierarchy, it requires more and more powerful halting oracles to decide whether sentences are true:

If we define Σω to be the union of all the Σ classes in the hierarchy, and Πω the union of the Π classes, then deciding the truth value of Σω ⋃ Πω (the set of all arithmetic sentences) would require a TMω – a Turing machine with an oracle for TM, TM2, TM3, and so on. Thus the theory of true arithmetic (the set of all first-order sentences that are true of ℕ), is not only undecidable, it’s undecidable with a TM2, TM3, and TMn for every n ∈ ℕ. At every level of the arithmetic hierarchy, we get new sentences that are essentially on that level (not just sentences that are superficially on that level in light of their syntactic form, but sentences which, in their simplest possible logically equivalent form, lie on that level).

This gives some sense of just how hard math is. Just understanding the first-order truths of arithmetic requires an infinity of halting oracles, each more powerful than the last. And that says nothing about the second-order truths of arithmetic! That would require even stronger Turing machines than TMω – Turing machines that have halting oracles for TMω, and then TMs with oracles for that, and so on to unimaginable heights (just how high we must go is not currently known).