The analytic tableaux (also called truth trees) style of proof system is really nice. The tree structure tends to resemble the actual process of mathematical reasoning better than Hilbert-style linear proof systems do, and I find the formal proofs much easier to follow. For first-order logic (without equality), the system needs four additional inference rules to deal with quantifiers. There are a few different logically equivalent choices for these rules, but here is my favorite set:

Universal instantiation (∀): ∀x φ(x) ⊢ φ(t), where t is any closed term (any term without free variables)

Existential instantiation (∃): ∃x φ(x) ⊢ φ(a), where a is any new constant

Negated universal (¬∀): ¬∀x φ ⊢ ∃x ¬φ

Negated existential (¬∃): ¬∃x φ ⊢ ∀x ¬φ
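To make the four rules concrete, here is a minimal Python sketch of them acting on a tiny formula representation. Everything in it (the class names `Pred`, `Not`, `Forall`, `Exists`, the `c0, c1, ...` naming scheme for new constants, and the restriction of terms to plain names) is an assumption made for illustration, not part of any standard library. It also assumes constant names never clash with variable names.

```python
from dataclasses import dataclass
from itertools import count

@dataclass(frozen=True)
class Pred:
    name: str
    args: tuple  # names of variables or constants

@dataclass(frozen=True)
class Not:
    body: object

@dataclass(frozen=True)
class Forall:
    var: str
    body: object

@dataclass(frozen=True)
class Exists:
    var: str
    body: object

def substitute(formula, var, term):
    """Replace free occurrences of `var` by the closed term `term`."""
    if isinstance(formula, Pred):
        return Pred(formula.name, tuple(term if a == var else a for a in formula.args))
    if isinstance(formula, Not):
        return Not(substitute(formula.body, var, term))
    if isinstance(formula, (Forall, Exists)):
        if formula.var == var:  # `var` is rebound here, so nothing below is free
            return formula
        return type(formula)(formula.var, substitute(formula.body, var, term))
    raise TypeError(f"not a formula: {formula!r}")

def universal_instantiation(formula, closed_term):
    """∀x φ(x) ⊢ φ(t) for a closed term t chosen by the caller."""
    if not isinstance(formula, Forall):
        raise ValueError("expected a universal formula")
    return substitute(formula.body, formula.var, closed_term)

def existential_instantiation(formula, used_constants):
    """∃x φ(x) ⊢ φ(a) for a constant a not in `used_constants` (which is updated)."""
    if not isinstance(formula, Exists):
        raise ValueError("expected an existential formula")
    a = next(f"c{i}" for i in count() if f"c{i}" not in used_constants)
    used_constants.add(a)
    return substitute(formula.body, formula.var, a)

def negated_universal(formula):
    """¬∀x φ ⊢ ∃x ¬φ"""
    if not (isinstance(formula, Not) and isinstance(formula.body, Forall)):
        raise ValueError("expected a negated universal formula")
    return Exists(formula.body.var, Not(formula.body.body))

def negated_existential(formula):
    """¬∃x φ ⊢ ∀x ¬φ"""
    if not (isinstance(formula, Not) and isinstance(formula.body, Exists)):
        raise ValueError("expected a negated existential formula")
    return Forall(formula.body.var, Not(formula.body.body))
```

Note that `existential_instantiation` is the only rule that needs to know which constants are already in use, which is exactly the side condition "a is any new constant".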

The instantiation rules are actually quite interesting. Suppose that you’re working in the language of ZFC. This language has no constant or function symbols, only a single binary relation symbol: ∈, interpreted as “is an element of”. This seems to raise a problem for using the instantiation rules. Universal instantiation can only be applied with closed terms. But a language with no constants has no closed terms! And looking at existential instantiation, there’s a similar problem: we’re supposed to derive φ(a), where a is a new constant, but our language has no constants!

So what’s up? Does the standard first-order analytic tableaux approach not work for simple languages like that of ZFC? Insofar as ZFC is a shining beacon of what first-order logic can accomplish, this is a little disconcerting.

Actually, the instantiation rules work exactly as stated for ZFC. The subtlety in their interpretation is that when running proofs, you are allowed to (and in fact, often must) expand the language. So when you do existential instantiation, you don’t choose a constant symbol that already exists inside your language. Instead, you add a totally new constant symbol to your language, and then declare that φ holds of this symbol. Similarly, you can do universal instantiation in a language with no closed terms, simply by expanding the language to include some constants.

Now, if we’re expanding the language in the course of the proof, then who’s to say that in the end our proof will still be valid for our original language? Well, suppose we’re working in some minimal language L that has no constants. Let T be our background theory (the set of non-tautologies that we’re taking as assumptions). When we do existential instantiation on a sentence ∃x φ(x), we always create a new constant. Let’s call it a. We then expand the language to L’ = L ∪ {a}, and we expand our assumptions to T’ = T ∪ {φ(a)}. When we do universal instantiation on a sentence ∀x φ(x), we either use an existing closed term or create a new one. In the second case, we create a new constant b, expand the language to L’ = L ∪ {b}, and expand our assumptions to T’ = T ∪ {φ(b)}.
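The bookkeeping in this paragraph can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the language is represented by its set of constant symbols alone, sentences are plain strings, φ is passed in as a Python function from a term name to a sentence, and the helper names (`fresh_constant`, `existential_step`, `universal_step`) are made up for this sketch.

```python
from itertools import count

def fresh_constant(language):
    """Return a constant symbol that does not occur in `language`."""
    return next(f"c{i}" for i in count() if f"c{i}" not in language)

def existential_step(language, theory, phi):
    """From ∃x φ(x): add a new constant a to the language and φ(a) to the theory."""
    a = fresh_constant(language)
    return language | {a}, theory | {phi(a)}

def universal_step(language, theory, phi, term=None):
    """From ∀x φ(x): reuse the closed term `term` if given, else create a constant b."""
    if term is None:
        term = fresh_constant(language)
        language = language | {term}
    return language, theory | {phi(term)}

# A language with no constants, and an illustrative existential assumption.
L0 = frozenset()
T0 = frozenset({"∃x ∀y ¬(y ∈ x)"})

# Existential instantiation expands both the language and the assumptions.
L1, T1 = existential_step(L0, T0, lambda a: f"∀y ¬(y ∈ {a})")

# Universal instantiation can now reuse the constant that was just created.
L2, T2 = universal_step(L1, T1, lambda t: f"¬({t} ∈ c0)", term="c0")
```

The original theory is always a subset of the expanded one, and the language only grows when a constant is actually created.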

The important thing to note is that we haven’t done anything that invalidates any models of T! If T originally contained a sentence of the form ∀x φ(x), then adding b and declaring φ(b) doesn’t conflict with this: whatever b refers to, φ already holds of it. And if T originally contained a sentence of the form ∃x φ(x), then in every model of T at least one thing satisfies φ. So when we add the new constant a, that constant can just refer to any of these φ-satisfiers.
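The existential case can be stated a little more formally. This is a sketch of the usual expansion argument; the symbols $\mathcal{M}$, $\mathcal{M}'$ and $d$ are notation introduced here for illustration.

```latex
Suppose $\mathcal{M} \models T$ and $\exists x\, \varphi(x) \in T$.
Pick $d \in \mathrm{dom}(\mathcal{M})$ with $\mathcal{M} \models \varphi[d]$, and let
$\mathcal{M}'$ be $\mathcal{M}$ together with the interpretation $a^{\mathcal{M}'} = d$.
Then
\[
  \mathrm{dom}(\mathcal{M}') = \mathrm{dom}(\mathcal{M}), \qquad
  \mathcal{M}' \models T \cup \{\varphi(a)\}, \qquad
  \mathcal{M}' \restriction_{L} = \mathcal{M}.
\]
```

In words: every model of T can be turned into a model of the expanded theory just by deciding what the new constant names, without touching the domain or the interpretation of the original symbols.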

You might think: “But hold on! How can we be sure that it’s safe to just add new constants? Couldn’t we expand the domain too much, in a way that’s inconsistent with our original theory?” The answer to this is that the domain doesn’t have to expand to accommodate new constants! These constants can refer to existing elements of the domain. For instance, suppose T contains six existential statements and a sentence that says there are exactly five objects. Each time we run existential instantiation on one of the six existential sentences, we create a new constant. So we’ll get six new constants. But nothing forces these constants to refer to six different objects: several of them can refer to the same one! And since our theory already says that there are exactly five objects, every model of our expanded theory will also contain exactly five objects, so the new constants must refer to elements of the domain that already exist. No domain expansion!
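Here is a small brute-force sketch of the five-object example. The specific predicates are made-up data: each set lists the elements of a five-element domain that satisfy one of six hypothetical existential statements. The code enumerates every way of interpreting six new constants inside the existing domain so that each constant is a witness for its statement.

```python
from itertools import product

domain = range(5)  # a model with exactly five objects

# Hypothetical data: predicates[i] is the set of elements satisfying the
# i-th existential statement, so each set must be non-empty.
predicates = [{0}, {1, 2}, {2}, {3}, {4}, {0, 4}]

def interpretations(domain, predicates):
    """Yield each assignment of constants c0..c5 to domain elements
    such that constant i refers to a witness of predicate i."""
    for assignment in product(domain, repeat=len(predicates)):
        if all(value in sat for value, sat in zip(assignment, predicates)):
            yield assignment

witnesses = list(interpretations(domain, predicates))
```

Every assignment found this way sends the six constants into the same five-element domain, so at least two constants always name the same object.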

Ok, but how do we know that there isn’t some very complicated sentence ψ that was originally true in every model of T, but becomes false in some models of T’? To fully prove that this never happens, we could do induction over all sentences in the language, showing that any sentence that is entailed by T is also entailed by T’. But considering the details of the expansion process, and trying to come up with ways that the expansion might fail, is a good way to get an intuition for why this proof system works.

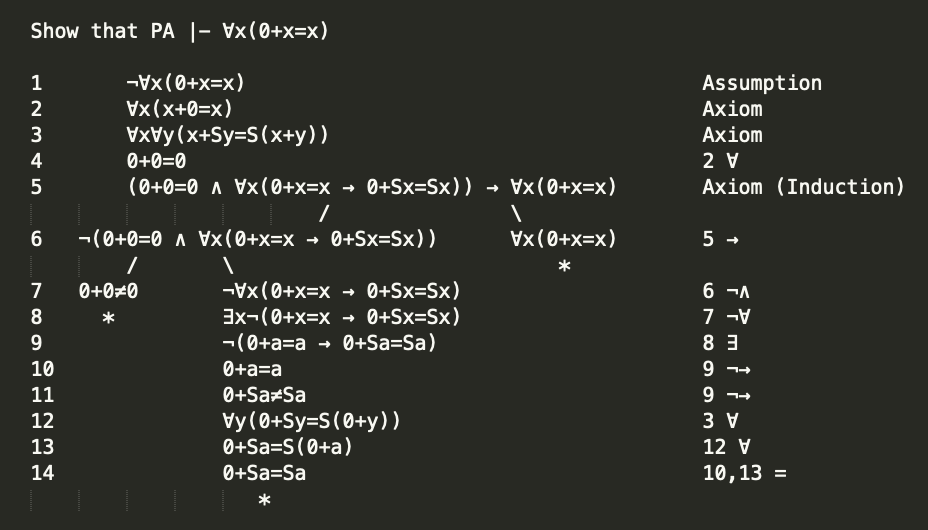

I’ll close with a few examples of using analytic tableaux to prove basic results in PA and ZFC. Think of this as a proof of concept! (Each of these proofs also uses some rules regarding equality, which are obvious enough even though I haven’t explicitly defined them.)

First, we show that PA proves ∀x (0 + x = x). This is nontrivial, even though ∀x (x + 0 = x) is an axiom!

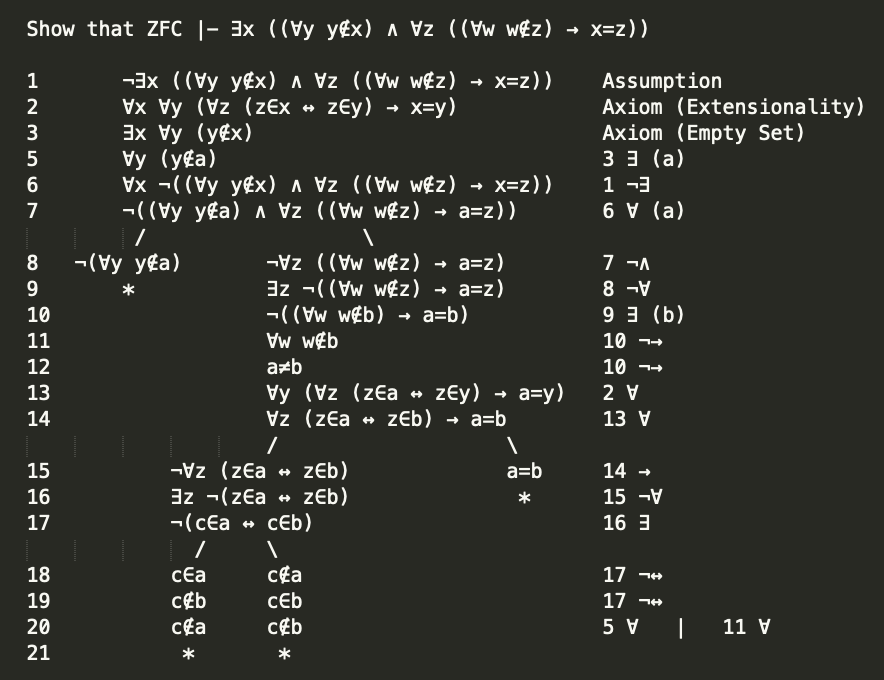
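The informal argument that a proof like this formalizes is a single induction on x. A sketch, assuming the usual recursive axioms for addition in PA (with S as the successor function):

```latex
\begin{align*}
\text{Axioms:}\quad & x + 0 = x, \qquad x + S(y) = S(x + y) \\
\text{Base case:}\quad & 0 + 0 = 0 && \text{(first axiom, with } x = 0\text{)} \\
\text{Inductive step:}\quad & 0 + S(x) = S(0 + x) = S(x) && \text{(second axiom, then the hypothesis } 0 + x = x\text{)}
\end{align*}
```

The induction axiom for the formula 0 + x = x then gives ∀x (0 + x = x).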

Next, a proof from ZFC that the empty set exists and is unique:

Now a proof from PA that addition is commutative. This one is shockingly complicated for such a simple concept, and requires three uses of induction, but this goes to show how basic the axioms of PA really are! If you look closely, you’ll also notice that this proof replicates the proof of ∀x (0 + x = x) from above!

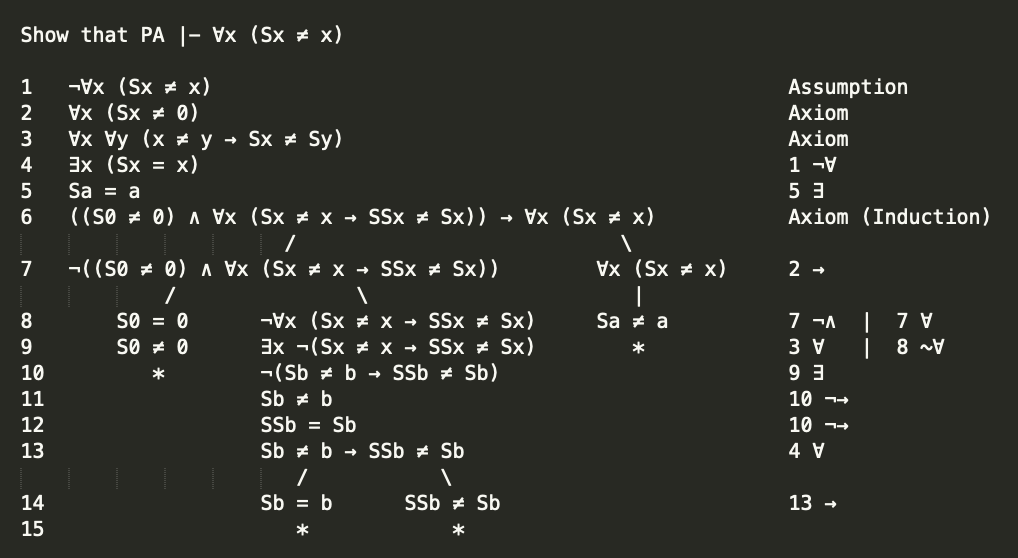

Final example: here’s a PA proof that no number is its own successor. This rules out nonstandard models of arithmetic with one nonstandard number!
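The informal argument here is again an induction. A sketch, assuming the two standard successor axioms of PA:

```latex
\begin{align*}
\text{Axioms:}\quad & S(x) \neq 0, \qquad S(x) = S(y) \rightarrow x = y \\
\text{Base case:}\quad & 0 \neq S(0) && \text{(first axiom, with } x = 0\text{)} \\
\text{Inductive step:}\quad & S(x) = S(S(x)) \rightarrow x = S(x) && \text{(second axiom)}
\end{align*}
```

Taking the contrapositive of the inductive step, x ≠ S(x) implies S(x) ≠ S(S(x)), so induction gives ∀x (x ≠ S(x)).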