Morality is one of those weird areas where I have an urge to systematize my intuitions, despite believing that these intuitions don’t reflect any objective features of the world.

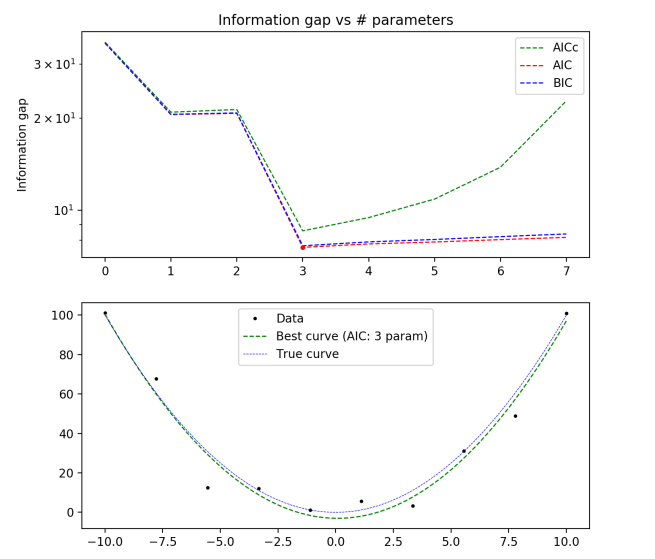

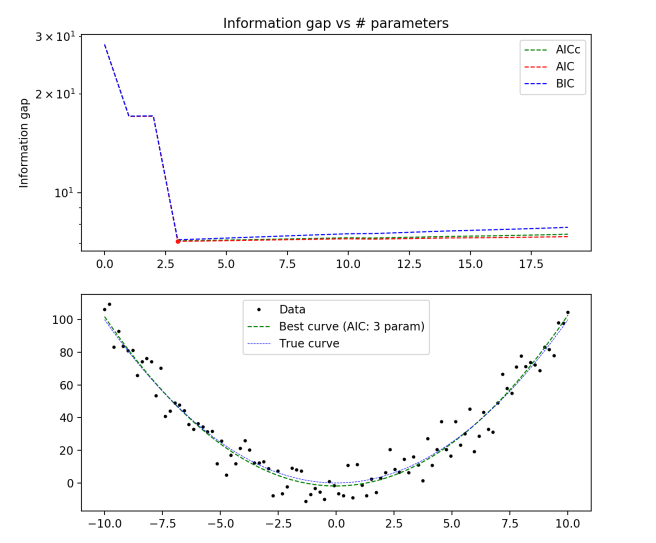

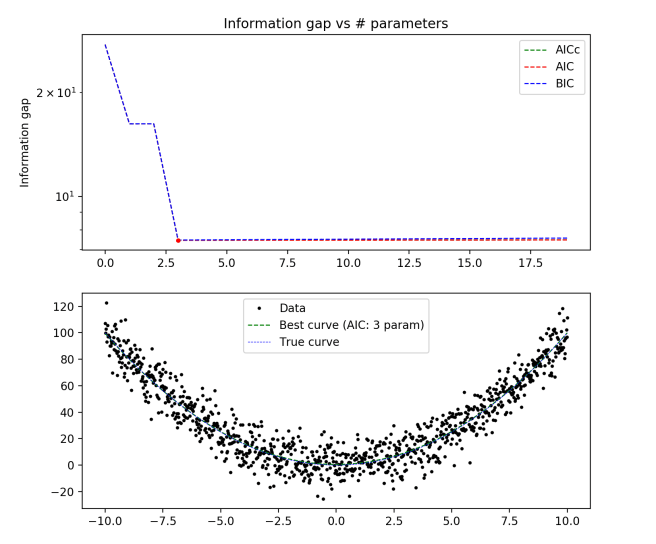

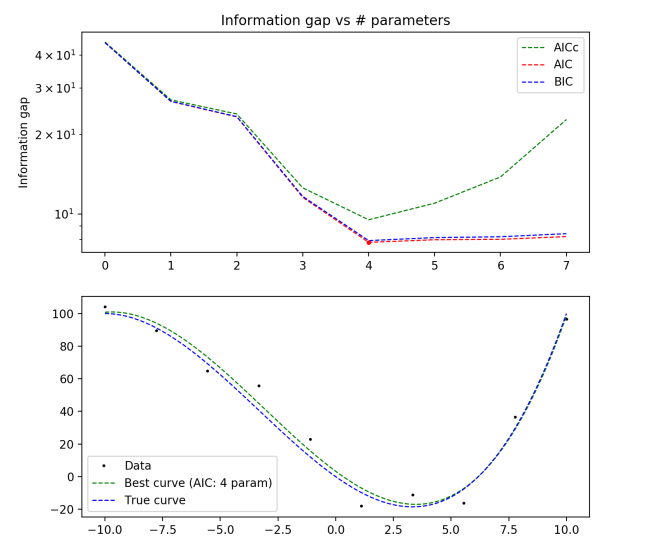

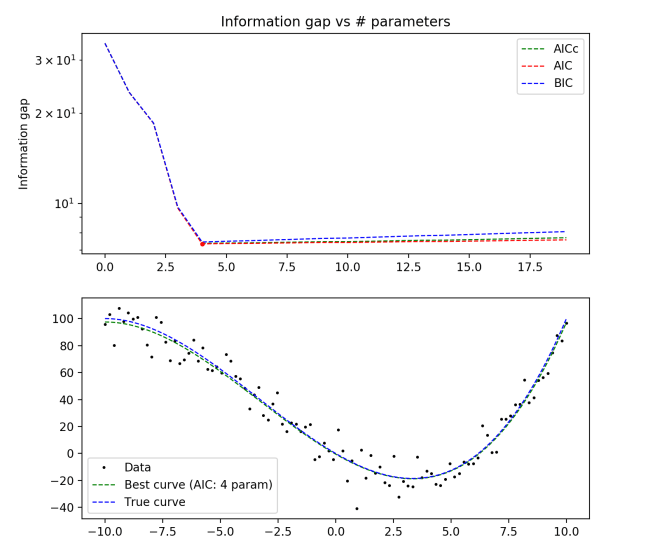

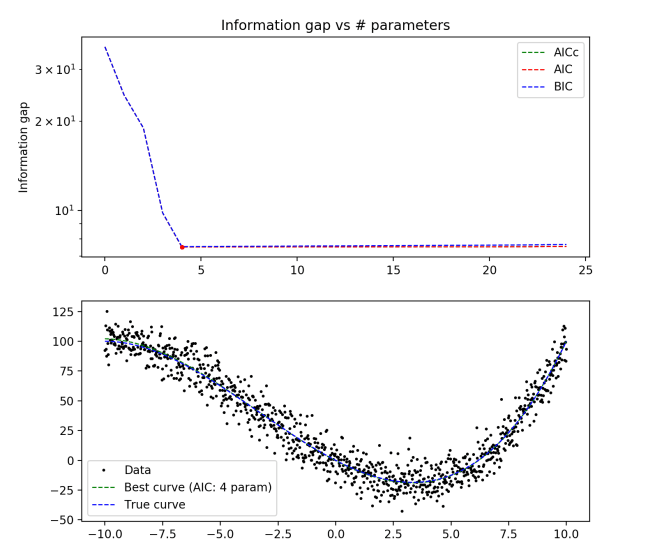

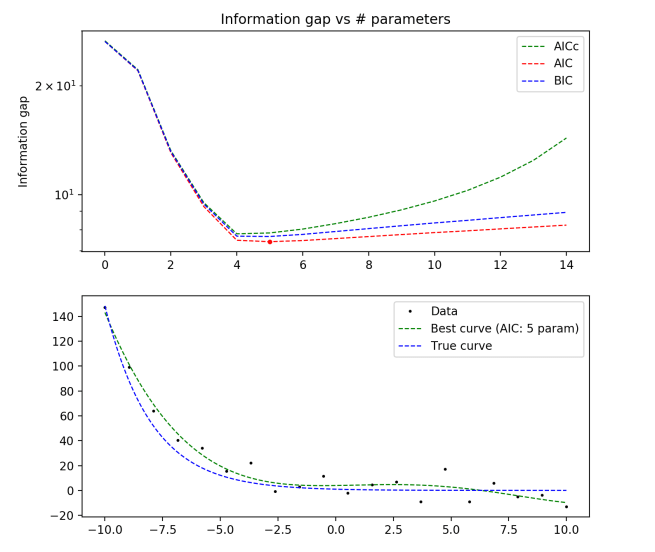

In the language of model selection, it feels like I’m trying to fit the data of my moral intuitions to some simple underlying model, and not overfit to the noise in the data. But the concept of “noise” here makes little sense… if I were really a moral nihilist, then I would see the only sensible task with respect to ethics as a descriptive task: describe my moral psychology and the moral psychology of others. If ethics is like aesthetics, fundamentally a matter of complex individual preferences, then there is no reality to be found by paring down your moral framework into a tight neat package.

You can do a good job at analyzing how your moral cognitive system works and trying to understand the reasons that it is the way it is. But once you’ve managed a sufficiently detailed description of your moral intuitions, then it seems like you’ve exhausted the realm of interesting ethical thinking. Any other tasks seem to rely on some notion of an actual moral truth out there that you’re trying to fit your intuitions to, or at least a notion of your “true” moral beliefs as a simple set of principles from which your moral feelings and intuitions arise.

Despite the fact that I struggle to see any rational reason for systematizing ethics, I find myself doing so fairly often. The strongest systematizing urge I feel in analyzing ethics is the urge towards generality. A simple general description that successfully captures many of my moral intuitions feels much better than a complicated high-order description of many disconnected intuitions.

This naturally leads to issues with consistency. If you are satisfied with just describing your moral intuitions in every situation, then you can never really be faced with accusations of inconsistency. Inconsistency arises when you claim to agree with a general moral principle, and yet have moral feelings that contradict this principle.

It’s the difference between “It was unjust when X shot Y the other day in location Z” and “It is unjust for people to shoot each other”. The first doesn’t entail any conclusions about other similar scenarios, while the second entails an infinity of moral beliefs about similar scenarios.

Now, getting to utilitarianism. Utilitarianism is the (initially nice-sounding) moral principle that moral action is that which maximizes happiness (/ well-being / sentient flourishing / positive conscious experiences). In any situation, the moral calculation done by a utilitarian is to impartially consider the consequences of all possible actions on the happiness of all conscious beings, and then take the action that maximizes expected total happiness.
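As a rough sketch of that calculation, here is one way it could be written down. Everything in it is an assumption made for illustration: the action names, the beings, the probabilities, and the happiness numbers are invented, and simply summing happiness across beings is only one of several ways a utilitarian might aggregate.

```python
from typing import Dict, List, Tuple

# One possible outcome of an action: its probability, plus the change in
# happiness it causes for each affected being (hypothetical units).
Outcome = Tuple[float, Dict[str, float]]


def expected_total_happiness(outcomes: List[Outcome]) -> float:
    """Probability-weighted sum of happiness changes, counting every being equally."""
    return sum(p * sum(changes.values()) for p, changes in outcomes)


def utilitarian_choice(actions: Dict[str, List[Outcome]]) -> str:
    """Return the name of the action with the highest expected total happiness."""
    return max(actions, key=lambda name: expected_total_happiness(actions[name]))


# Made-up example: keeping a promise helps Alice a little for certain, while
# breaking it hurts Alice for certain and helps Bob only half of the time.
actions = {
    "keep_promise": [(1.0, {"alice": 1.0, "bob": 0.0})],
    "break_promise": [
        (0.5, {"alice": -2.0, "bob": 4.0}),
        (0.5, {"alice": -2.0, "bob": 0.0}),
    ],
}

print(utilitarian_choice(actions))
```

Note that nothing in this procedure looks at who is affected or how, only at the totals, which is exactly the impartiality the principle asks for.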

While the basic premise seems obviously correct upon first consideration, a lot of the conclusions that this style of thinking ends up endorsing seem horrifically immoral. A hard-line utilitarian approach to ethics yields prescriptions for actions that are highly unintuitive to me. Here’s one of the strongest intuition-pumps I know of for why utilitarianism is wrong:

Suppose that there is a doctor that has decided to place one of his patients under anesthesia and then rape them. This doctor has never done anything like this before, and would never do anything like it again afterwards. He is incredibly careful to not leave any evidence, or any noticeable after-effects on the patient whatsoever (neither physical nor mental). In addition, he will forget that he ever did this soon after the patient leaves. In short, the world will be exactly the same one day down the line whether he rapes his patient or not. The only difference in terms of states of consciousness between the world in which he commits the violation and the world in which he does not, will be a momentary pleasurable orgasm that the doctor will experience.

In front of you sits a button. If you press this button, then a nurse assistant will enter the room, preventing the doctor from being alone with the patient and thus preventing the rape. If you don’t, then the doctor will rape his patient just as he has planned. Whether or not you press the button has no other consequences for anybody, including yourself (e.g., if knowing that you hadn’t prevented the rape would make you feel bad, then you will forget that you had anything to do with it immediately after making your choice.)

Two questions:

1. Is it wrong for the doctor to commit the rape?

2. Should you press the button to stop the doctor?

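The utilitarian is committed to answering ‘No’ to both questions: by construction, the world in which the rape happens contains strictly more happiness, so the act is not wrong, and preventing it would only reduce total happiness.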

As far as I can tell, there is no way out of this conclusion for Question 1. Question 2 allows a little more wiggle room; one might say that it is impossible for your choice about the button to have no effect on your own mental state as you make it, unless you are completely without conscience. A follow-up question might then be whether you should temporarily disable your conscience, if you could, in order to neutralize the negative mental consequences of that choice. Again, the utilitarian seems to give the wrong answer.

This thought experiment is pushing on our intuitions about autonomy and consent, which the utilitarian considers only instrumentally valuable, rather than intrinsically so. If you feel pretty icky about utilitarianism right now, then, well… I said it was the strongest anti-utilitarian intuition pump I know.

With that said, how can we formalize a system of ethics that takes into account not just happiness, but also the intrinsic importance of things like autonomy and consent? As far as I’ve seen, every such attempt ends up looking really shabby and accepting unintuitive moral conclusions of its own. And among all of the ethical systems that I’ve seen, none does as good a job as utilitarianism at capturing so many of my ethical intuitions in such a simple formalization.

So this is where I am at with utilitarianism. I intrinsically value a bunch of things besides happiness. If I am simply engaging in the purely descriptive project of ethics, then I am far from a utilitarian. But the more I systematize my ethical framework, the more utilitarian I become. If I heavily optimize for consistency, I end up a hard-line utilitarian, biting all of the nasty bullets in favor of the simplicity and generality of the utilitarian framework. I’m just not sure why I should spend so much mental effort systematizing my ethical framework.

This puts me in a strange position when it comes to actually making decisions in my life. While I don’t find myself in positions in which the utilitarian option is as horrifically immoral as in the thought experiment I’ve presented here, I still am sometimes in situations where maximizing net happiness looks like it involves behaving in ways that seem intuitively immoral. I tend to default towards the non-utilitarian option in these situations, but don’t have any great principled reason for doing so.